- Private equity firms are facing an AI value gap: pilots and investment are up, but measurable returns are still limited. As holding periods stretch and LPs put more pressure on value creation, AI has to become an operating improvement lever, not another experiment.

- Closing that gap starts with what sponsors standardize. The strongest path is a governed, model-agnostic Work AI layer, not a single model. That gives firms a way to connect existing systems, preserve permissions, support multiple LLMs, and turn one lighthouse deployment into repeatable portfolio playbooks across similar holdings.

- Early Glean deployments at a healthcare technology PortCo and a PE-backed ERP software company show what that looks like in practice: strong adoption, clear ROI, faster onboarding, and higher developer productivity. They also point to where sponsors can start — GTM, engineering, finance, and support — and how to measure progress: time-to-value and AI-attributed EBITDA impact, not seats or pilot count.

Private equity firms have no shortage of AI pilots. What they lack is a clearer path from those pilots to measurable, repeatable value.

There’s a growing disconnect between where private equity is directing its AI capital and where AI value is captured. In early March, Reuters reported that Anthropic was in talks with Blackstone and Hellman & Friedman on an AI-focused JV built for portfolio-wide deployment, while OpenAI reportedly explored a multibillion-dollar enterprise deal with a consortium of private equity sponsors.

On the surface, these deals look like a race for model access, pricing leverage, and competitive advantage. Underneath, Operating Partners are asking a more fundamental question: Where does AI hit the P&L, and what do you need to standardize across the portfolio to make that repeatable?

As firms work to improve outcomes across existing holdings, support value-creation plans, and strengthen exit readiness, the choice comes down to two paths. One is to anchor AI strategy to a single model relationship and inherit that vendor’s roadmap, economics, and constraints. The other is to standardize on an open Work AI platform that connects natively to portfolio company systems, preserves model flexibility, and makes value capture more repeatable across the fund.

The firms that create the most value will be the ones that build a durable foundation for deployment, governance, and reuse across the portfolio, while preserving option value around models and AI apps.

Why so many firms are still stuck between pilots and scale

In private equity, the debate isn’t whether AI matters. It’s why, despite so much AI activity, so little of it is showing up in measurable value.

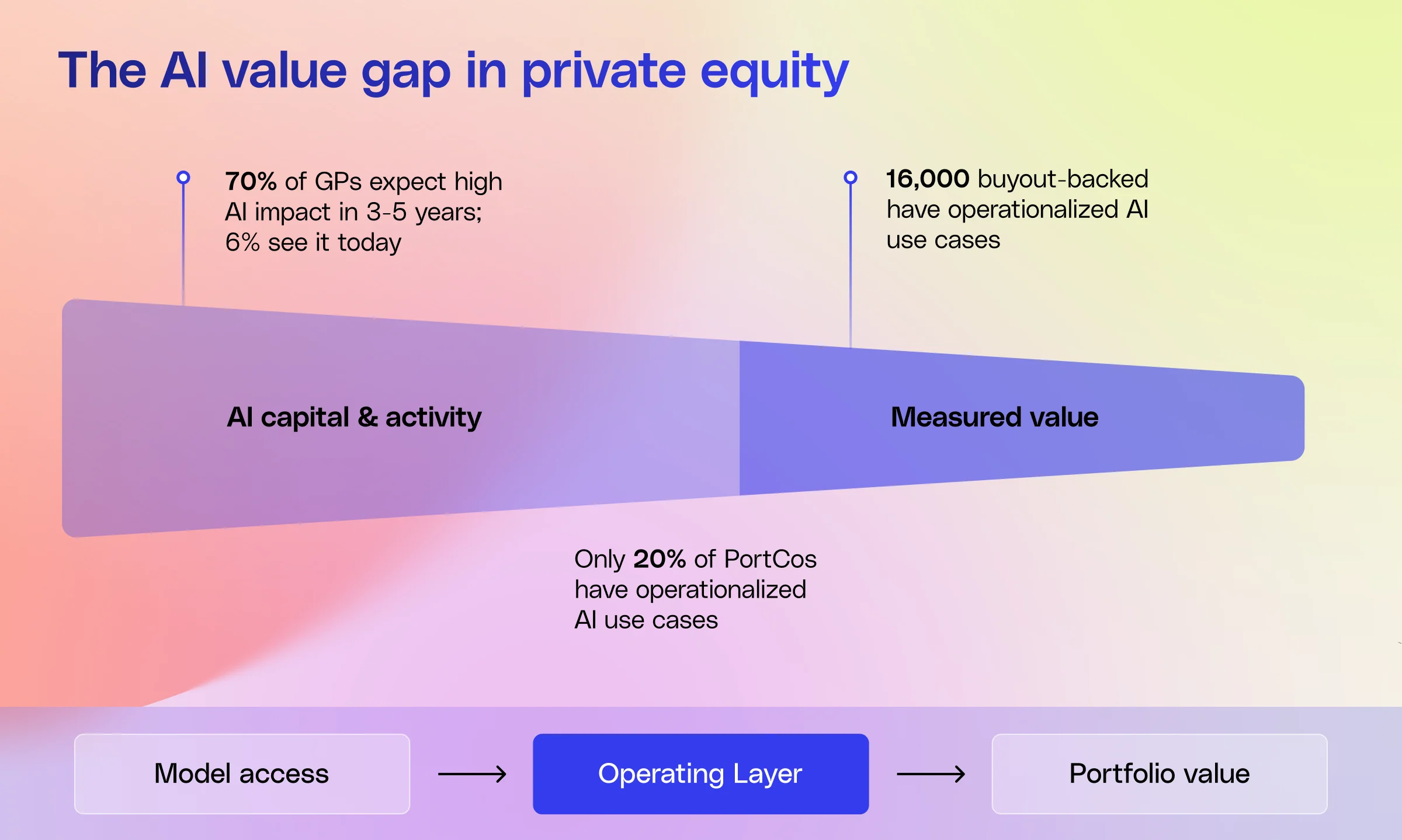

Part of the answer is timing. AI has moved quickly from experimentation to expectation. McKinsey’s 2026 Global Private Equity Report found that 70% of GPs expect AI to deliver high impact in their own operations in 3–5 years, yet only 6% see it doing so today. LPs are watching closely: 53% now rank a GP's AI value creation strategy among their top five criteria when selecting a manager. The patience for AI as a forward-looking promise is running out.

Yet most portfolios aren't close to delivering. Bain’s 2025 Global Private Equity Report, which tracks roughly $3.2 trillion in AUM, found that only 20% of portfolio companies have operationalized use cases that deliver measurable returns. Many of the remaining 80% are trapped in "pilot purgatory" — experimenting in silos and duplicating costs without a shared playbook or economies of scale.

This gap is colliding with a liquidity crunch. McKinsey points to a related pressure: 16,000 buyout-backed companies, or 52% of total buyout-backed inventory, have already passed the four-year hold mark, a record high. Average holding periods are stretching toward 6.6 years. For sponsors managing aging assets, that raises the bar for margin expansion and exit readiness. In that environment, AI matters less as a future-facing experiment and more as a lever for operating improvement inside the current portfolio.

In this climate, AI can’t remain a side initiative. It has to show up in the value-creation plan, owned by the management team and Operating Partners, with a clear path from early proof points to repeatable value across the fund.

Real-world examples of repeatable AI value

Two recent Glean deployments show what measurable AI value can look like in practice.

Regulated knowledge

A healthcare technology PortCo rolled out a centralized AI platform across 1,800 employees in a complex, compliance-heavy environment. Within six months, adoption reached 95%, teams had built more than 1,100 agents, and the PortCo was tracking a 2.4x ROI from business process and IT architecture improvements alone and a ~20x ROI from just eight use cases — with a repeatable template now being applied to other regulated assets in the fund.

Accelerating R&D velocity

At a PE-backed ERP software company with roughly 1,600 employees, a similar platform connected Slack, Teams, and code repositories through a single permissions-aware context layer. The results within six months: adoption reached 100%, onboarding timelines for new joiners fell by 50%, developer productivity rose by up to 18%, and the sponsor attributed more than $1.65 million in realized, annualized productivity gains to the platform, with room for additional upside.

The common thread across both deployments is the platform layer: a model-agnostic, permissions-aware system that connects proprietary data across the tools employees already use. That infrastructure stays with the PortCo at exit. And because the LLM is a swappable component, those gains are not tied to any single model.

That raises the practical question: what do sponsors need to standardize to make that kind of value repeatable across the portfolio?

What sponsors need to standardize

As sponsors move from experimentation to execution, the strategic question sharpens: what, exactly, should be standardized across the portfolio?

The answer isn't every workflow, use case, or model decision. It's the operating layer that determines how AI gets deployed, governed, and reused across companies. That's where sponsors can create consistency without locking themselves into the wrong constraint too early.

The model-centric bet

A model-centric strategy secures access to a leading model, negotiates favorable economics, and uses that relationship to accelerate adoption across the portfolio. The same logic often extends to broader enterprise AI paths, where tools like Copilot or Gemini Enterprise are attractive because firms already have vendor relationships, pricing agreements, and security frameworks in place.

There’s some logic to that approach. A single vendor can offer privileged access, volume-based pricing, a simpler security narrative, and a faster path to early deployment. But the risks are just as real. Models are changing quickly, and enterprise fit still varies by use case. What creates simplicity early can create rigidity later, especially across a portfolio of very different businesses.

The platform-first path

A platform-first strategy starts from a different premise. Instead of asking which model to anchor to, it asks which capabilities the fund wants to make repeatable across the portfolio.

In practice, that means standardizing the operating layer — how AI connects to core systems, enforces permissions, and runs governed workflows, all on an architecture that can support different models over time. The model still matters, but it’s no longer the strategy. It’s a replaceable part inside a reusable operating layer.
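One way to picture a replaceable model inside a reusable operating layer is the minimal Python sketch below. It is an illustration under stated assumptions, not a description of any vendor's implementation: `LanguageModel`, `EchoModel`, `OperatingLayer`, and `Document` are hypothetical names, and the group-based check is a simplified stand-in for permissions inherited from source systems.

```python
from dataclasses import dataclass
from typing import Protocol


class LanguageModel(Protocol):
    """Anything with a complete() method can act as the model (assumed interface)."""

    def complete(self, prompt: str) -> str: ...


@dataclass(frozen=True)
class Document:
    text: str
    allowed_groups: frozenset[str]  # groups carried over from the source system


class EchoModel:
    """Stand-in model for illustration; a real deployment would call a vendor API."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


class OperatingLayer:
    """Holds connected content and permission logic; the model is just a field."""

    def __init__(self, model: LanguageModel, documents: list[Document]) -> None:
        self.model = model
        self.documents = documents

    def visible_context(self, user_groups: set[str]) -> list[str]:
        # Use only documents the user could already open in the source system.
        return [d.text for d in self.documents if d.allowed_groups & user_groups]

    def ask(self, question: str, user_groups: set[str]) -> str:
        context = " | ".join(self.visible_context(user_groups))
        return self.model.complete(f"context: {context} ; question: {question}")


# Hypothetical content and groups, for illustration only.
docs = [
    Document("Q3 board deck", frozenset({"finance"})),
    Document("Onboarding guide", frozenset({"finance", "engineering"})),
]
layer = OperatingLayer(EchoModel("vendor-a"), docs)
before = layer.ask("How do I get set up?", {"engineering"})

layer.model = EchoModel("vendor-b")  # swap the model; nothing else changes
after = layer.ask("How do I get set up?", {"engineering"})
```

Swapping `layer.model` for any other object with a `complete` method leaves the permission filter and the workflow code untouched, which is the property the platform-first argument relies on.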

That’s the value of a model-agnostic approach. It gives sponsors the flexibility to swap the underlying model over time while keeping the workflows, context, and operating capability in place. At exit, that capability remains embedded in the portfolio company’s operating model as portable IP rather than tethered to a single third-party vendor.

That logic lines up with the broader research. BCG’s 10-20-70 rule argues that only 10% of the effort (and value) lies in the algorithm itself, 20% lies in data and infrastructure, and fully 70% lies in the process and governance transformation required to make the technology stick.

What that looks like in practice

For private equity, a platform-first approach has to be concrete. It means standardizing on infrastructure that can work across different businesses, teams, and tech stacks:

- Deep integrations into the tools portfolio companies already use

- A permissions-aware context graph that maps company knowledge across documents, tickets, code, and other core systems

- Orchestration that can route work to the LLM best suited to the task based on complexity, cost, and risk

- Model flexibility, so sponsors aren't tied to one vendor roadmap

- Fast deployment, so teams can prove value quickly and scale what works
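The routing idea in the list above can be sketched in a few lines of Python. This is a hedged illustration, not how any particular product routes work: the `ModelProfile` and `Task` types, the 1-to-5 complexity scale, the relative costs, and the model names are all assumptions made up for the example. The rule shown is simply "choose the cheapest model that is capable enough and cleared for the data involved."

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str                     # hypothetical label, not a real product
    max_complexity: int           # highest task complexity (1-5) it handles well
    cost_per_task: float          # illustrative relative cost
    approved_for_sensitive: bool  # cleared for regulated or confidential data


@dataclass(frozen=True)
class Task:
    complexity: int  # 1 (simple) to 5 (hard)
    sensitive: bool  # touches regulated or confidential data


def route(task: Task, models: list[ModelProfile]) -> ModelProfile:
    """Return the cheapest model that meets the task's complexity and risk needs."""
    eligible = [
        m
        for m in models
        if m.max_complexity >= task.complexity
        and (m.approved_for_sensitive or not task.sensitive)
    ]
    if not eligible:
        raise LookupError("no model satisfies the complexity and risk constraints")
    return min(eligible, key=lambda m: m.cost_per_task)


# Made-up catalog for illustration only.
MODELS = [
    ModelProfile("small-fast", 2, 1.0, approved_for_sensitive=False),
    ModelProfile("mid-private", 3, 3.0, approved_for_sensitive=True),
    ModelProfile("large-general", 5, 10.0, approved_for_sensitive=False),
    ModelProfile("large-private", 5, 14.0, approved_for_sensitive=True),
]

simple_public = route(Task(complexity=1, sensitive=False), MODELS)
simple_regulated = route(Task(complexity=2, sensitive=True), MODELS)
hard_regulated = route(Task(complexity=5, sensitive=True), MODELS)
```

Because the catalog is data rather than code, adding or retiring a model changes one list entry and leaves the routing rule and the workflows that call it alone.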

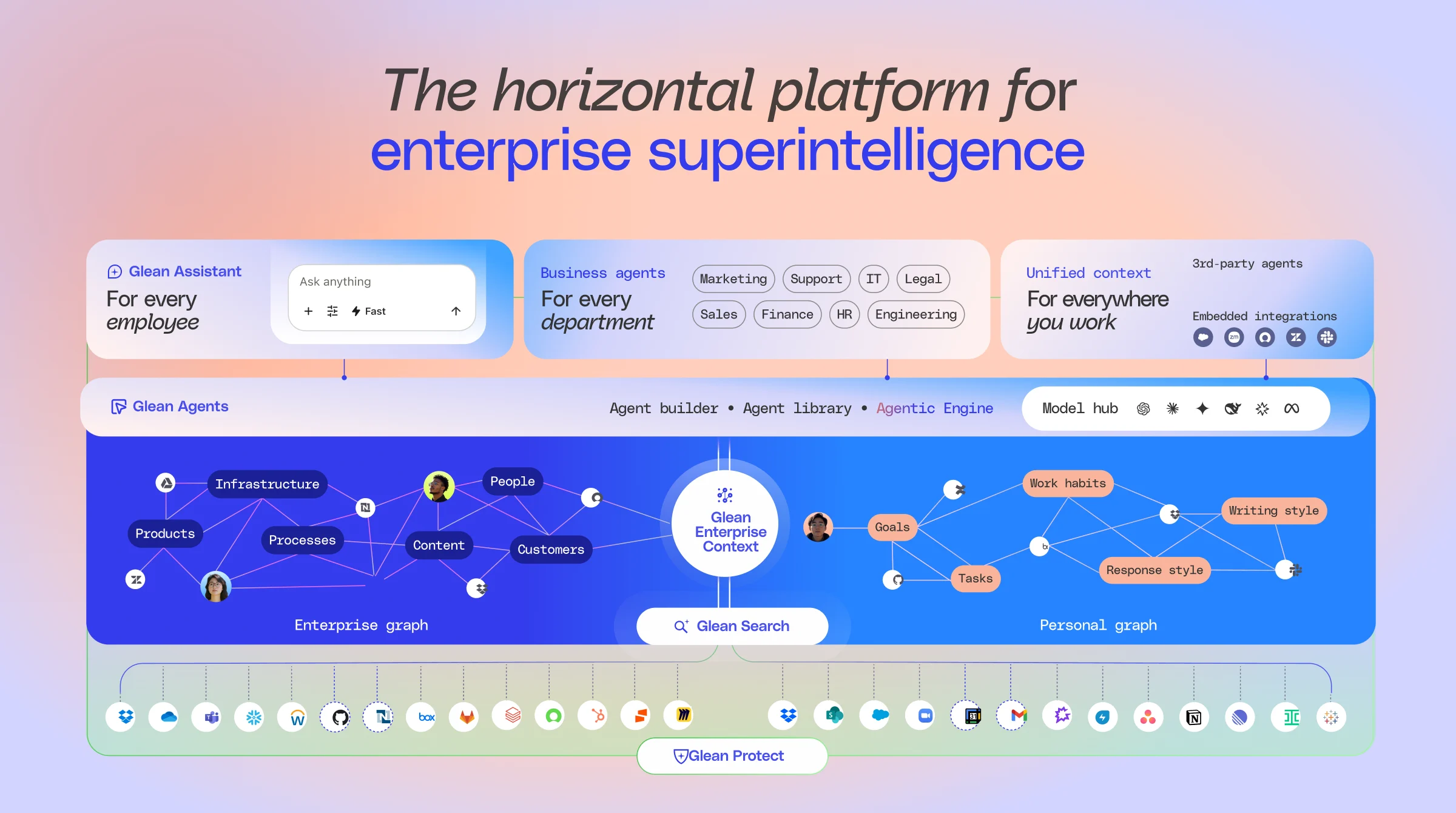

This is where Glean fits. Glean is designed to help firms standardize on a governed AI layer across the fund and portfolio. It natively connects to more than 120 data sources, supports custom connectors, and builds a personalized knowledge graph on top of company data and workflows. It also enforces inherited permissions, supports multiple leading LLMs and flexible hosting models, and can be deployed in as little as two to four weeks with measurable time-to-value.

That combination matters because it maps to how sponsors actually drive value: through repeatable value-creation motions tied to cost reduction, operational efficiency, revenue growth, and multiple expansion.

From lighthouse deployment to portfolio playbook

Taken together, the two deployments described earlier aren’t AI showpieces; they’re operating patterns. In each case, the sponsor started with one portfolio company, tied AI to a specific value-creation lever, and then turned that motion into something they could reuse.


The sequence should be:

- Prove value in one company. Start with a strong sponsor, a clear business priority, and a measurable outcome tied to a value-creation lever.

- Codify what worked. Define the deployment motion, governance model, and rollout approach in terms the next company can reuse.

- Scale across similar holdings. Apply the same pattern across companies, functions, or problems where the conditions are similar.

Platform choice is what makes this scale. With a common, governed AI layer, each lighthouse win becomes a portfolio playbook; without it, it stays locked inside a single portfolio company.

Where value shows up first

The first wave of AI value in private equity doesn’t come from edge-case experiments. It comes from existing business priorities with a clear value-creation lever.

At the portfolio-company level, that often means a focused set of operational priorities:

- Go-to-market productivity and sales execution.

- Engineering velocity and onboarding.

- Finance efficiency, reporting, and close acceleration.

- Customer support automation and triage.

- Support and back-office workflows, where scattered knowledge and manual work slow teams down.

These are practical places to start because the business case is easier to define and the path to proof is shorter. Sponsors can tie deployment to outcomes like faster employee ramp time, lower software spend, reduced manual work, and more productive use of internal expertise.

At the fund level, the same approach can support work across:

- Due diligence

- Portfolio management

- Investor relations

- Internal collaboration

- Market analysis

When sponsors standardize this well, the upside compounds:

- Better procurement and governance leverage

- Higher confidence in AI risk management

- Faster time-to-value

- More consistent deployment patterns across portfolio companies

- A stronger exit narrative built on measurable, operationalized AI use

The goal is to start where value is already visible, prove it in business terms, and build from there.

The new mandate for sponsors

Private equity’s next phase of AI adoption will look different from the last. The firms that win will be the ones that standardize a small set of repeatable playbooks on top of a governed platform layer that can scale across the portfolio.

Making that shift requires three changes:

1. Treat model choice as flexible.

Model choice should stay reversible. Platform choice should be more durable. Sponsors need room to adapt as the market changes without rebuilding the operating layer every time the model landscape shifts.

2. Move from pilots per company to playbooks per fund.

The goal isn't to prove AI separately in every portfolio company. It's to identify repeatable use cases, cluster similar holdings by function or operating need, and deploy the same playbook across the portfolio.

3. Measure like an investor.

Stop tracking seats as the main signal of progress. Track time-to-value, AI-attributed EBITDA impact against the original value creation plan, and how quickly a successful deployment can be repeated across the portfolio.
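As a rough illustration of measuring like an investor, the Python sketch below computes the three signals named above. The field names, the formulas, and every number in the example are assumptions for illustration; they are not drawn from the deployments described earlier, and a real value-creation plan would define attribution far more carefully.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Deployment:
    company: str
    kickoff: date
    first_measured_value: date       # date the first measurable outcome was booked
    planned_ebitda_impact: float     # target from the value-creation plan
    attributed_ebitda_impact: float  # impact attributed to AI so far


def time_to_value_days(d: Deployment) -> int:
    """Days from kickoff to the first measured outcome."""
    return (d.first_measured_value - d.kickoff).days


def plan_attainment(d: Deployment) -> float:
    """AI-attributed EBITDA impact as a fraction of the planned impact."""
    if d.planned_ebitda_impact <= 0:
        raise ValueError("planned EBITDA impact must be positive")
    return d.attributed_ebitda_impact / d.planned_ebitda_impact


def replication_speedup(first: Deployment, repeat: Deployment) -> float:
    """How many times faster the repeat deployment reached value than the first."""
    return time_to_value_days(first) / time_to_value_days(repeat)


# Hypothetical figures, for illustration only.
lighthouse = Deployment("PortCo A", date(2025, 1, 6), date(2025, 4, 6), 2_000_000, 1_500_000)
follow_on = Deployment("PortCo B", date(2025, 5, 1), date(2025, 6, 15), 1_000_000, 400_000)

ttv = time_to_value_days(lighthouse)                  # 90
attainment = plan_attainment(lighthouse)              # 0.75
speedup = replication_speedup(lighthouse, follow_on)  # 2.0
```

A replication speedup above 1.0 is one simple signal that a lighthouse deployment has become a playbook rather than a one-off.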

At exit, buyers pay for infrastructure that’s already generating margin, not for a vendor relationship they’ll need to renegotiate. Sponsors who understand this will stop optimizing for model access and start building systems that turn AI into durable, transferable operating leverage.

Request a demo to see how Glean helps private equity firms turn AI pilots into repeatable portfolio value.