AI sprawl occurs when disconnected AI tools lack shared context, integration, and governance, causing promising pilots to stall in production.

To scale successfully, CIOs need a horizontal Work AI platform like Glean that provides unified enterprise context, built-in governance, and secure orchestration.

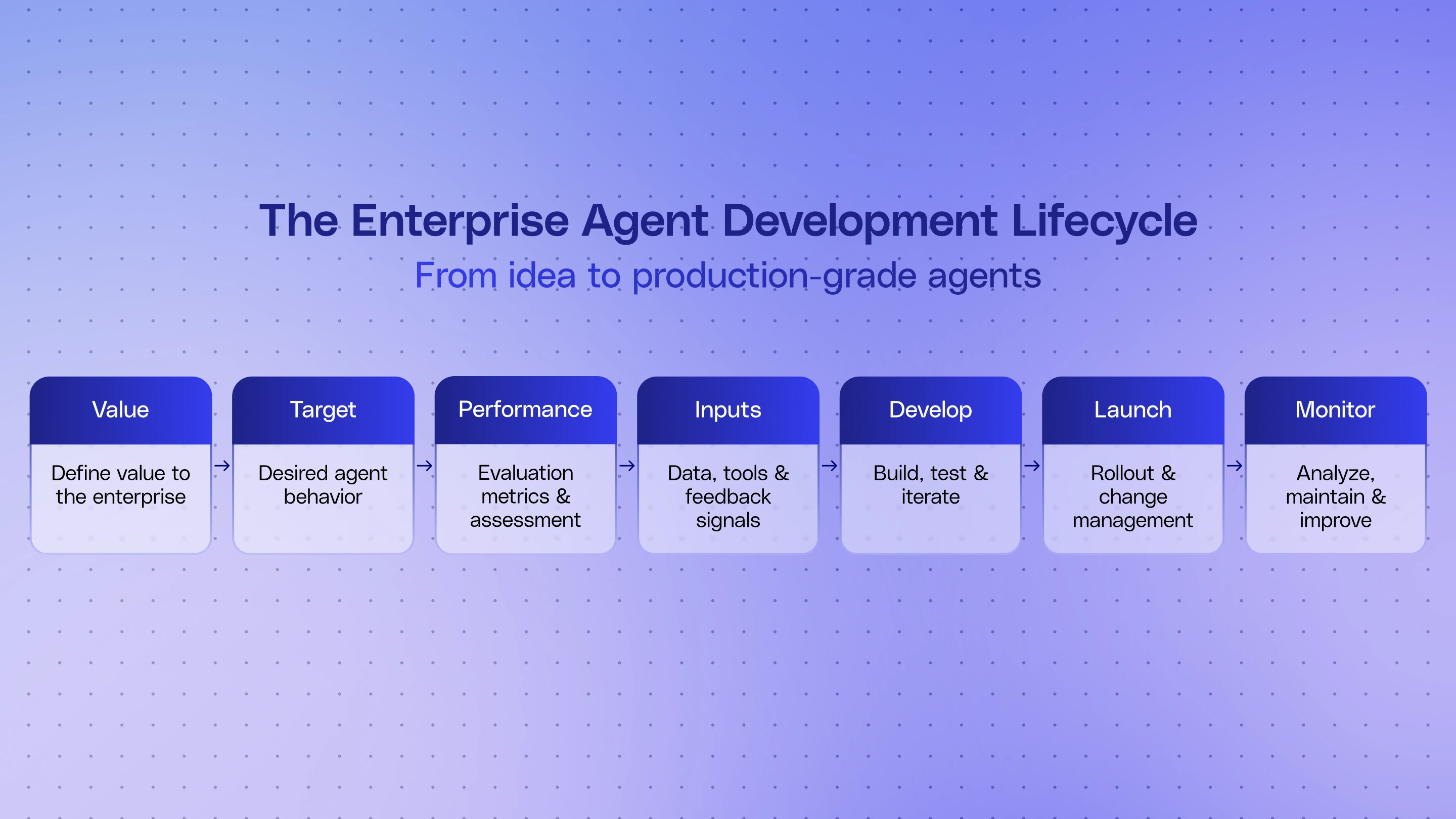

Adopting Glean's Enterprise Agent Development Lifecycle (ADLC) helps organizations transition from scattered experiments to a disciplined, measurable AI operating model.

AI sprawl happens when enterprises add disconnected AI tools faster than they build shared context, governance, and operating discipline around them. For most CIOs, the question is no longer whether AI matters. It already does. The question is how to deploy and scale it successfully within the enterprise.

Today, teams are experimenting with embedded assistants, app-specific agents, custom workflows, and new model providers. New workflows, AI-driven results, and a growing list of onboarded tools can look like progress. But they often create AI sprawl: too many pilots, too many surfaces, too little coordination, and too much uncertainty about what is actually delivering value. Tech leaders lose control over which systems are being used, which ones can be trusted, where sensitive information is flowing, and which projects are actually worth scaling. That loss of control is what makes AI sprawl such an uncomfortable problem.

AI sprawl is a symptom, not the root problem

Most AI sprawl comes from three structural problems:

Thin context: Agents do not have enough enterprise context to do reliable work.

Without rich, permission-aware context, agents produce generic outputs, miss business nuance, and require too much human review. They may look strong in a demo, but they are too brittle for real operational workflows.

Poor integration and orchestration: Teams wire together one-off tools and agents that are hard to scale or govern.

A pilot can survive on hand-built glue. Production cannot. Once work has to move across systems of record, collaboration tools, and approvals, brittle integrations create failure points, duplicate logic, and operational overhead.

Scaling without observability or governance: What worked as a pilot breaks down as agents spread across teams.

As adoption grows, leaders need traceability, permissions, approval flows, cost controls, and clear ownership. Without that, trust drops, admins slow rollout, and promising projects stall before broad deployment.

These are the reasons agent projects look promising in a demo and then stall in production.

Why this keeps happening

AI and agent issues are largely failures of architecture. When context is rebuilt use case by use case, agents only see a narrow slice of the business. When integrations are handled as one-off project work, connectors multiply, workflows become brittle, and lock-in creeps in. And when AI is layered onto broken governance and observability processes, it amplifies the disorder that is already there.

For CIOs, the takeaway is simple: context, orchestration, and governance cannot be solved separately for every new agent. They need to become shared infrastructure.

A horizontal Work AI platform allows you to create, publish, and monitor agents safely in a single place

Glean is uniquely well positioned for secure agent building because its foundation is a unified, permission-aware enterprise context layer. Instead of forcing every team to assemble its own retrieval stack, Glean gives agents access to shared context across the enterprise, grounded in the same knowledge and permissions model the company already relies on.
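To make "permission-aware" concrete, here is a minimal sketch of the general idea in Python. It is not Glean's API or implementation. The `Document` type, the `retrieve_for_user` function, the group names, and the sample index are all hypothetical and exist only for illustration. The point it shows is that access checks run before relevance matching, so an agent never sees text the requesting user could not open themselves.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    # Hypothetical record type: one indexed item plus the groups allowed to read it.
    doc_id: str
    text: str
    allowed_groups: frozenset


def retrieve_for_user(query, user_groups, index):
    """Return documents that match the query AND that the user may read."""
    terms = query.lower().split()
    results = []
    for doc in index:
        # Permission check comes first, so restricted text never reaches the agent.
        if not (doc.allowed_groups & user_groups):
            continue
        # Naive keyword match stands in for a real relevance model.
        if any(term in doc.text.lower() for term in terms):
            results.append(doc)
    return results


# Hypothetical sample data for illustration only.
INDEX = [
    Document("hr-1", "Salary bands by level", frozenset({"hr"})),
    Document("eng-1", "Deployment runbook and salary FAQ", frozenset({"eng", "hr"})),
    Document("eng-2", "Incident review template", frozenset({"eng"})),
]

engineer_view = retrieve_for_user("salary", frozenset({"eng"}), INDEX)
hr_view = retrieve_for_user("salary", frozenset({"hr"}), INDEX)
```

In this sketch the same query returns different results for an engineer and for someone in HR, because every agent reads through the same shared filter instead of each team rebuilding its own.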

On top of that, Glean is building an open platform that helps enterprises coordinate work across systems, models, and agents without losing the shared context and controls that make AI usable at scale. With managed actions and integrations, teams can spend less time rebuilding plumbing—and more time delivering outcomes.

Glean also invests heavily in built-in governance and observability so you can scale and monitor your AI agents with ease. Glean’s philosophy is that these controls belong in the platform, not in per-agent checklists. When governance and observability are built into the platform, organizations can scale agents with confidence instead of adding risk, inconsistency, and operational drag with every new deployment.
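One way to picture controls that live in the platform and not in each agent is a shared wrapper that every agent action passes through. The Python sketch below is an illustration under stated assumptions, not a description of how Glean works. The `governed` decorator, the `APPROVED_ACTIONS` allow-list, the `AUDIT_LOG` list, and the two example actions are hypothetical names invented for this example.

```python
import functools

# Hypothetical platform-level state: one audit trail and one allow-list for all agents.
AUDIT_LOG = []
APPROVED_ACTIONS = {"read_ticket", "draft_reply"}


class ActionNotApproved(Exception):
    """Raised when an agent attempts an action outside the shared allow-list."""


def governed(action_name):
    """Wrap an agent action so it is always logged and checked the same way."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(agent_id, *args, **kwargs):
            allowed = action_name in APPROVED_ACTIONS
            # Every attempt is recorded, whether or not it is allowed.
            AUDIT_LOG.append(
                {"agent": agent_id, "action": action_name, "allowed": allowed}
            )
            if not allowed:
                raise ActionNotApproved(f"{agent_id} may not run {action_name}")
            return func(agent_id, *args, **kwargs)
        return wrapper
    return decorator


@governed("draft_reply")
def draft_reply(agent_id, ticket):
    return f"Draft reply for {ticket}"


@governed("issue_refund")
def issue_refund(agent_id, ticket):
    return f"Refund issued for {ticket}"
```

Because the check and the log entry sit in the wrapper, a new agent action gets the same traceability and approval behavior without its author writing any governance code.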

The Enterprise Agent Development Lifecycle is the mental model that connects all of this

CIOs need more than a toolset. They need a way to think about AI sprawl as a system.

The Enterprise Agent Development Lifecycle (ADLC) is a framework developed by Glean’s Solution Architects. This process helped scale agent success at some of our largest customers. It helps organizations work through the full set of problems behind AI sprawl: how agents get the right context, how they connect to systems and workflows, how they are designed around real business processes, and how they are governed, monitored, and improved over time.

Instead of treating each new agent as a one-off project, teams can evaluate it as part of a shared lifecycle: define the value, connect the right context and tools, build responsibly, deploy with guardrails, and measure what happens next.
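The five steps named above can be sketched as an ordered set of gates. The following Python is a simplified illustration, not Glean's ADLC tooling. The stage names are taken from the sentence above, while the `Stage` enum, the `AgentRecord` class, and the rule that each stage needs written evidence are assumptions made for the example.

```python
from enum import Enum


class Stage(Enum):
    # Stage names follow the lifecycle described in the text; ordering is assumed.
    DEFINE_VALUE = 1
    CONNECT_CONTEXT_AND_TOOLS = 2
    BUILD_RESPONSIBLY = 3
    DEPLOY_WITH_GUARDRAILS = 4
    MEASURE = 5


class AgentRecord:
    """Hypothetical tracker for one agent moving through the lifecycle."""

    def __init__(self, name, owner):
        self.name = name
        self.owner = owner
        self.completed = []

    def next_stage(self):
        # Enum iteration follows definition order, so this returns the first open gate.
        for stage in Stage:
            if stage not in self.completed:
                return stage
        return None

    def complete(self, stage, evidence):
        expected = self.next_stage()
        if stage is not expected:
            expected_name = expected.name if expected else "no remaining stage"
            raise ValueError(
                f"{self.name}: expected {expected_name}, got {stage.name}"
            )
        if not evidence.strip():
            raise ValueError(f"{self.name}: {stage.name} needs written evidence")
        self.completed.append(stage)
```

An agent that tries to jump straight to deployment is rejected until the earlier gates are closed, which is the difference between a shared lifecycle and a one-off project.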

To get ahead of AI sprawl, CIOs need to move from experimentation to an operating model built on three things: shared enterprise context, governed orchestration, and a disciplined agent lifecycle.

Winning with AI is not about more pilots. It’s about the right platform and operating model. Glean helps CIOs maximize AI ROI by supporting the Enterprise ADLC within Glean, combining enterprise context, open orchestration, governance, and observability in one horizontal Work AI platform.

Learn more at Glean:LIVE

At Glean:LIVE in May, we’ll go deeper on how CIOs can move from scattered pilots to a governed Enterprise Agent Development Lifecycle—and how Glean helps support that shift across the full lifecycle.