Essential KPIs for evaluating AI sales enablement effectiveness

Enterprise sales teams now spend on AI-driven tools at an unprecedented rate, yet most organizations lack a clear framework to measure whether those investments actually translate into revenue. The gap between adoption and accountability has become one of the most expensive blind spots in modern go-to-market strategy.

AI sales enablement KPIs exist to close that gap. These metrics connect the dots between tool usage, seller behavior, and business outcomes — giving revenue leaders the evidence they need to justify spend, optimize strategy, and scale what works.

This guide breaks down the essential KPIs for evaluating AI sales enablement effectiveness, from productivity metrics and revenue attribution to cost savings and long-term ROI. Each section provides a practical lens for measuring what matters — and ignoring what doesn't.

What are AI sales enablement KPIs, and why do they matter?

AI sales enablement KPIs are specific, measurable indicators that tie AI-driven sales tools to real business outcomes — not just activity volume or feature adoption. Traditional sales metrics tend to report what already happened: deals closed, calls logged, emails sent. AI sales enablement KPIs go further. They reveal whether AI is genuinely changing how reps sell, how fast new hires ramp, and how effectively teams convert pipeline into revenue.

The distinction matters because many organizations invest in AI sales tools without defining what success looks like upfront. Without clear KPIs, it becomes nearly impossible to calculate ROI, justify continued investment to a CFO, or distinguish a tool that drives revenue from one that simply generates dashboards. The right KPI metrics bridge the gap between enablement activity and closed deals, giving leaders a direct line of sight from adoption to impact.

Measuring sales effectiveness starts with separating signal from noise. The most useful KPIs predict revenue growth; the least useful are simply the easiest to pull from a report. Completion rates and login counts might confirm that reps opened the tool, but they say nothing about whether the tool improved discovery calls, shortened sales cycles, or lifted win rates. Teams that define evaluation criteria and platform quality metrics early, tied to business outcomes rather than surface-level usage, are better placed to prove impact than those that measure after the fact.

How to calculate ROI for your AI sales enablement investment

The formula is fixed: (Attributed sales impact – full program cost) / full program cost × 100. The harder part sits in the accounting. License fees rarely reflect the true cost of an AI sales enablement rollout; a credible model also includes deployment work, systems integration, onboarding, change support, data upkeep, and the ongoing expense of model review and maintenance.

The numerator needs the same care as the denominator, so establish a pre-launch benchmark before the tool enters daily use. Capture a clean snapshot of current team performance, then isolate movement with structured comparison:

- Parallel groups: compare one cohort with AI access against a similar cohort without it during the same selling period.

- Time-based analysis: compare the same team before adoption and after adoption, while controlling for territory shifts, seasonality, and pricing changes.

- Discounted credit: assign only part of the observed lift to the program, often 25% to 50%, so the model holds up under finance review.
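As a rough illustration, the ROI formula and the discounted-credit rule can be combined in a few lines of Python. This is a minimal sketch, not a finance-grade model: the function name `enablement_roi`, the cost line items, and all dollar figures are hypothetical placeholders.

```python
def enablement_roi(observed_lift: float, credit_share: float, program_cost: float) -> float:
    """Return ROI as a percentage, crediting only part of the observed lift."""
    if program_cost <= 0:
        raise ValueError("program_cost must be positive")
    if not 0.0 <= credit_share <= 1.0:
        raise ValueError("credit_share must be between 0 and 1")
    attributed_impact = observed_lift * credit_share
    return (attributed_impact - program_cost) / program_cost * 100


# Hypothetical full program cost: licenses are only one line among several.
cost_lines = {
    "licenses": 120_000,
    "integration": 40_000,
    "onboarding_and_change_support": 25_000,
    "data_upkeep_and_model_review": 15_000,
}
program_cost = sum(cost_lines.values())

# Same observed lift, credited at the low and high ends of the 25% to 50% range.
roi_low = enablement_roi(observed_lift=1_200_000, credit_share=0.25, program_cost=program_cost)
roi_high = enablement_roi(observed_lift=1_200_000, credit_share=0.50, program_cost=program_cost)
print(roi_low, roi_high)
```

Reporting both ends of the credit range, rather than a single number, tends to make the result easier to defend in a finance review.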

Return appears in phases, not as a single spike. In the first 30 to 90 days, the clearest gains usually come from workflow throughput: shorter prep time for calls, faster recap creation, and quicker access to approved collateral; a sales workflow guide from Glean reflects that pattern. Around months three to six, the picture broadens to stronger execution and measurable commercial lift. By month six and beyond, cumulative gains across efficiency and deal flow often bring payback inside a nine-month window.
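One way to check a payback claim is to accumulate attributed monthly benefit until it covers total program cost. The sketch below assumes benefit has already been discounted for attribution; `payback_month` and the monthly figures are invented for illustration.

```python
from itertools import accumulate
from typing import Optional, Sequence


def payback_month(monthly_attributed_benefit: Sequence[float], total_cost: float) -> Optional[int]:
    """Return the first month (1-indexed) where cumulative benefit covers total cost."""
    running_totals = accumulate(monthly_attributed_benefit)
    for month, cumulative in enumerate(running_totals, start=1):
        if cumulative >= total_cost:
            return month
    return None  # payback not reached within the observed window


# Hypothetical ramp: small throughput gains first, commercial lift later.
benefits = [5_000, 10_000, 15_000, 25_000, 30_000, 35_000, 40_000, 40_000, 40_000]
month = payback_month(benefits, total_cost=200_000)
print(month)
```

A `None` result is worth reporting as-is: it means the program has not yet paid back inside the measured window.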

The sales productivity metrics that reveal AI's real impact

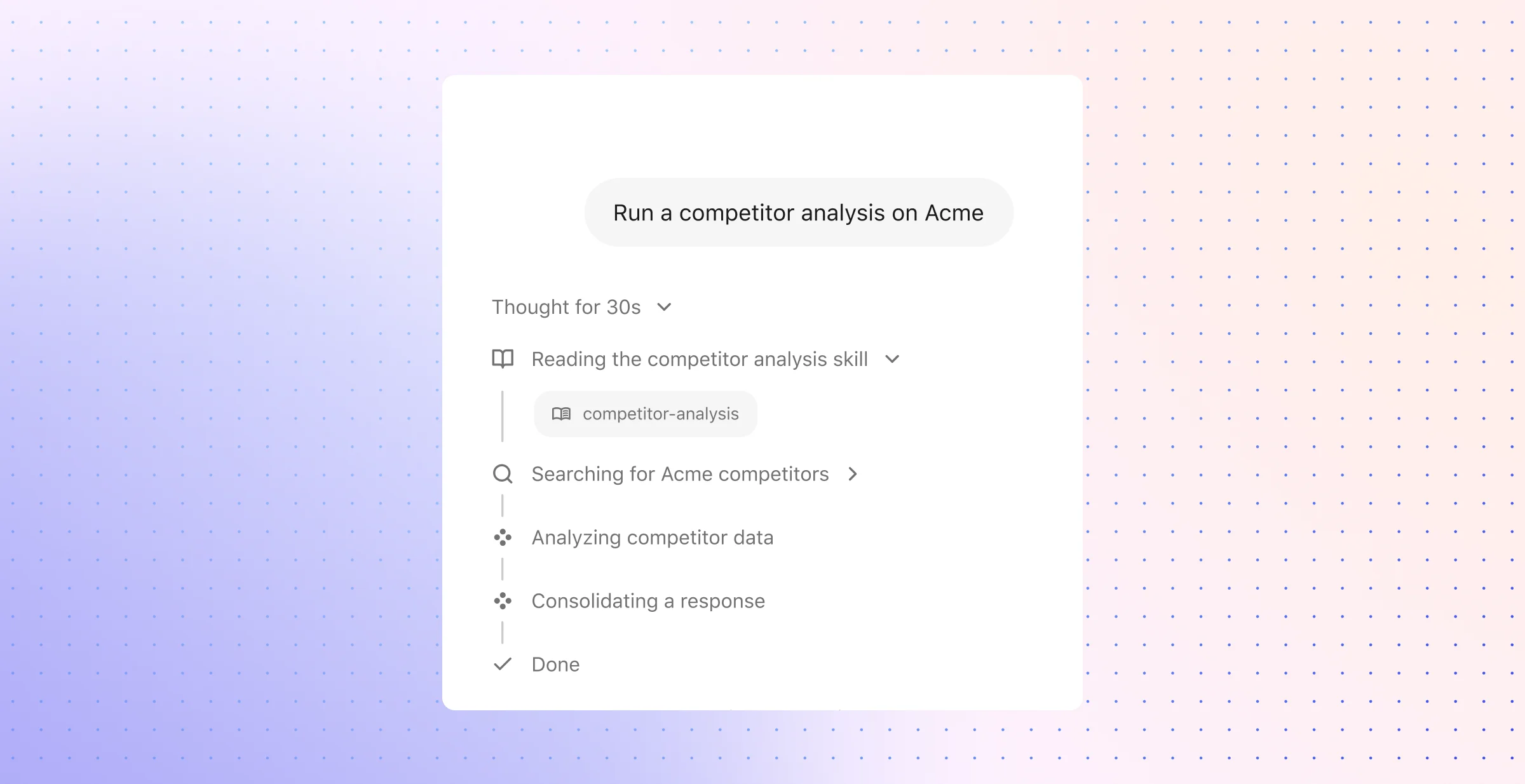

Productivity shows up in capacity, not in login counts. The useful test is simple: does AI increase customer-facing time, reduce manual effort, and raise output per seller without extra headcount? Strong programs shift work away from admin-heavy tasks such as account research, CRM hygiene, and follow-up drafting; in many teams, that shift adds back several hours each week for pipeline creation, deal strategy, and buyer engagement.

What to measure first

- Selling time ratio: Measure the share of each rep’s week that goes to prospect and customer work versus internal admin. This metric shows whether AI creates more room for discovery, follow-up, and deal progression rather than more tool management.

- Ramp-to-productivity milestones: Track days to first closed deal and days to consistent quota attainment. A shorter ramp expands productive capacity faster, which matters most in organizations that hire in waves.

- Stage velocity: Measure how long opportunities remain in each pipeline stage, not just total days to close. AI should reduce drag between handoffs by surfacing relevant context, approved messaging, and next-step guidance at the point of need.

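- Revenue per active seller: Divide closed revenue for the period by the number of sellers actively carrying quota. Compared before and after rollout, this metric shows whether AI lifts output per seller rather than only total bookings.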

- Quota attainment spread: Review how many reps hit target before and after rollout. A wider lift across the middle of the team signals stronger enablement than a small gain among top performers alone.
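Several of the metrics above reduce to simple ratios and averages once the inputs are defined. The following sketch assumes hours and stage durations have already been exported from a time tracker or CRM; the function names and sample numbers are hypothetical.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, Sequence, Tuple


def selling_time_ratio(customer_facing_hours: float, total_hours: float) -> float:
    """Share of a rep's logged week spent on prospect and customer work."""
    if total_hours <= 0:
        raise ValueError("total_hours must be positive")
    return customer_facing_hours / total_hours


def stage_velocity(records: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Average days spent in each pipeline stage, from (stage, days) records."""
    by_stage = defaultdict(list)
    for stage, days in records:
        by_stage[stage].append(days)
    return {stage: mean(days) for stage, days in by_stage.items()}


def quota_hit_rate(attainment: Sequence[float], target: float = 1.0) -> float:
    """Fraction of reps at or above target (1.0 means 100% of quota)."""
    if not attainment:
        raise ValueError("attainment must not be empty")
    return sum(a >= target for a in attainment) / len(attainment)


# Hypothetical before/after figures for one team.
ratio_before = selling_time_ratio(14, 40)
ratio_after = selling_time_ratio(18, 40)
velocity = stage_velocity([("discovery", 10), ("discovery", 14), ("proposal", 6)])
hit_before = quota_hit_rate([0.6, 0.8, 0.9, 1.1])
hit_after = quota_hit_rate([0.7, 1.0, 1.05, 1.2])
```

In this made-up sample, the hit rate rises because mid-tier reps cross the target, which is the pattern the quota attainment spread is meant to surface.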

The most durable productivity gains come from systems that combine retrieval, context, and execution inside the flow of work. In practical workflow patterns, such as those described by Glean for sales teams, high-value use cases include instant access to call moments, correspondence history, and deal-relevant assets; that makes seller-facing time, stage velocity, and in-workflow retrieval success more useful than surface activity metrics.

Revenue attribution: connecting enablement to closed deals

After productivity metrics show operational lift, revenue attribution needs deal-level proof. Finance leaders want incremental revenue, not broad usage trends. The cleanest approach starts inside the CRM: tag opportunities where AI shaped account research, content selection, follow-up quality, or manager guidance, then compare those deals against a matched baseline from the same sales motion.

A focused attribution model should track four signals:

- Assisted close-rate gap: Compare the close rate of opportunities touched by AI-enabled workflows against similar opportunities without that support. This metric shows whether enablement improved execution inside active deals rather than just tool adoption.

- Contract value lift: Track changes in average selling price for AI-assisted opportunities. Larger deal values often reflect better discovery, stronger business cases, and sharper positioning across stakeholders.

- Opportunity aging and stall rate: Measure how long deals remain open, where they pause, and how often they fail to advance after key milestones. AI support often shows up here first — especially when reps receive precise account context, relevant proof points, and recommended next moves during live deal work.

- Play-level yield: Map specific enablement plays to revenue outcomes by segment, region, or buyer profile. This reveals which motions produce durable commercial results instead of isolated wins.

Attribution still requires restraint. A revenue lift model loses credibility when it ignores external factors such as pricing changes, marketing programs, product releases, or territory mix. Use matched cohorts, shared time windows, and conservative credit allocation so the measured gain reflects actual enablement influence. In practice, the strongest evidence appears when AI affects execution in the moment — before a discovery call, during multithreaded deal progression, or at the point where a late-stage opportunity risks delay.
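The first two signals, assisted close-rate gap and contract value lift, can be sketched directly from tagged CRM records. The example assumes the two cohorts are already matched on sales motion and time window, which the code does not verify; the `Opportunity` fields and sample deals are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass(frozen=True)
class Opportunity:
    ai_assisted: bool  # hypothetical CRM tag set when AI shaped the deal work
    won: bool
    amount: float


def close_rate(opps: List[Opportunity]) -> float:
    """Won opportunities divided by all opportunities in the cohort."""
    return sum(o.won for o in opps) / len(opps)


def average_won_value(opps: List[Opportunity]) -> float:
    """Mean contract value across won deals only."""
    won = [o.amount for o in opps if o.won]
    if not won:
        raise ValueError("no won deals in cohort")
    return mean(won)


def attribution_summary(opps: List[Opportunity]) -> Dict[str, float]:
    """Compare AI-assisted opportunities against the untagged baseline."""
    assisted = [o for o in opps if o.ai_assisted]
    baseline = [o for o in opps if not o.ai_assisted]
    if not assisted or not baseline:
        raise ValueError("need both assisted and baseline opportunities")
    return {
        "close_rate_gap": close_rate(assisted) - close_rate(baseline),
        "won_value_lift": average_won_value(assisted) - average_won_value(baseline),
    }


sample = [
    Opportunity(True, True, 60_000.0),
    Opportunity(True, True, 40_000.0),
    Opportunity(True, False, 30_000.0),
    Opportunity(True, False, 20_000.0),
    Opportunity(False, True, 40_000.0),
    Opportunity(False, False, 35_000.0),
    Opportunity(False, False, 25_000.0),
    Opportunity(False, False, 45_000.0),
]
summary = attribution_summary(sample)
```

A gap computed this way is a raw difference, not proof of causation; the conservative credit allocation described above still applies before the number reaches a finance review.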

Cost savings and efficiency gains worth measuring

Cost savings in AI sales enablement fall into two buckets: direct expense reduction and cost avoidance. Direct savings show up in fewer overlapping tools, lower cost per lead, and lower cost per opportunity; cost avoidance sits deeper in the model — less rep attrition, fewer stalled deals, less manager time spent on manual coaching prep, and less budget tied up in content that never reaches buyers.

Where savings appear first

- Tool consolidation: Many sales teams pay for separate systems for call review, content discovery, note capture, and rep guidance. AI that brings those jobs into fewer workflows can reduce license sprawl and cut admin overhead across sales operations.

- Acquisition efficiency: Measure cost per lead and cost per opportunity before and after rollout. Better prioritization, stronger follow-up consistency, and sharper message quality should reduce both.

- Content efficiency: Track which assets appear in active opportunities and which ones correlate with buyer engagement. This exposes collateral that consumes production budget but adds no measurable value in live deals.

- Coaching efficiency: AI can flag weak discovery, inconsistent objection handling, and missed follow-up patterns without a manager listening to every call. That shortens the path from issue identification to targeted reinforcement.

Early efficiency gains often come from process compression rather than headcount reduction. A rep who receives cleaner account context, better content recommendations, and faster post-call guidance can move through more selling activity with the same number of hours.

The hidden savings often matter more than the visible ones. Replacing an underperforming seller can cost 1.5 to 2 times annual salary once recruiting fees, lost coverage, delayed ramp, and manager attention enter the model. AI enablement that helps mid-tier reps execute more like top performers can prevent that spend before it hits the budget.

Efficiency KPIs should reflect that broader view. Track retired software spend, manager prep time per coaching session, content utilization in open and won deals, lead response consistency, and the gap between assigned training and observed behavior change. As recommendation systems learn from usage patterns and outcome data, these gains tend to compound quarter over quarter.
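Two of these calculations are simple enough to sketch: the before/after change in cost per opportunity, and the replacement-cost range for one avoided departure at 1.5 to 2 times salary. The helper names, spend, and salary figures below are hypothetical.

```python
from typing import Tuple


def cost_per_unit(spend: float, units: int) -> float:
    """Spend divided by leads or opportunities produced."""
    if units <= 0:
        raise ValueError("units must be positive")
    return spend / units


def relative_change(before: float, after: float) -> float:
    """Fractional change from before to after; negative means a reduction."""
    return (after - before) / before


def replacement_cost_range(annual_salary: float,
                           low_multiple: float = 1.5,
                           high_multiple: float = 2.0) -> Tuple[float, float]:
    """Low and high estimate of the cost to replace one seller."""
    return annual_salary * low_multiple, annual_salary * high_multiple


# Same spend, more opportunities after rollout (invented numbers).
cpo_before = cost_per_unit(90_000, 300)
cpo_after = cost_per_unit(90_000, 360)
cpo_change = relative_change(cpo_before, cpo_after)

avoided_low, avoided_high = replacement_cost_range(100_000)
```

Cost avoidance figures like the replacement range are estimates of spend that never occurred, so they are usually reported separately from direct savings.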

How to align sales enablement KPIs with business outcomes

Misalignment usually starts at the planning stage, not in the dashboard. Teams often pick metrics that describe program activity instead of the business constraint the program exists to fix — a stalled pipeline, inconsistent discovery quality, weak forecast confidence, or slow follow-up after buyer meetings.

A stronger approach starts with an operating goal and works backward from there. For one team, that may mean a 15% drop in stalled late-stage opportunities; for another, a two-day reduction in proposal turnaround or a measurable lift in manager coaching follow-through after flagged calls. That structure gives each KPI a job.

Use a three-tier KPI model

A three-tier model keeps measurement disciplined and prevents disconnected reporting:

Tier 1 — operational adoption: This layer tracks whether the program has enough reach to matter. Eligible-user coverage, manager participation, workflow embed rate, and repeat usage inside daily sales motions belong here. Weak numbers point to rollout friction, low trust, or poor fit with existing systems.

Tier 2 — execution quality: This tier looks at how seller execution changes after adoption. Useful metrics include AI draft acceptance rate, content recommendation use in live deals, prep-time reduction before meetings, and coaching follow-through after identified skill gaps. This is where teams see whether the tool improves decision quality rather than just speed.

Tier 3 — business effect: This layer captures commercial movement tied to the earlier shifts. Stage-to-stage conversion, forecast accuracy, pipeline coverage by rep, late-stage stall rate, and expansion influence show whether stronger execution translates into stronger sales outcomes.

This structure also clarifies ownership. RevOps can inspect Tier 1 for rollout health; frontline leaders can inspect Tier 2 for sales execution change; finance and executive teams can inspect Tier 3 for budget justification.
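The three tiers can be represented as a small scorecard that reports the earliest tier where a KPI misses its target. Reading the tiers in order this way is an interpretation added for illustration, not a rule stated in this guide; the `Kpi` fields, owners, values, and targets are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Kpi:
    name: str
    tier: int  # 1 = operational adoption, 2 = execution quality, 3 = business effect
    owner: str
    value: float
    target: float
    higher_is_better: bool = True

    def on_target(self) -> bool:
        if self.higher_is_better:
            return self.value >= self.target
        return self.value <= self.target


def first_weak_tier(kpis: List[Kpi]) -> Optional[int]:
    """Return the lowest tier containing a KPI that misses target, or None."""
    weak_tiers = [k.tier for k in kpis if not k.on_target()]
    return min(weak_tiers) if weak_tiers else None


scorecard = [
    Kpi("workflow_embed_rate", 1, "RevOps", value=0.70, target=0.60),
    Kpi("ai_draft_acceptance_rate", 2, "Frontline leaders", value=0.35, target=0.50),
    Kpi("late_stage_stall_rate", 3, "Finance", value=0.30, target=0.25,
        higher_is_better=False),
]
weak = first_weak_tier(scorecard)
print(weak)
```

In this invented scorecard, adoption is healthy but execution quality lags, which suggests looking at Tier 2 before drawing conclusions from the Tier 3 miss.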

Report KPI results in business language

KPI reporting should read like an operating review, not a training recap. A statement such as “82% of sellers completed AI-guided prep modules” says little on its own; “proposal turnaround dropped from three days to one, which improved stage progression in enterprise accounts” gives leadership something they can use.

Quarterly review matters because business priorities shift faster than enablement programs often do. A team under pressure to improve forecast confidence should not rely on the same scorecard as a team focused on multi-threading large accounts or reducing opportunity decay in a new market segment.

One more layer improves accuracy: pair outcome metrics with AI quality metrics. Guidance such as Glean’s evaluation approach reinforces this point well — weak business impact may come from low-quality answers, poor grounding, or incomplete task execution rather than poor program design. That distinction prevents the wrong fix.

A practical framework for evaluating AI sales enablement effectiveness

A workable evaluation framework needs more than a KPI list. It needs a measurement system that finance trusts, sales leaders can read quickly, and enablement teams can actually maintain quarter after quarter.

Set the baseline before rollout

Before the first workflow goes live, lock down the measurement rules that will govern the program. Teams usually lose credibility here — not because the tool fails, but because no one agreed on what counts as usage, what counts as impact, or which data source serves as the source of truth.

Use the baseline phase to define four things:

- Metric ownership: Assign one owner for each core metric — usually sales operations for pipeline and conversion data, enablement for coaching and adoption data, and finance for cost accounting. Shared metrics without a clear owner drift fast.

- Calculation rules: Standardize how each KPI gets calculated. “Ramp time,” “active usage,” and “AI-assisted opportunity” all need explicit definitions or the dashboard becomes impossible to defend.

- Measurement window: Set fixed review periods before launch. A 30-day readout, a 60-day capability check, and a 180-day commercial review create consistency and reduce selective interpretation.

- Cost boundaries: Include every material cost in the model from day one — licenses, integration work, change management, internal admin time, and model-related operating expense.

This step turns a future ROI discussion from opinion into accounting. It also prevents the common pattern where a team claims success from one metric shift while finance points to a different denominator.
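The baseline rules can be written down as data before launch so they are hard to reinterpret later. This is one possible shape, assuming a simple in-code registry; the `MetricDefinition` fields, the example definitions, and the launch date are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    owner: str            # one accountable owner per metric
    rule: str             # explicit calculation rule
    source_of_truth: str  # system the number is pulled from


# Fixed review windows, in days after launch.
REVIEW_WINDOWS_DAYS = {"readout": 30, "capability_check": 60, "commercial_review": 180}


def review_schedule(launch: date) -> Dict[str, date]:
    """Compute the review dates from the launch date."""
    return {label: launch + timedelta(days=days)
            for label, days in REVIEW_WINDOWS_DAYS.items()}


def unowned_metrics(definitions: List[MetricDefinition]) -> List[str]:
    """Names of metrics that have no owner assigned."""
    return [d.name for d in definitions if not d.owner.strip()]


definitions = [
    MetricDefinition("ramp_time", "Enablement",
                     "days from start date to first closed deal", "CRM"),
    MetricDefinition("ai_assisted_opportunity", "",
                     "opportunity tagged with at least one AI-supported activity", "CRM"),
]
schedule = review_schedule(date(2025, 1, 6))
missing_owner = unowned_metrics(definitions)
```

Running a check like `unowned_metrics` before launch is a cheap way to catch shared metrics that would otherwise drift.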

Segment usage to isolate impact

Once the program is live, look past aggregate averages. A blended team average often hides the only signal that matters: who changed behavior, in which workflow, and with what commercial effect.

Segment the population in ways that reflect how sales actually runs. Adoption level matters, but so do role, tenure, region, sales motion, and manager. A new SDR in a transactional motion should not sit in the same comparison set as a strategic account executive with a mature book of business. The cleanest evaluation comes from matched groups with comparable coverage, cycle length, and opportunity profile.

Use a structured review cadence to keep those comparisons useful:

- Operational readout: Check whether the tool appears inside real work — deal prep, follow-up drafting, call recap review, account research, and asset selection. This shows workflow embed, not surface activity.

- Execution readout: Review whether sellers make better choices with the tool — stronger talk tracks, better content selection, tighter follow-up, and more consistent use of approved plays.

- Commercial readout: Examine whether those behavior shifts show up in deal progression, conversion quality, and revenue outcomes across matched groups.

This is also the stage where benchmark context matters. Compare results against internal targets, pre-launch performance, and realistic external norms so leaders can tell the difference between statistical noise and a material operating shift.

Build one score leaders can use

Most executive teams do not want six separate dashboards from six separate functions. They want one clear view of whether the investment deserves more budget, more rollout support, or a redesign.

A composite effectiveness score solves that problem when the weighting reflects business priorities. The score should combine several dimensions, with heavier emphasis on commercial impact than on activity volume:

- Revenue velocity impact: Deal progression speed, conversion strength, and movement through the funnel.

- Productivity amplification: Reduction in manual research, prep overhead, and time lost to disconnected systems.

- Strategic effectiveness: Quality of sales plays, consistency of execution by segment, and strength of manager reinforcement.

- Organizational learning: How quickly best practices spread across teams, how well new workflows stick, and whether managers can replicate top-performer behavior at scale.

This score should not stand alone. It works best beside a short exception report that explains what changed, what stalled, and which variables may have influenced the result outside the tool itself. That keeps the number useful without turning it into a black box.
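A composite score of this kind is typically a weighted sum of normalized dimension scores. The sketch below assumes each dimension has already been scaled to 0 through 100; the weights are illustrative only, chosen to put the most emphasis on commercial impact, and should be set by each organization.

```python
from typing import Dict

# Hypothetical weights; they must sum to 1.0.
WEIGHTS = {
    "revenue_velocity": 0.40,
    "productivity": 0.25,
    "strategic_effectiveness": 0.20,
    "organizational_learning": 0.15,
}


def composite_score(dimension_scores: Dict[str, float],
                    weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted sum of dimension scores, each expected on a 0-100 scale."""
    if set(dimension_scores) != set(weights):
        raise ValueError("dimension_scores and weights must cover the same dimensions")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    for name, score in dimension_scores.items():
        if not 0 <= score <= 100:
            raise ValueError(f"{name} score must be between 0 and 100")
    return sum(dimension_scores[name] * weight for name, weight in weights.items())


score = composite_score({
    "revenue_velocity": 60,
    "productivity": 80,
    "strategic_effectiveness": 70,
    "organizational_learning": 50,
})
print(round(score, 1))
```

Keeping the weights in one named constant makes the quarterly recalibration explicit: a change to the weighting shows up as a reviewed edit rather than a silent shift in the score.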

The framework also needs disciplined review. Weak programs usually fail in familiar ways: they rely on vanity activity, skip benchmark context, let coaching quality sit outside the model, or collapse every positive outcome into one inflated attribution claim. Strong programs treat evaluation as an operating routine with regular recalibration of thresholds, weights, and workflow design. They also inspect the mechanics beneath the business result — output quality, retrieval quality, and prompt or workflow quality — because a weak answer path can suppress performance long before the revenue dashboard shows the damage.

The difference between AI sales enablement that justifies its budget and AI that quietly drains it comes down to measurement discipline — not more dashboards, but better questions asked earlier. Teams that define success before rollout, segment impact by real selling behavior, and report in business language will consistently outperform those chasing activity metrics after the fact.
