How AI enhances test accuracy and coverage in quality assurance

Software testing at enterprise scale has hit a structural bottleneck. Requirements live in product management tools, code changes sit in repositories, defect history accumulates in ticketing systems, and customer impact data flows through support platforms — yet QA teams are expected to synthesize all of it before writing a single test case.

AI-driven search changes that equation. By applying contextual retrieval, natural language understanding, and permission-aware access across an organization's full knowledge landscape, these tools give QA professionals a unified starting point for every testing decision — from test design to defect triage to release readiness.

The result is not just faster testing. It is more accurate testing grounded in real evidence, broader coverage focused on genuine risk, and stronger collaboration across the teams that share responsibility for software quality.

What is AI-driven search in quality assurance?

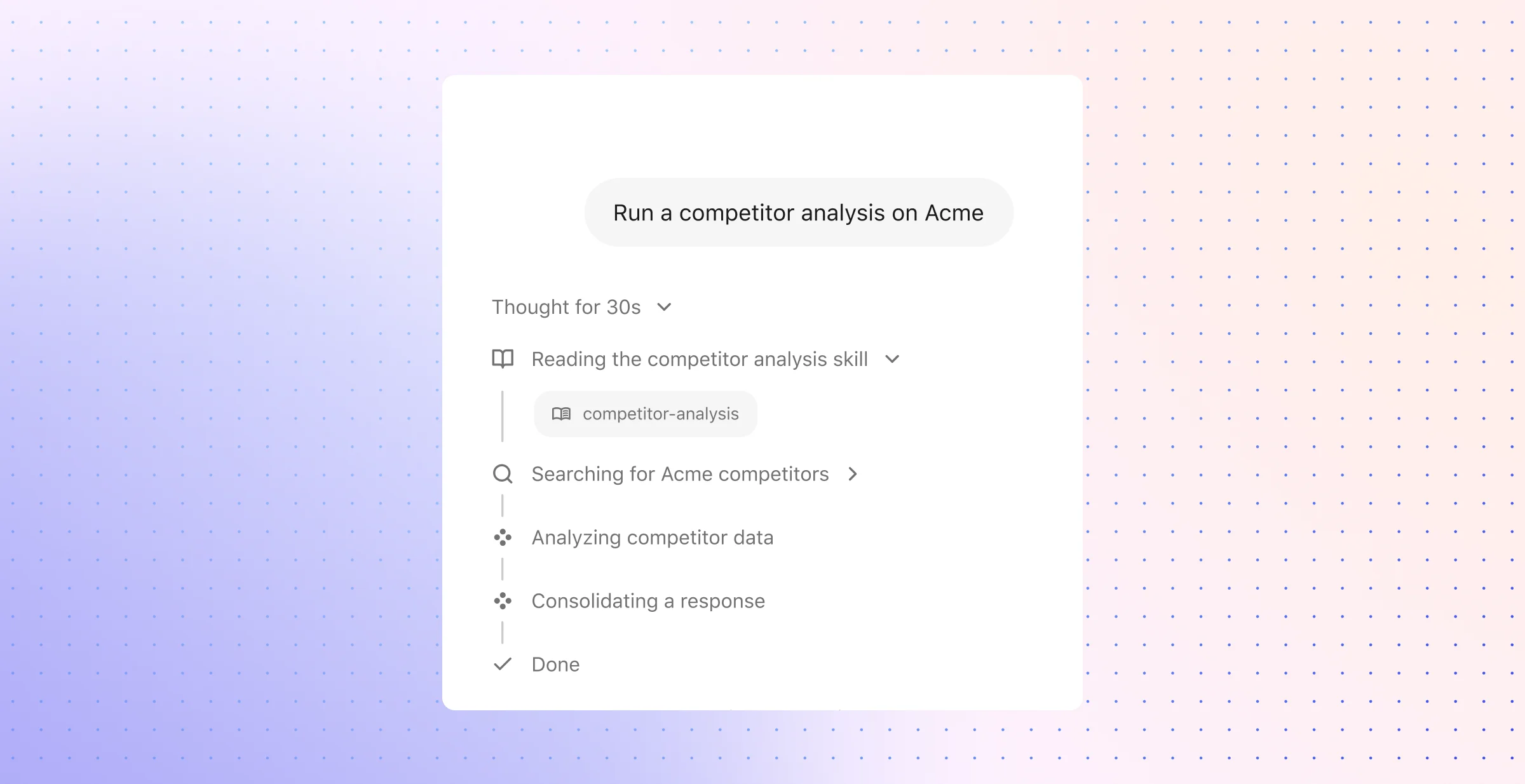

AI-driven search in quality assurance applies contextual enterprise search and grounded AI to retrieve requirements, code changes, defects, test cases, and release history across the full stack of work systems a QA team depends on. Unlike traditional keyword search or federated queries that pull incomplete results from individual tools, this approach continuously crawls, indexes, and ranks content from dozens or hundreds of applications — then delivers answers that respect the original access permissions of each source. The practical effect: testers spend less time switching between platforms and more time acting on trusted, verifiable information.

This distinction matters because QA work demands precision. A missing acceptance criterion, an overlooked dependency, or a stale test case can undermine both accuracy and coverage in ways that surface only after release. AI-driven search addresses that risk by connecting related concepts across systems — linking a feature name in a product spec to a service name in a code repository to a bug tag in a ticketing platform — so testers can see the full picture before they design a single scenario. The AI in software testing market reflects this shift; analysts project compound annual growth above 18% through 2033, driven by enterprise demand for faster delivery cycles and higher quality.

For teams evaluating how AI-driven search tools benefit quality assurance in testing, the core value proposition breaks down into three capabilities:

- Contextual retrieval across fragmented systems: Rather than forcing testers to gather evidence manually from product management, version control, incident management, and support platforms, AI-driven search consolidates that context into a single query layer. The underlying technology, a hybrid architecture that combines semantic understanding, lexical matching, and knowledge graphs, lets results account for enterprise-specific terminology, relationships between people and content, and recency signals that static search engines miss.

- Permission-aware access at every layer: Enterprise QA work routinely touches engineering data, customer information, internal policies, and incident records. AI-driven search tools that enforce source-level permissions ensure users only see what they are authorized to access, a non-negotiable requirement in regulated industries such as financial services, healthcare, and manufacturing. This security model also builds the organizational trust necessary for broad adoption across QA, engineering, product, and support teams.

- Source-grounded answers with verifiable citations: The most effective AI search tools in QA do not just return plausible summaries. They ground every response in current documentation, recent changes, and real operating history, then link back to the original source material. That transparency allows testers to inspect code diffs, confirm whether a workaround still applies, or validate that a requirement has not changed since the last sprint. It also reduces the risk of hallucinated or oversimplified outputs, which is critical when test accuracy depends on factual precision rather than generative fluency.
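The first two capabilities can be illustrated with a small sketch. It merges a lexical ranking and a semantic ranking with reciprocal rank fusion, then drops results the user's groups cannot read. Reciprocal rank fusion is one common fusion method and is used here as an assumption; the article does not say which method any given product uses. The `Doc` type, the document IDs, and the group names are hypothetical, and the knowledge-graph signal is left out for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    allowed_groups: frozenset  # groups the source system lets read this item


def rrf_fuse(rankings, k=60):
    """Reciprocal rank fusion: each list adds 1 / (k + rank) to a doc's score."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties fall back to doc_id for a stable order.
    return sorted(scores, key=lambda d: (-scores[d], d))


def permitted(doc_ids, docs, user_groups):
    """Keep only documents that share at least one group with the user."""
    return [d for d in doc_ids if docs[d].allowed_groups & user_groups]


# Hypothetical index entries and rankings for a single query.
docs = {
    "REQ-1": Doc("REQ-1", frozenset({"qa", "product"})),
    "BUG-7": Doc("BUG-7", frozenset({"qa", "eng"})),
    "PR-3": Doc("PR-3", frozenset({"qa", "eng"})),
    "INC-9": Doc("INC-9", frozenset({"sre"})),
}
lexical = ["REQ-1", "BUG-7", "PR-3"]
semantic = ["BUG-7", "REQ-1", "INC-9"]

fused = rrf_fuse([lexical, semantic])
visible = permitted(fused, docs, user_groups={"qa"})
print(visible)
```

The post-filter keeps the sketch short. A production system would more likely enforce permissions inside the index so that restricted items never reach the ranking step at all.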

This framing positions AI in quality assurance as a practical way to connect scattered organizational knowledge — not as a replacement for skilled testers. The technology serves as a retrieval and reasoning layer that helps QA professionals find the right evidence, focus on the highest-risk areas, and act on what the data shows. When retrieval quality is strong, every downstream activity improves: test design becomes more targeted, defect triage becomes faster, and coverage reflects genuine business risk instead of arbitrary test counts.

How to use AI-driven search tools to enhance test accuracy and coverage in quality assurance

Once a search layer exists, the real gains come from how QA teams use it in day-to-day work. The strongest pattern starts with release context, moves into scenario design, then carries through risk review, failure analysis, team coordination, and ongoing evaluation.

That sequence matters because QA rarely fails on execution alone. More often, the miss starts earlier — a hidden dependency, an old assumption, an unreviewed exception path, or a release note that never reached the test plan.

1. Build a release evidence packet before test authoring

Before a team writes or updates cases, it helps to assemble a release packet that captures the full shape of the change. AI-driven search can pull the feature brief, acceptance notes, pull requests, commit summaries, prior bug history for the same area, support escalations tied to the feature, and the names of the engineers who own the affected services.

This step gives QA something more useful than a generic sprint ticket. It creates a release-specific record of scope, intent, prior instability, and technical blast radius. In fast-moving environments, that record often reveals gaps that would stay hidden in a single source — for example, a billing change that also touches permissions, reporting exports, and customer notification flows.

A good release evidence packet should answer four questions with little effort:

- What changed: Feature scope, code paths, APIs, flags, and configuration updates.

- What failed before: Old defects, rollback notes, postmortems, and repeat incident tags.

- Who owns the affected systems: Service owners, reviewers, and subject matter experts.

- Which users or workflows feel the impact: Customer segments, high-volume paths, regulated actions, or revenue-critical steps.

That foundation improves traceability from requirement to test. It also gives QA a clear basis for challenge; teams can test the real release, not the simplified version that often appears in planning tools.
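As a rough illustration, the four questions above can be modeled as a small record that reports which questions still lack evidence. This is a minimal sketch; the `EvidencePacket` class, its field names, and the sample IDs are hypothetical and not tied to any specific tool.

```python
from dataclasses import dataclass, field


@dataclass
class EvidencePacket:
    """Release evidence grouped by the four questions a packet should answer."""
    release: str
    what_changed: list = field(default_factory=list)
    what_failed_before: list = field(default_factory=list)
    owners: list = field(default_factory=list)
    impacted_workflows: list = field(default_factory=list)

    def gaps(self):
        """Return the questions that still have no supporting evidence."""
        answers = {
            "what_changed": self.what_changed,
            "what_failed_before": self.what_failed_before,
            "owners": self.owners,
            "impacted_workflows": self.impacted_workflows,
        }
        return [question for question, items in answers.items() if not items]


# Hypothetical packet for a billing change; the IDs are placeholders.
packet = EvidencePacket(
    release="billing-proration",
    what_changed=["PR-3: new proration rule", "flag: proration_v2"],
    owners=["team-billing"],
)
print(packet.gaps())
```

A gap list like this gives the team a concrete prompt for the next search query before test authoring starts.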

2. Convert release evidence into scenario sets

After the release packet is ready, the next step is scenario expansion. Instead of asking for a generic test list, QA teams should use AI-driven search to derive scenario sets from the evidence already collected: role changes, boundary values, device or browser variations, API contract shifts, retry behavior, failure messages, and fallback paths.

This is where plain-language queries become useful in a concrete way. A tester can ask for all user states touched by a new entitlement rule, all workflows affected by a change to session handling, or all customer complaints tied to the same checkout path in the last quarter. The response should not stop at a short answer; it should expose enough source material for the tester to inspect edge conditions and exceptions.

High-value scenario sets often include:

- Primary flow checks: The core path the feature owner expects to work.

- Role and permission variants: Differences across admins, managers, agents, partners, or end users.

- Boundary and negative cases: Empty fields, expired states, invalid payloads, timeouts, and partial saves.

- Environment variants: Browser differences, mobile states, regional settings, and service dependency behavior.

- Historical recurrence cases: Older bugs with a realistic chance of return after a related change.

This method helps QA move beyond surface validation. The suite becomes more representative of production behavior because it reflects real usage, known defect history, and the technical details of the release itself.
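One simple way to turn those categories into concrete cases is to cross the dimension values that the evidence surfaced. The sketch below assumes the dimensions are already known; the function name `expand_scenarios` and the sample values are hypothetical.

```python
from itertools import product


def expand_scenarios(flow, dimensions):
    """Build one scenario per combination of the given dimension values."""
    names = list(dimensions)
    combos = product(*(dimensions[name] for name in names))
    return [{"flow": flow, **dict(zip(names, combo))} for combo in combos]


# Hypothetical dimensions drawn from a release packet.
dimensions = {
    "role": ["admin", "agent"],
    "input": ["valid", "empty", "expired"],
    "browser": ["chrome", "safari"],
}
scenarios = expand_scenarios("checkout", dimensions)
print(len(scenarios))
print(scenarios[0])
```

A full cross product grows quickly, so in practice a team would likely prune it, for example by keeping only the combinations that match known defect history or high-risk paths.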

3. Score the release by risk before suite expansion

Test count is a weak proxy for quality. A smaller suite with sharp priorities often gives better protection than a large suite filled with low-value checks.

AI-driven search helps QA score a release by risk before anyone adds more automation or broad regression. The search layer can surface churn in sensitive modules, repeat failure themes, support volume around the affected flow, unresolved defects in adjacent services, and signs of architectural fragility such as shared dependencies or recent refactors.

A practical risk score often draws from these inputs:

- Blast radius: How many downstream systems, user roles, or business processes the change can affect.

- Defect density: How often the same area produced bugs across prior releases.

- Support heat: Whether customers or internal teams already report friction in that workflow.

- Change volatility: Whether the code or configuration in that area shifts often.

- Control sensitivity: Whether the path touches compliance rules, financial actions, identity, or customer data.

That score gives QA a way to decide where depth matters most. A low-risk cosmetic change may need visual validation and a brief smoke pass; a permissions update inside a multi-service workflow may justify contract checks, role-based cases, data integrity validation, and tighter regression around adjacent paths.

4. Use failure packets to improve triage quality

When a test fails, speed matters less than clarity. Teams need to know whether the issue points to product behavior, test instability, environment drift, bad data, or a release artifact.

AI-driven search can assemble a failure packet that includes the failing step, related code changes, deployment history, similar incidents, known flaky tests in the same area, service logs, and runbook guidance from earlier outages. That packet gives QA and engineering a common view of the problem without a long hunt across dashboards and knowledge bases.

This changes defect triage in useful ways:

- Cleaner classification: Teams can separate true defects from noise faster.

- Better reproduction detail: Search can surface prior reports, environment conditions, and exact states that triggered the same issue.

- Faster routing: Ownership data helps the report reach the right engineer or service team sooner.

- Stronger pattern detection: Repeated failures across releases become easier to spot and track.

Over time, these packets do more than shorten triage. They show where the QA strategy itself needs adjustment — perhaps one area needs stronger data setup, another needs broader browser coverage, and a third needs fewer brittle UI checks and more API-level validation.

5. Use search to tighten coordination across adjacent teams

QA quality depends on the quality of handoffs. Problems grow when product, engineering, support, and operations each keep their own partial version of the truth.

AI-driven search improves this layer of work by making evidence easier to reuse across teams. A QA lead can pull support themes into release planning, an engineer can inspect prior incidents before a fix review, and a product manager can check whether expected behavior matches what support and test data show in practice. The result is not more meetings; it is better factual alignment before and after each release.

This also improves the artifacts teams rely on every week:

- Defect narratives: Clear summaries with evidence, owner context, and affected scope.

- Release-readiness notes: A concise view of open risk, unresolved defects, and high-risk paths already validated.

- Coverage reviews: A record of what received deep testing, what received spot checks, and what stayed out of scope.

- Escalation handoffs: Enough context for support or operations teams to respond without a fresh round of discovery.

In large enterprises, this matters even more because the relevant facts often sit across engineering tools, internal documentation, incident systems, and customer-facing platforms. Search reduces the lag between discovery and alignment.

6. Treat evaluation as part of the QA system, not an afterthought

AI-driven search in QA needs ongoing review with the same discipline teams apply to product quality. The question is not only whether the tool returns an answer; the question is whether the answer is precise enough, fresh enough, and useful enough to influence a release decision.

That means teams should define a small set of QA-specific evaluation tasks from the start. Examples include requirement trace checks, test gap detection for a release, owner lookup for a failing service, similar-defect retrieval, and release-readiness evidence collection. Each task should have an expected result so teams can review output quality on a repeatable basis rather than rely on anecdotal success.

Operational controls should include:

- Access audits: Verification that environment, customer, and policy boundaries hold across results.

- Freshness checks: Review of whether critical sources such as release notes, tickets, and incident records appear on time.

- Answer grading: Assessment of relevance, completeness, and factual support for high-value QA prompts.

- Workflow impact review: Measurement of whether the tool reduces cycle delays, misrouted defects, and missed coverage in practice.

Human review remains essential at this stage. Search can retrieve, rank, cluster, and draft; QA leadership still decides whether the evidence supports release confidence, whether the risk posture is acceptable, and whether a result deserves trust in a production workflow.
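Answer grading for an evaluation task can start with something as simple as precision and recall against the expected sources. The sketch below assumes each task lists the source IDs a good answer should cite; the `grade` function and the sample IDs are hypothetical.

```python
def grade(retrieved, expected):
    """Score one evaluation task by comparing retrieved and expected source IDs."""
    retrieved_set, expected_set = set(retrieved), set(expected)
    hits = retrieved_set & expected_set
    precision = len(hits) / len(retrieved_set) if retrieved_set else 0.0
    recall = len(hits) / len(expected_set) if expected_set else 0.0
    return {
        "precision": precision,
        "recall": recall,
        "missing": sorted(expected_set - retrieved_set),
    }


# Hypothetical "similar-defect retrieval" task.
result = grade(
    retrieved=["BUG-7", "BUG-9", "PR-3", "DOC-1"],
    expected=["BUG-7", "BUG-9", "BUG-12"],
)
print(result)
```

Tracking the `missing` list over time shows whether specific sources keep dropping out of results, which is often a freshness or connector problem rather than a ranking problem.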

1. Connect requirements, code, and defect history before writing tests

The first real gain from AI in quality assurance shows up before the first test case exists. QA quality rises when teams can inspect the release as a chain of decisions — what product approved, what engineering changed, what support flagged, and what failed in earlier versions.

That chain rarely appears in one document. A tester may need a Jira epic, a design note, a schema change, a pull request discussion, a rollback note, and three old defects to understand one feature well enough to test it properly. AI-driven search shortens that path by assembling the release lineage up front, which gives QA a firmer basis for scope, test depth, and scenario choice.

Build a release record, not just a requirements list

A requirements doc tells QA what the feature should do. It does not show where the logic lives, which services now share responsibility, which assumptions changed in the code, or whether the same area caused customer pain last quarter. For test authoring, that missing detail matters more than polished documentation.

A useful AI search workflow should surface a working record with enough detail to answer three practical questions before test design starts:

- What changed in implementation terms: Not just the feature name, but the modules, APIs, permissions, data contracts, and service boundaries touched by the release. This helps QA choose the right level of validation — unit-facing, integration-heavy, UI-heavy, or all three.

- What instability already exists in this area: Prior defects, reopened tickets, rollback events, and support escalations can reveal repeat failure signatures. AI systems that learn from historical defects and execution results already support predictive defect detection; the same history should inform test design before any suite runs.

- What business conditions shape expected behavior: Approval rules, entitlement logic, exception paths, regional differences, and policy constraints often live outside the engineering ticket. Search should pull those conditions into view so the test set reflects real business behavior rather than a simplified happy path.

This changes the role of QA from script assembly to evidence review. Teams can start with a release record that reflects actual system movement and business intent, which leads to tighter assertions and fewer blind spots.

Use code intelligence to trace impact beyond the ticket

Code intelligence helps QA move past the narrow description in a sprint item. A pull request may mention one service, while the real effect reaches authentication, logging, notifications, reporting, or downstream billing logic. Search that can map those relationships gives testers a more reliable basis for case selection, data setup, and regression scope.

That view also exposes details that standard test artifacts often miss: which engineer owns the changed component, which interfaces now depend on new inputs, which old bug pattern matches the current edit, and which environment assumptions could break under load or role changes. In practice, this is where AI-driven testing tools become more useful than static repositories of old test cases. They help testers trace evidence across code, documents, ownership, and defect memory in one flow.
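Impact tracing of this kind can be pictured as a walk over a dependency graph. The sketch below assumes a map from each service to the services that depend on it is already available; the service names and the `impacted` function are hypothetical, and a real code-intelligence layer would derive the graph from repositories and service maps rather than a hand-written dictionary.

```python
from collections import deque


def impacted(changed, dependents):
    """Breadth-first walk from a changed service to everything downstream of it."""
    seen = {changed}
    queue = deque([changed])
    order = []
    while queue:
        node = queue.popleft()
        for downstream in dependents.get(node, []):
            if downstream not in seen:
                seen.add(downstream)
                order.append(downstream)
                queue.append(downstream)
    return order


# Hypothetical graph: key -> services that consume it.
dependents = {
    "pricing": ["billing", "reporting"],
    "billing": ["notifications", "reporting"],
    "notifications": [],
}
print(impacted("pricing", dependents))
```

Breadth-first order lists the closest dependents first, which roughly matches where regression effort usually pays off soonest.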

For enterprise buyers, this is one of the clearest quality signals. The most valuable capability is not automated wording for test cases; it is the ability to retrieve the right implementation context at the right time, with enough depth to support careful validation. When QA starts from that level of traceability, test cases reflect how the software actually behaves under change — not how the release was summarized in planning.

2. Use natural-language search to build more accurate test cases

Turn plain-language questions into test evidence

After QA has a reliable fact base, testers should work in the language of behavior and risk. A query such as “What broke for EU invoice export after the pricing update?” or “Which mobile flows changed after the new access policy?” should return ranked evidence that maps directly to test design: release notes, affected services, defect clusters, support complaints, and owner commentary.

This approach helps because QA work rarely starts from a perfect spec. Test authors usually begin with an observed risk, a product change, or a customer symptom. Natural-language search closes that gap by translating intent into usable test input, which cuts prep time and improves precision across high-impact flows. That input typically takes three forms:

- Behavior map: the paths, states, and user actions the change can affect; this gives testers a sharper view of what to validate first.

- Condition set: the roles, regions, devices, data rules, and policy exceptions attached to that behavior; this turns one broad test idea into concrete coverage dimensions.

- Validation clues: the outputs that prove success or failure, such as API fields, UI states, system messages, or downstream updates; this strengthens expected results before execution starts.

Refine broad prompts into precise scenarios

Strong AI-driven testing tools do more than answer the exact words in a prompt. They apply query planning: a rough request gets split into narrower retrieval tasks that reflect how enterprise systems actually fail. “Checkout issue” can become tax calculation changes, payment retries after timeout, wallet-specific errors, promo-code edge cases, and support tickets tied to duplicate charges.

That refinement makes test design more disciplined. Instead of one broad case that says “verify checkout works,” QA can author scenario sets around threshold values, country rules, payment fallbacks, retry logic, and service-to-service handoff failures. Modern AI in quality assurance works best in this mode because software changes fast, dependencies shift often, and brittle test assumptions age out quickly.
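Query planning can be sketched as two steps: split a broad topic into narrower sub-queries, then merge the hits while remembering which sub-query surfaced each one. In the sketch below the facets are supplied by hand and the search call is a lookup table; both are assumptions made to keep the example self-contained, since a real planner would typically choose facets with a model and call a live index.

```python
def plan_queries(topic, facets):
    """Split one broad topic into narrower sub-queries, one per facet."""
    return [f"{topic} {facet}" for facet in facets]


def run_plan(queries, search):
    """Run each sub-query and record which sub-queries surfaced each hit."""
    found = {}
    for query in queries:
        for doc_id in search(query):
            found.setdefault(doc_id, []).append(query)
    return found


# Hypothetical stand-in for a real search call: a fixed lookup table.
FAKE_INDEX = {
    "checkout tax calculation": ["PR-3", "BUG-7"],
    "checkout payment retry": ["BUG-7", "INC-9"],
    "checkout duplicate charge": ["TICKET-4"],
}

queries = plan_queries(
    "checkout", ["tax calculation", "payment retry", "duplicate charge"]
)
evidence = run_plan(queries, lambda q: FAKE_INDEX.get(q, []))
print(evidence)
```

Items that appear under several sub-queries, such as `BUG-7` here, are natural candidates for the first scenario set because they connect more than one failure theme.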

Natural-language systems also help with scenario expansion from short prompts or user stories. A brief request can produce negative cases, boundary cases, and environment variants that manual drafting may miss under time pressure. That matters in sprint-based release cycles, where missed detail often shows up later as escaped defects or noisy regressions.

Keep every answer tied to source material

Useful retrieval for QA should include the answer and the evidence trail behind it. Test authors need direct access to the requirement revision, commit reference, incident record, or support case that shaped the result. Without that trail, a polished summary may look credible while hiding stale logic, an exception rule, or a fix that never reached production.

This evidence chain supports better test artifacts across the board. Assertions become more exact because expected outcomes trace back to real system behavior; defect writeups improve because the failure context is already assembled; review cycles move faster because engineers and product managers can inspect the same facts. In enterprise testing, trust comes from traceability, not fluency.

3. Prioritize high-risk areas to improve coverage without bloating the test suite

Suite growth carries a real cost. Every new test adds execution time, maintenance load, and another potential source of noise, so coverage strategy has to answer a sharper question: which scenarios deserve depth because failure there would create the most damage? Research on AI in software testing points to the same pattern across modern QA programs: the best results come from selective expansion, where AI helps teams direct effort toward the most failure-prone and business-critical paths.

AI-driven search supports that choice by pulling together signals that usually stay separate: unstable test history, release cadence, environment-specific defects, customer escalation themes, and production behavior after similar changes. That wider view helps QA teams identify workflows with outsized exposure — not only because they break often, but because they affect high-value transactions, cross-team dependencies, or support volume when they fail. Coverage becomes sharper because the search layer shows where another test adds signal and where it only adds maintenance.

Rank exposure before suite expansion

A stronger prioritization model looks past defect counts alone. It weighs how a feature behaves across environments, how often related components change, and how expensive failure becomes once it reaches users. AI search tools can assemble that profile from test platforms, deployment records, incident notes, and support systems in a way manual triage rarely can.

A practical model often includes:

- Environment spread: A workflow that behaves differently across browsers, mobile devices, regions, or identity states deserves deeper validation than one with a narrow execution surface. This matters most for UI-heavy and customer-facing paths, where small rendering or permission differences can create real defects.

- Blast radius: Some failures stay local; others affect billing, access control, fulfillment, or downstream services. Search can connect architecture notes, service maps, and prior incidents to show which areas carry multi-system risk.

- Suite health: Flaky checks, duplicate scripts, and stale regression cases weaken signal. AI can help teams identify tests that rarely catch defects, overlap with stronger checks, or fail for environmental reasons rather than product issues.

- Post-release cost: Support escalations, manual workarounds, and rollback history reveal where defects create operational drag. Those paths usually merit more scenario depth than features with low user impact and clean release history.

This method improves coverage in a way that raw expansion cannot. Teams can add negative cases, boundary conditions, and environment variants where the evidence shows real exposure, while trimming low-yield checks that absorb time without raising confidence. In practice, that often means fewer broad regression runs and more targeted validation around fragile interfaces, high-change services, and workflows with expensive failure modes.
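For the suite-health signal, one widely used heuristic is to flag a test as flaky when it both passes and fails on the same commit. The sketch below applies that heuristic to a list of run records; the record shape and test names are hypothetical.

```python
from collections import defaultdict


def flaky_tests(runs):
    """Flag tests that both passed and failed on the same commit.

    runs: iterable of (test_name, commit_sha, passed) tuples.
    """
    outcomes = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    return sorted({test for (test, _), seen in outcomes.items() if len(seen) == 2})


# Hypothetical run history.
runs = [
    ("test_login", "abc", True),
    ("test_login", "abc", False),   # same commit, different outcome
    ("test_export", "abc", False),
    ("test_export", "def", True),   # outcome changed with the code
    ("test_cart", "abc", True),
]
print(flaky_tests(runs))
```

`test_export` is not flagged because its outcome changed between commits, which points to a code change rather than instability in the test.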

AI-driven search also helps expose where the suite has gone stale. A product area may look well covered until search surfaces a gap between current implementation and the tests still in rotation — a visual change that altered behavior across screen sizes, a new API response shape, or a policy update that changed access logic without a matching test refresh. That kind of retrieval supports tighter suites, cleaner release signals, and a more honest view of what QA has actually validated.

4. Investigate failures faster and improve defect detection quality

Test design sets the stage; failure analysis determines whether QA can protect release quality under pressure. Once a suite run surfaces an issue, the next task is classification: product regression, flaky automation, unstable dependency, bad data setup, browser-specific break, or environment drift.

AI-driven search tools improve that classification step by pulling together runtime evidence that rarely lives in one place. Instead of a manual pass across logs, traces, test management records, feature flag history, browser and device results, and incident systems, QA can inspect one grounded result set that reflects the exact failure signature. That shortens the path to a reproducible defect and cuts back on misrouted bugs.

What better triage looks like

The practical gain shows up in defect detection quality. A failed assertion on its own says very little; the surrounding evidence determines whether the team has found a real software issue or just noise from the test stack.

- Root-cause ranking: Strong AI search can connect stack traces, error strings, prior failure signatures, and recent service anomalies to rank the most likely sources of a break. That gives QA a sharper first hypothesis and helps engineering start from the right subsystem instead of a broad search.

- Environment-aware comparison: Many defects hide inside one condition set: one browser version, one mobile device class, one region, one permissions state, or one feature-flag path. Search can compare pass and fail results across those dimensions and expose whether the issue belongs to the product itself or to a narrow execution context.

- Reproduction detail with less manual assembly: High-quality triage depends on exact inputs: test data version, account state, API responses, upstream service status, and dependency behavior. AI search can surface those facts together so QA can reproduce the issue with precision rather than rebuild context from memory and scattered notes.

This approach matters most in release environments with constant change. A single unclear failure can trigger duplicate investigation across QA, engineering, and support; a well-classified failure can move straight to the right owner with the right evidence.
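The environment-aware comparison described above can be approximated by computing the failure rate per value of each dimension. The sketch below assumes run results are available as dictionaries with a `passed` flag; the field names and sample data are hypothetical.

```python
from collections import defaultdict


def failure_rates(results, dimension):
    """Return the share of failed runs for each value of one dimension."""
    totals = defaultdict(lambda: [0, 0])  # value -> [failures, runs]
    for result in results:
        bucket = totals[result[dimension]]
        bucket[1] += 1
        if not result["passed"]:
            bucket[0] += 1
    return {value: fails / runs for value, (fails, runs) in totals.items()}


# Hypothetical results for one test across browsers and regions.
results = [
    {"browser": "chrome", "region": "eu", "passed": True},
    {"browser": "chrome", "region": "us", "passed": True},
    {"browser": "safari", "region": "eu", "passed": False},
    {"browser": "safari", "region": "us", "passed": False},
]
print(failure_rates(results, "browser"))
print(failure_rates(results, "region"))
```

In this sample the browser dimension separates passes from failures cleanly while region does not, which suggests a browser-specific break rather than a general product regression.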

Pattern detection turns isolated failures into strategy

The next advantage comes from recurrence analysis. AI-driven search can cluster failures by signature, subsystem, release train, customer impact, or environment profile, which makes hidden defect families far easier to spot. What looks like a one-off timeout may in fact map to the same dependency instability that appeared in three earlier sprints; what appears as separate UI issues may point to one underlying permissions defect across multiple workflows.
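A basic form of signature clustering normalizes the volatile parts of an error message, such as numbers and memory addresses, and groups failures that share the result. The sketch below uses two regular expressions for that; the normalization rules and the sample messages are assumptions, and real systems usually apply richer rules or learned similarity.

```python
import re
from collections import defaultdict

_HEX = re.compile(r"0x[0-9a-fA-F]+")
_NUM = re.compile(r"\d+")


def signature(message):
    """Replace volatile tokens so similar failures share one signature."""
    message = _HEX.sub("<hex>", message)   # addresses first, before plain digits
    message = _NUM.sub("<n>", message)
    return " ".join(message.lower().split())


def cluster(failures):
    """Group (failure_id, message) pairs by normalized signature."""
    groups = defaultdict(list)
    for failure_id, message in failures:
        groups[signature(message)].append(failure_id)
    return dict(groups)


# Hypothetical failures from three runs.
failures = [
    ("F-1", "Timeout after 3000 ms calling billing-svc"),
    ("F-2", "Timeout after 5000 ms  calling billing-svc"),
    ("F-3", "NullPointer at 0x7ffe12"),
]
clusters = cluster(failures)
print(clusters)
```

Here the two timeouts collapse into one family even though their durations differ, which is the kind of recurrence a one-by-one review tends to miss.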

That broader view supports more than triage. It supports defect prediction and fault localization — two areas where AI already shows real value in software testing. When search consistently surfaces the same weak points, teams can respond with targeted action: deeper regression around one API contract, stronger validation for one integration, synthetic test data for a risky edge case, or tighter release controls around one service that shows repeat instability.

The discipline here should stay operational and measurable. QA leaders should track metrics such as time to first reproducible case, defect recurrence after fix, percentage of failures traced to flaky automation, and rate of bug reclassification after engineering review. Those measures show whether the search layer improves defect detection or simply moves noise faster.
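Three of those measures can be computed directly from triage records. The sketch below assumes each record carries an initial and final classification plus detection and reproduction timestamps; the field names, class labels, and sample dates are hypothetical.

```python
from datetime import datetime


def triage_metrics(failures):
    """Summarize flaky share, reclassification rate, and mean hours to reproduce."""
    total = len(failures)
    if total == 0:
        return {"flaky_share": 0.0, "reclassified_rate": 0.0, "mean_hours_to_repro": None}
    flaky = sum(1 for f in failures if f["final_class"] == "flaky_test")
    reclassified = sum(1 for f in failures if f["initial_class"] != f["final_class"])
    hours = [
        (f["reproduced_at"] - f["detected_at"]).total_seconds() / 3600
        for f in failures
        if f["reproduced_at"] is not None
    ]
    return {
        "flaky_share": flaky / total,
        "reclassified_rate": reclassified / total,
        "mean_hours_to_repro": sum(hours) / len(hours) if hours else None,
    }


# Hypothetical triage records; the dates are placeholders.
day = lambda hour: datetime(2024, 1, 8, hour, 0)
records = [
    {"initial_class": "product_defect", "final_class": "product_defect",
     "detected_at": day(9), "reproduced_at": day(11)},
    {"initial_class": "product_defect", "final_class": "flaky_test",
     "detected_at": day(9), "reproduced_at": None},
    {"initial_class": "environment", "final_class": "environment",
     "detected_at": day(10), "reproduced_at": day(14)},
    {"initial_class": "product_defect", "final_class": "flaky_test",
     "detected_at": day(12), "reproduced_at": None},
]
metrics = triage_metrics(records)
print(metrics)
```

Comparing these numbers before and after the search layer is introduced gives a more defensible view of its effect than anecdotal feedback.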

5. Bring shared context to QA, engineering, support, and product teams

Software quality improves when QA does not carry the full burden of interpretation. AI-driven search helps each adjacent team work from live company context inside its own workflow — product sees requirement drift, engineering sees service impact, support sees ticket clusters, and QA sees how those signals connect before release pressure rises.

This matters most in large enterprises, where information spreads across issue trackers, repos, design systems, incident tools, internal docs, and customer platforms. Search across those systems reduces the need for manual status chasing and gives each function a clearer role in quality decisions, not just in post-release cleanup.

Shared context improves handoffs

Cross-functional work often fails because each team restates the same problem in a different format. QA logs the defect, product reframes the expected behavior, engineering traces the code path, and support adds customer urgency later than it should; the cost shows up as rework, slower triage, and weak prioritization.

AI-driven search reduces that translation tax. Instead of one team packaging context for the next, the system can assemble a role-specific view from connected evidence — requirement updates, ownership data, recent deployments, prior incidents, and support trends — so each group starts from a stronger baseline.

- Planning briefs: A QA lead can surface repeat failure themes, recent feature changes, and affected services before sprint scope locks. Product teams get a sharper view of what needs explicit acceptance criteria; engineering sees where dependency risk sits before code review backlog grows.

- Customer-impact snapshots: Support data adds weight to technical decisions. A low-frequency bug in an admin flow and a high-volume issue in checkout should not receive the same treatment; search can expose that difference early.

- Decision logs: Teams need a compact record of what changed, what risk that change introduced, and which assumptions still need validation. That record cuts drift between planning, test execution, and release review.

- Owner-aware routing: Connected search can tie a defect to the right service owner, code reviewer, or product contact faster than manual escalation chains. That matters in organizations with dozens of teams and shared platform dependencies.
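Owner-aware routing can be approximated with a longest-prefix match between the failing file path and an ownership map. The sketch below assumes such a map exists; the paths, team names, and the `triage-queue` fallback are hypothetical.

```python
def route_owner(path, owners, default="triage-queue"):
    """Return the owner whose path prefix matches most specifically."""
    matches = [prefix for prefix in owners if path.startswith(prefix)]
    if not matches:
        return default
    return owners[max(matches, key=len)]


# Hypothetical ownership map: path prefix -> owning team.
owners = {
    "services/billing/": "team-billing",
    "services/billing/tax/": "team-tax",
    "web/": "team-web",
}
print(route_owner("services/billing/tax/rates.py", owners))
print(route_owner("services/billing/invoice.py", owners))
print(route_owner("docs/readme.md", owners))
```

Choosing the longest matching prefix sends a defect to the most specific owner first, while the fallback keeps unowned paths visible instead of silently dropping them.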

Role-based visibility keeps collaboration usable

Shared context only works when access stays disciplined. Enterprise QA often touches confidential support cases, internal incident reviews, proprietary architecture details, and policy-controlled release material; a useful system has to preserve the access rules of each source while still letting teams collaborate across them.

That balance affects adoption as much as security. Product teams need visibility into quality signals without exposure to raw customer records; support teams need issue patterns and approved technical context without full access to engineering systems; QA and engineering need deeper detail where their work requires it. Search that respects role boundaries keeps collaboration practical instead of forcing manual redaction or side-channel updates.

- Audience-specific summaries: The same underlying evidence can support different outputs for different teams: a product-facing behavior note, an engineering-focused implementation brief, or a support-safe issue synopsis.

- Release risk memos: Grounded AI can draft a short memo that pulls from approved sources only, which helps stakeholders review open risk without a long prep cycle.

- Validation checklists: Retrieved evidence can turn into a first-pass checklist for release managers, QA leads, or support readiness teams. That saves time and keeps the checklist tied to current system behavior rather than stale templates.

- Follow-up records: After a defect review, teams need a clean record of owners, blocked dependencies, and next checks. A grounded draft gives them that structure without another round of manual assembly.

This approach does not replace judgment from QA leads, engineers, product managers, or support managers. It gives them a faster first cut based on retrievable evidence. Rich-context AI has been credited with similar gains in onboarding and response quality in customer service workflows, and the same model fits enterprise QA.

6. Put governance, permissions, and measurement in place from day one

By the time AI-driven search starts to shape test plans, triage paths, or release checks, the question shifts from capability to control. QA leaders need clear rules for what the system can access, how answers get verified, and which signals prove that the tool improves software quality rather than just speeding up activity.

That discipline has to sit inside the platform design. Search for QA often pulls from ticketing systems, source control, test management, incident records, knowledge bases, and support tools in the same response; without strict controls on retrieval, citation, and data handling, the result may be fast but not dependable.

Set hard boundaries on data access and model use

A useful QA search system does more than hide files a user should not see. It needs connector-level controls, source-preserved permissions, and clear policies for what context can pass into downstream AI steps. That matters because test workflows often cross from internal engineering material into customer-facing evidence, regulated records, or post-incident analysis.

Strong governance also covers how model providers handle enterprise data after retrieval. For many teams, that means approved model routing, zero-retention terms where required, audit logs for sensitive lookups, and a clear separation between indexed company knowledge and model training. In sectors with tighter compliance demands, those controls decide whether AI for software testing is usable in production at all.
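A policy gate in front of downstream model calls might look like the following sketch. It assumes a simple in-process check; `ModelPolicy`, `ModelRequest`, `check_request`, and the model names are hypothetical and do not describe any specific vendor's controls.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPolicy:
    approved_models: frozenset
    require_zero_retention: bool


@dataclass(frozen=True)
class ModelRequest:
    model: str
    zero_retention: bool
    contains_sensitive_source: bool


def check_request(request, policy, audit_log):
    """Return True if the request may proceed; record sensitive lookups either way."""
    allowed = request.model in policy.approved_models
    if policy.require_zero_retention and not request.zero_retention:
        allowed = False
    if request.contains_sensitive_source:
        audit_log.append((request.model, "allowed" if allowed else "blocked"))
    return allowed


policy = ModelPolicy(approved_models=frozenset({"model-a"}),
                     require_zero_retention=True)
audit_log = []

ok = check_request(ModelRequest("model-a", True, True), policy, audit_log)
blocked = check_request(ModelRequest("model-b", True, False), policy, audit_log)
```

The first request passes and leaves an audit entry because it touched a sensitive source; the second is rejected because its model is not on the approved list.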

Choose workflows that QA can verify end to end

A broad rollout creates noise before it creates value. The better starting point is a small set of QA workflows where teams can compare the system’s output against known records, existing test assets, and release outcomes without ambiguity.

That usually means work such as:

- Change-impact review: Surface which services, APIs, or user flows changed in the latest release and which existing tests map to those changes.

- Requirement drift checks: Compare current acceptance criteria with test cases and release notes to spot where product intent shifted but validation did not.

- Flaky failure investigation: Pull prior runs, environment notes, similar failures, and recent code movement into one view so teams can separate instability from true defects.

- Customer-signal mapping: Connect support escalations and incident patterns to weak test areas, especially for regressions that standard suites miss.

- Release exception review: Assemble known issues, approved workarounds, ownership records, and unresolved blockers for final decision support.
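The change-impact review in the list above is the easiest of these workflows to verify by hand, which makes it a reasonable first pilot. A minimal sketch, assuming the team already has a mapping from test names to the services each test exercises (`test_index`, the service names, and the test names are hypothetical):

```python
def change_impact(changed_services, test_index):
    """Map each changed service to its known tests and flag uncovered ones.

    test_index: dict of test name -> set of services the test exercises.
    """
    impact = {service: [] for service in changed_services}
    for test_name, services in sorted(test_index.items()):
        for service in services:
            if service in impact:
                impact[service].append(test_name)
    uncovered = [s for s in changed_services if not impact[s]]
    return impact, uncovered


test_index = {
    "test_checkout_happy_path": {"cart-api", "payment-api"},
    "test_refund_flow": {"payment-api"},
}
impact, uncovered = change_impact(["payment-api", "tax-service"], test_index)
```

In this sample, `payment-api` maps to two existing tests while `tax-service` has none, so it lands in `uncovered` as a candidate for new coverage. A reviewer can check both outputs against the release diff and the test repository without ambiguity.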

This type of scope gives QA teams a closed loop. They can inspect whether the answer cited the right sources, whether the retrieval missed a critical artifact, and whether the output changed the quality of the decision.

Track evidence quality and workflow impact together

Usage does not equal value. A search tool may attract heavy traffic and still return stale documents, weak rankings, or overconfident summaries that add review work back into the process. QA leaders need measures that reflect retrieval quality and operational effect at the same time.

Useful measures often include:

- Time to first credible evidence: How fast a tester reaches the first source set strong enough to support a test decision.

- Grounded answer rate: The share of responses that include current, relevant source material rather than generic synthesis.

- Stale-source rate: How often answers rely on outdated release notes, superseded specs, or retired runbooks.

- Defect investigation compression: Whether the system reduces the number of steps needed to move from failure signal to likely cause.

- Coverage recovery: How often search reveals missing tests after a feature change, incident, or support pattern.
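Three of the measures above can be computed from a small log of reviewed answers. The sketch below assumes a reviewer has already labeled each answer; the `ReviewedAnswer` fields and the sample values are hypothetical, and the functions expect a non-empty list.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class ReviewedAnswer:
    seconds_to_first_credible_source: float
    cited_sources: int  # sources a reviewer judged current and relevant
    stale_sources: int  # sources a reviewer judged outdated or superseded


def grounded_answer_rate(answers):
    """Share of answers that cite at least one current, relevant source."""
    return sum(a.cited_sources > 0 for a in answers) / len(answers)


def stale_source_rate(answers):
    """Share of answers that rely on at least one outdated source."""
    return sum(a.stale_sources > 0 for a in answers) / len(answers)


def median_time_to_evidence(answers):
    """Median seconds until a tester reached the first credible source."""
    return median(a.seconds_to_first_credible_source for a in answers)


answers = [
    ReviewedAnswer(30, cited_sources=2, stale_sources=0),
    ReviewedAnswer(90, cited_sources=0, stale_sources=0),
    ReviewedAnswer(45, cited_sources=1, stale_sources=1),
    ReviewedAnswer(60, cited_sources=3, stale_sources=0),
]

print(grounded_answer_rate(answers))     # 0.75
print(stale_source_rate(answers))        # 0.25
print(median_time_to_evidence(answers))  # 52.5
```

Reporting these together matters: a high grounded rate paired with a rising stale-source rate suggests the index is citing confidently from outdated material.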

This review process should not depend on intuition alone. High-maturity teams build benchmark query sets for common QA tasks, score retrieval and answer quality on a recurring cadence, and use automated grading where it is reliable enough to support human reviewers. Research on AI evaluation in enterprise systems suggests that model-based grading can approach human consistency when the task is narrow and the criteria stay explicit, so it works best as a supplement to human review rather than a substitute.
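A benchmark query set can start as a plain mapping from each query to the sources a QA lead considers required evidence, scored with recall at k. In the sketch below, `fake_search` stands in for a real search client, and the queries and source IDs are hypothetical.

```python
def recall_at_k(retrieved, expected, k):
    """Fraction of expected source IDs found in the top-k retrieved results."""
    top_k = set(retrieved[:k])
    return len(top_k & set(expected)) / len(expected)


def score_benchmark(benchmark, search_fn, k=5):
    """Run every benchmark query and return per-query and mean recall at k."""
    per_query = {
        query: recall_at_k(search_fn(query), expected, k)
        for query, expected in benchmark.items()
    }
    mean = sum(per_query.values()) / len(per_query)
    return per_query, mean


# Hypothetical benchmark: query -> source IDs marked as required evidence.
benchmark = {
    "checkout timeout regressions": ["INC-12", "PR-480"],
    "refund acceptance criteria": ["SPEC-7"],
}


def fake_search(query):
    """Stand-in for a real search client, used only to make the sketch runnable."""
    canned = {
        "checkout timeout regressions": ["PR-480", "DOC-3", "INC-99"],
        "refund acceptance criteria": ["SPEC-7", "SPEC-2"],
    }
    return canned[query]


per_query, mean_recall = score_benchmark(benchmark, fake_search, k=3)
```

Recall at k covers retrieval only. Answer quality still needs a separate rubric, whether a human or an automated grader applies it.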

Human judgment still sets the bar for release quality. AI-driven testing tools can surface risk, cluster evidence, and draft a response path; they cannot decide which tradeoffs fit a launch, which defects need escalation, or what level of uncertainty a business should accept before shipment.

How AI enhances test accuracy and coverage in quality assurance: Frequently Asked Questions

These questions add operational detail to the points above. They focus on what QA leaders and test engineers usually need next: concrete outcomes, evaluation criteria, and rollout risks that shape day-to-day use.

1. What specific benefits do AI-driven search tools provide in quality assurance?

One major benefit is a reduction in false confidence. QA teams often pass a release because the written tests look complete, while key business exceptions, environment constraints, or support-driven edge cases never make it into the suite. AI-driven search helps surface those blind spots early by exposing signals that usually stay buried in internal comments, incident reviews, and prior release notes.

It also improves continuity across the software lifecycle. Teams gain a practical way to preserve test rationale, defect context, and release history so that knowledge does not disappear when ownership shifts. That matters in large enterprises, where product managers, SREs, support leads, and engineers may each hold one part of the quality picture.

2. How can AI-driven search tools improve the efficiency of testing processes?

The strongest efficiency gain often appears before formal execution starts. A tester can enter a sprint with a much shorter prep cycle because the system can expose risky changes, unresolved dependencies, and known trouble spots without a long discovery phase across separate tools.

This also helps QA maintain pace when release frequency rises. Instead of rebuilding context from scratch every cycle, teams can reuse evidence trails from earlier incidents, prior test plans, and release reviews. That reuse supports faster handoff between manual testing, automation work, and defect triage.

A few workflow improvements stand out:

- Exploratory test setup: Search can reveal unusual customer paths, support phrasing, and historical edge cases that deserve manual attention.

- Regression reruns: Teams can locate the exact areas where repeated instability shows up, which makes rerun decisions more deliberate.

- New team ramp-up: New hires can inspect past failures, ownership history, and release patterns without prolonged reliance on tribal knowledge.

3. What are the key features of AI-driven search tools for QA?

For QA, the most useful features go beyond basic retrieval. The tool should understand how software work evolves over time, who owns which systems, and whether a given piece of evidence is still fresh enough to support a release decision.

Several capabilities matter in practice:

- Freshness awareness: Results should reflect recent commits, deployment changes, updated runbooks, and current product decisions rather than old artifacts with high keyword match.

- Entity resolution: The system should recognize that one feature may appear under different names across tickets, code, dashboards, and customer-facing language.

- Change sensitivity: QA needs tools that can distinguish stable reference material from content that recently shifted and may require renewed test depth.

- Ownership mapping: Search becomes more useful when it can connect a failing area to the right team, maintainer, or reviewer without extra lookup work.

- Quality controls: Admin teams need ways to inspect relevance, spot weak results, and refine retrieval behavior over time.

These features make the difference between generic enterprise search and a system that supports release-critical QA work.
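Freshness awareness, the first capability in the list above, can be illustrated with a simple rerank that discounts older documents. This sketch assumes an exponential decay with a 30-day half-life, which is an illustrative choice and not a description of how any particular product ranks results; the function names and sample documents are hypothetical.

```python
from datetime import datetime, timedelta


def freshness_weight(updated_at, now, half_life_days=30.0):
    """Exponential decay: a document loses half its weight every half_life_days."""
    age_days = (now - updated_at).total_seconds() / 86400
    return 0.5 ** (age_days / half_life_days)


def rerank(results, now, half_life_days=30.0):
    """Order results by keyword relevance multiplied by freshness weight.

    results: list of (title, relevance, updated_at) tuples.
    """
    return sorted(
        results,
        key=lambda r: r[1] * freshness_weight(r[2], now, half_life_days),
        reverse=True,
    )


now = datetime(2024, 6, 1)  # fixed reference time so the example is repeatable
results = [
    ("Old runbook with strong keyword match", 0.9, now - timedelta(days=180)),
    ("Recent deployment note", 0.6, now - timedelta(days=3)),
]
ranked = rerank(results, now)
```

With these inputs the recent deployment note ranks above the six-month-old runbook despite its weaker keyword match. A production system would likely vary the half-life by content type, since a stable architecture reference ages more slowly than a release note.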

4. How do AI-driven search tools enhance test accuracy and coverage?

Accuracy improves when test design accounts for the real shape of system behavior, not just the intended happy path. Search can reveal low-frequency but high-impact cases — permission edge cases, environment-specific breakpoints, dependency timeouts, or support-reported failures that formal requirements never spelled out clearly.

Coverage improves when QA uses those signals to rebalance the suite. Instead of adding more checks everywhere, teams can add depth where defect recurrence, service churn, or user friction suggests real exposure. That leads to broader practical coverage across integrations, exception handling, and release-specific change zones.

Three mechanisms matter here:

- Exception discovery: Internal notes, support escalations, and postmortems often describe behaviors that never reached the original test plan.

- Cross-system pattern matching: Similar failures across environments, releases, or services can reveal weak coverage in one shared dependency.

- Suite correction: Search can help expose obsolete assumptions in older tests, which improves result quality without a major suite expansion.
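The cross-system pattern matching in the list above can be approximated by grouping failure records by the dependencies they touch. The sketch below assumes failure records already list their dependencies; the record shape, service names, and `shared_dependency_hotspots` function are hypothetical.

```python
from collections import defaultdict


def shared_dependency_hotspots(failures, min_services=2):
    """Find dependencies that appear in failures across several services.

    failures: list of dicts with "service" and "dependencies" keys.
    Returns {dependency: sorted list of affected services}.
    """
    affected = defaultdict(set)
    for failure in failures:
        for dependency in failure["dependencies"]:
            affected[dependency].add(failure["service"])
    return {
        dep: sorted(services)
        for dep, services in affected.items()
        if len(services) >= min_services
    }


failures = [
    {"service": "checkout", "dependencies": ["auth-gateway", "payment-api"]},
    {"service": "profile", "dependencies": ["auth-gateway"]},
    {"service": "search", "dependencies": ["index-store"]},
]
hotspots = shared_dependency_hotspots(failures)
```

In this sample only `auth-gateway` shows up in failures from more than one service, which points to a shared dependency that may deserve its own integration coverage.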

5. What challenges might organizations face when implementing AI-driven search tools in QA?

One challenge is evidence quality drift. Enterprise systems change fast; owners rename services, teams split repositories, release records move, and ticket taxonomies evolve. Without deliberate maintenance, retrieval quality can slip even when the underlying AI remains strong.

Another challenge is operational discipline. QA organizations need clear ownership for search quality, result review, and rollout policy. A tool may perform well in one workflow and fail in another if nobody tracks freshness, benchmarks hard queries, or checks whether the returned evidence still supports release decisions.

A careful rollout usually requires:

- Benchmark queries: Teams should build a set of difficult, high-value QA questions and review performance against them on a regular schedule.

- Source stewardship: Repositories, ticket fields, runbooks, and release records need consistent structure or the retrieval layer will inherit the mess.

- Policy review: Data residency, retention limits, and model-use terms should align with internal standards before broad deployment.

- Human review points: Test leads still need authority over risk acceptance, release judgment, and defect severity.

The strongest programs treat AI search as part of the QA operating model, not as a side utility.

AI-driven search does not replace the judgment that makes QA valuable; it gives that judgment a stronger foundation of evidence, context, and traceability. Teams that adopt it as a change in how QA operates, rather than as one more tool, can build quality programs that keep pace with release velocity instead of falling behind it.
