How data governance frameworks support AI search optimization

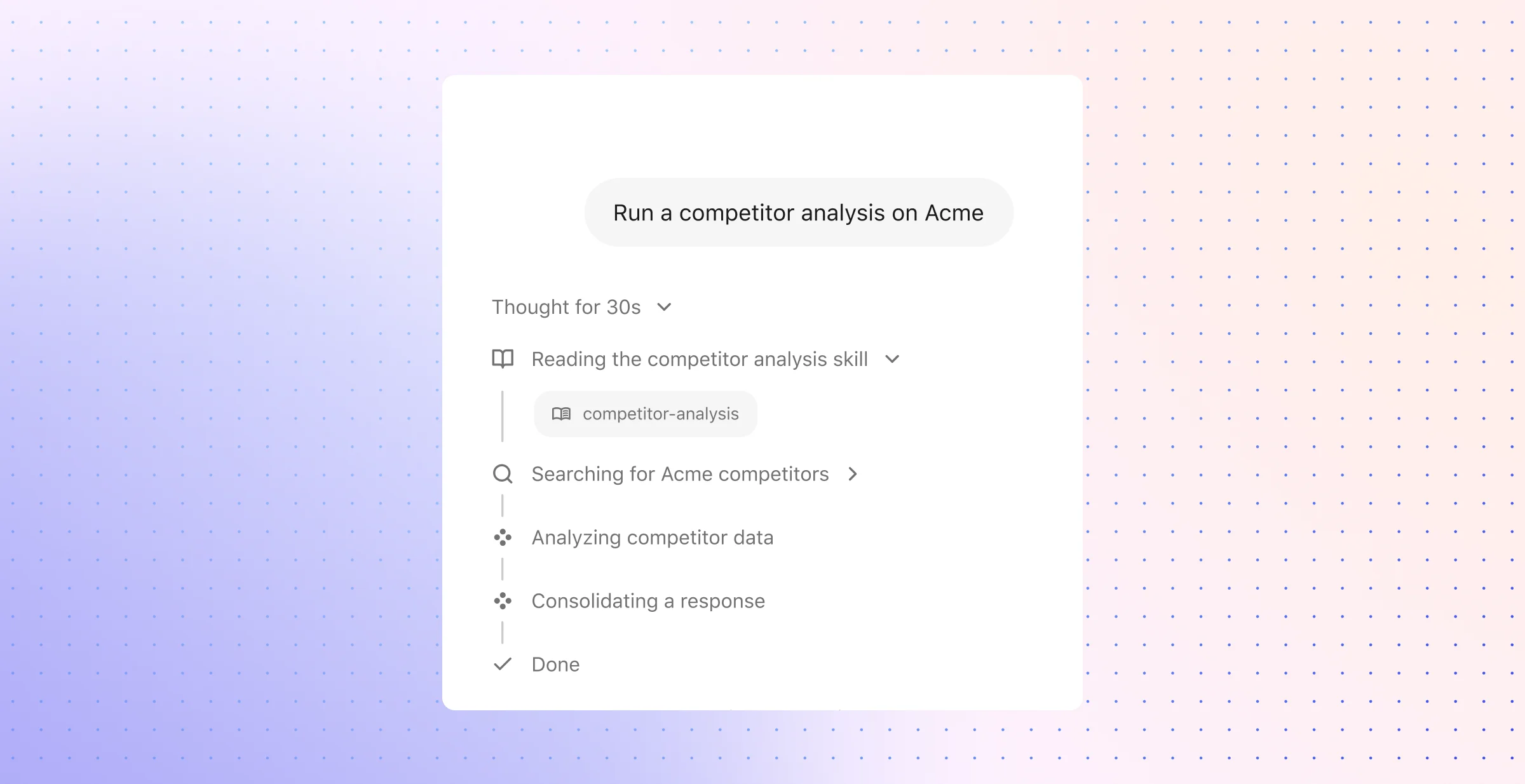

AI-powered search has fundamentally changed how enterprise teams find and use information. Instead of matching keywords across a single repository, modern systems interpret intent, retrieve content from dozens of applications, and synthesize answers in real time — often through techniques like Retrieval Augmented Generation (RAG) that ground large language models in company-specific knowledge.

That capability, however, depends entirely on the quality and control of the data underneath it. Data governance — the set of policies, roles, and controls that keep enterprise data accurate, secure, current, and usable — is what determines whether AI search delivers trustworthy answers or surfaces stale, duplicated, or overexposed content.

For fast-growing enterprises with distributed knowledge across engineering, support, sales, HR, and IT, the relationship between governance and search is not abstract. It is operational. Every access control, metadata standard, content lifecycle rule, and compliance policy shapes what an AI search system can retrieve, who can see it, and how confidently it can respond.

What is data governance and how does it relate to AI-powered search?

Data governance is the structured framework an organization uses to manage the availability, usability, integrity, and security of its data assets. It defines who owns specific datasets, who can access them, under what conditions, and how long they should be retained. In an enterprise context, governance encompasses data quality standards, metadata management, access control policies, compliance requirements, and data lifecycle management — all coordinated to ensure that information remains reliable and accountable as it moves through systems and teams.

The connection to AI-powered search is direct and consequential. Modern enterprise search does far more than return a list of links; it crawls and indexes content across hundreds of SaaS applications, normalizes that content for ranking, and — in RAG-based architectures — feeds retrieved documents to a large language model to generate a synthesized answer. Every stage of that pipeline depends on governed data. If source permissions are broken, the system may surface restricted content. If metadata is inconsistent, ranking suffers. If documents are outdated or duplicated, the generated response inherits those flaws.

Why governance is the foundation, not a bottleneck

A common concern is that governance adds friction to search. The opposite is true at scale. Governance is what makes AI search trustworthy enough to deploy across an entire organization. Consider the core dependencies:

Access control and permissions: Enterprise AI search must respect the permissions set in every source system — from document repositories to messaging platforms to ticketing tools. A well-governed data environment preserves those permissions upstream of any AI model, so users only see content they are authorized to access. Without this, data leakage becomes a systemic risk, especially as organizations move from basic search to assistants and agents that can plan, execute, and act on retrieved information.

Content quality and freshness: AI search relevance drops fast when the indexed content is stale, incomplete, or poorly labeled. Governance establishes review cycles, ownership accountability, and retention policies that keep the knowledge base current. This matters especially for RAG, where the language model's response quality is bounded by the quality of the documents it receives as context.

Metadata and taxonomy standards: Semantic search models and knowledge graphs both perform better when enterprise content carries clear, consistent metadata — titles, authors, dates, sensitivity labels, business units, and topic classifications. Governance defines those standards. The result is stronger ranking signals, more precise filtering, and better connections between people, content, and activity across the organization.

Authority and source trust: Not all enterprise content carries equal weight. A governance framework helps define which repositories and documents are official, which are drafts, and which are deprecated. This mirrors the logic behind authority-based ranking algorithms — similar in spirit to how PageRank determined webpage importance — and reduces the risk that low-quality or duplicated content outranks a trusted source in search results.

Compliance and auditability: Regulations like GDPR, HIPAA, and emerging AI-specific standards such as the EU AI Act require organizations to demonstrate control over how data is collected, stored, accessed, and used. Governance builds that control into the data layer itself — through classification, retention schedules, consent tracking, and audit trails — so that AI search and any downstream AI-driven insights remain compliant by design.

The governance-search feedback loop

A useful way to think about the relationship: governance defines the rules of the road, and AI search turns governed data into fast, usable answers. But the loop also runs in reverse. Search analytics — click-through rates, zero-result queries, low-confidence answers — reveal gaps in governance. A spike in stale results may signal a retention policy that needs enforcement. A pattern of permission errors may point to misconfigured access controls in a source system. Mature teams treat search relevance and data governance as part of the same enterprise data strategy, each one strengthening the other over time.

This operational link is what separates organizations that deploy AI search as a novelty from those that scale it into a reliable, permissions-aware, enterprise-grade platform — the kind of architecture, such as what we offer at Glean, where continuous crawling, hybrid retrieval, and knowledge graph context all depend on well-governed source data to deliver accurate results.

How data governance frameworks support AI search optimization

AI search optimization depends on more than model quality. It depends on whether the search layer can rank trustworthy material, honor source-level restrictions, and interpret the business meaning around every file, message, case, and record.

A strong governance framework supports that work in five practical ways: it gives ranking systems better signals, applies policy at retrieval time, keeps the index current, makes answers defensible, and turns search behavior into a source of operational insight. That is why mature organizations tie governance work to search performance from the start.

1. Governance gives ranking systems better signals

Enterprise search has a ranking problem before it has a model problem. The system needs enough structure to tell the difference between an approved policy and a team draft, between a current runbook and last year’s workaround, and between a source of record and a copied fragment.

That distinction comes from governed signals such as:- Metadata completeness: titles, authors, dates, business unit labels, document type, and sensitivity markers give the ranker usable structure instead of raw text alone.- Authority markers: approval state, repository class, owner status, and review history help the system assign weight to official material.- Entity relationships: accurate links across people, teams, projects, and records improve graph-based context for personalized retrieval.- Terminology control: shared taxonomy and consistent naming reduce drift across similar concepts, acronyms, and internal shorthand.

This matters most in hybrid retrieval. Semantic models help with intent; lexical methods help with exact strings like ticket IDs, error codes, and policy names; graph context helps with relevance inside a specific business setting. Governance improves each signal path, which raises precision without narrow query tuning.

2. Governance applies policy at retrieval time

Good security practice does not stop at storage. The retrieval layer needs the same control logic as the source systems, especially when one search experience spans chat, file storage, CRM records, wiki pages, and service platforms.

That means policy must travel with the content:- Permission inheritance: the search index should reflect source-system access rules, not replace them with a separate permission model.- Classification-aware filtering: labels for confidential, regulated, legal, finance, or HR content should shape retrieval before answer generation.- Audit-ready access control: teams need a record of what content entered the result set, why it ranked, and whether a user had rights to see it.- Oversharing detection: governance should surface files or folders with exposure beyond intended audiences before assistants or agents touch them.

This is where AI search and AI governance start to overlap. Once agents can draft replies, route work, or update systems, retrieval becomes a control point for risk. A weak policy layer can turn one bad permission into a broad downstream action.

3. Governance keeps the index current and coherent

Search quality drops when the index fills with expired, superseded, or context-poor material. Many organizations do not lack information; they lack clear rules for what should remain active, what should archive, and what should disappear from retrieval.

A governance framework addresses that through disciplined lifecycle control:1. Ownership assignment: critical content domains need named owners with responsibility for accuracy and review.2. Freshness standards: operational content such as support procedures, incident playbooks, and HR policies should meet explicit review intervals.3. Retention rules: outdated files should archive or lose rank based on policy, legal requirements, and business value.4. Lineage visibility: the system should preserve source history so teams can tell which version came first, which one changed, and which one replaced it.

These controls reduce conflict in the retrieval set. They also improve the context window for RAG systems, which perform best when retrieved material is current, distinct, and internally consistent.

4. Governance makes answers defensible

An answer has more value when a user can verify where it came from and why the system chose that source. In enterprise settings, that level of traceability matters as much as answer speed.

Governance supports defensible answers through provenance:- Source traceability: citations should resolve to identifiable, accessible records rather than anonymous snippets.- Approved-source preference: the system should favor sanctioned repositories for policy, process, and compliance questions.- Conflict visibility: when sources disagree, governance should help expose status, recency, and ownership so the system can prefer the stronger record.- Steward accountability: every high-value content area should map to a team or owner who can correct errors and maintain source quality.

This is especially important in regulated and high-change environments. A support lead needs the current procedure, not a plausible paraphrase. A sales rep needs the active pricing rule, not an old deck. A manager needs the approved leave policy, not a comment thread.

5. Governance turns search behavior into an operating signal

Search analytics do more than measure adoption. They reveal where the governance model breaks down.

Useful patterns include:- Zero-result queries: these often point to taxonomy gaps, missing synonyms, or content that lacks basic metadata.- Low-trust clicks: repeated selection of buried results can signal weak authority weighting or poor source labeling.- Outdated citations: frequent use of old material may indicate weak retention enforcement or absent review cycles.- Access anomalies: unusual permission denials or empty result sets for known content may expose identity, connector, or classification problems.

At that point, search becomes a diagnostic surface for governance quality. Teams can use live behavior to refine taxonomy, ownership, lifecycle rules, and access design instead of relying on one-time cleanup efforts.

The most effective order of operations

Organizations usually see better results when they sequence governance work in a practical order rather than tackle every control at once.

- Govern access first: preserve source permissions, apply classification labels, and identify exposed sensitive content.

- Improve content quality next: fix ownership gaps, remove redundant records, and repair weak metadata.

- Add business context after that: standardize taxonomy, connect entities across systems, and enrich content with role, team, and project data.

- Apply lifecycle and compliance controls: enforce review windows, retention rules, archival policy, and audit requirements.

- Use search analytics to refine the framework: let query failures, citation issues, freshness problems, and access anomalies expose the next set of governance fixes.

That sequence makes AI search optimization practical in the enterprise. The search layer gains cleaner inputs, stronger rank signals, clearer policy boundaries, and more reliable source evidence — all of which reduce avoidable error across search, assistants, and agents.

Frequently Asked Questions

Once governance and search sit inside the same operating model, the practical questions change. Teams stop asking whether governance matters and start asking how it shapes retrieval, answer quality, and production risk.

What is data governance and why is it important for AI?

In an AI context, data governance is the discipline that assigns decision rights around enterprise information. It defines who approves data use, what quality threshold a source must meet, how lineage is recorded, which content states exist — draft, approved, retired — and how exceptions move through review.

That structure matters because AI systems need more than raw access to documents. They need traceable inputs, known provenance, and a clear record of source validity at the time of retrieval. Without that, teams cannot reliably explain why an answer appeared, whether the source met policy, or which version of a record shaped the response. In RAG systems, weak provenance also makes evaluation harder; low-confidence answers often trace back to poorly governed source sets rather than model behavior alone.

How does data governance impact AI-powered search functionality?

Governance affects search at the document-processing layer as much as the policy layer. Standard field definitions, document states, and content labels help the system parse files correctly, split content into useful chunks, attach the right metadata, and assemble cleaner citations. That improves retrieval for direct lookup and for synthesized answers.

It also helps the system resolve ambiguity. In large enterprises, the same acronym may point to a product, a team, a cost center, or a project code. Governance gives search the context needed to disambiguate those terms through approved taxonomy, source labels, and relationship data. The result is better source selection, cleaner query expansion, and fewer cases where the system retrieves a plausible but wrong answer path.

What role does data quality play in AI search outcomes?

Data quality affects more than relevance. It shapes how well the system can parse, segment, and interpret enterprise content in the first place. A malformed PDF, an orphaned wiki page, a missing timestamp, or a mislabeled policy can weaken chunk quality, distort embeddings, and confuse source attribution even before ranking begins.

Several quality issues create outsized search problems:

- Ambiguous entity names: Shared acronyms and inconsistent naming conventions make it harder to connect the right people, projects, and records.

- Version conflicts: Multiple live versions of the same document can push the system toward mixed evidence and unstable answers.

- Incomplete structured fields: Missing dates, owners, status markers, or department tags reduce filter accuracy and hinder authority assessment.

- Low-value text extraction: Broken formatting, image-only files, and noisy OCR output degrade semantic interpretation and citation precision.

High-quality enterprise content gives AI search cleaner units of meaning. That supports stronger embeddings, more stable retrieval, and more reliable grounding across search, chat, and workflow use cases.

What are the best practices for implementing data governance in AI systems?

The strongest programs treat governance as an operating system for AI rather than a policy archive. That means clear stewardship, enforceable ingestion rules, and a remediation process for content that fails review. The work usually starts with high-value domains such as HR policy, customer support knowledge, engineering runbooks, and security documentation — areas where search errors carry operational cost.

A practical implementation model usually includes these elements:

- Create an authoritative source register: List which repositories count as system-of-record sources for each domain, and define which document states may enter AI retrieval.

- Set ingestion standards: Require minimum fields, approved templates, parse quality checks, and source identifiers before content enters the index.

- Establish exception workflows: Route policy conflicts, sensitive-content findings, and low-quality files to named stewards instead of leaving them in the retrieval pool.

- Capture provenance at answer time: Store source version, retrieval context, and policy state so teams can inspect why an answer appeared.

- Evaluate with production-style queries: Test retrieval and generated answers against real employee tasks, not only benchmark prompts, and track failure modes by source type.

- Use remediation SLAs: When search exposes taxonomy gaps, stale content, or broken source records, assign deadlines and ownership for correction.

This approach works best when paired with search architecture that can use exact match, semantic context, and relationship signals together. AI search in the enterprise must handle both precise lookups and broad task-oriented questions; governance helps each retrieval mode use the right evidence.

How can organizations ensure compliance in AI data governance?

Compliance becomes durable when policy moves from static documentation into system behavior. That requires policy-as-code where possible: content classes that trigger handling rules, lineage records that survive transformations, and retrieval controls that apply before an answer forms rather than after review.

Operationally, that usually means four layers of control:

- Regulatory mapping: Tie legal requirements to content classes, jurisdictions, and business processes so the system knows which rules apply to which data.

- Evidence capture: Preserve logs for classification decisions, source updates, retrieval events, policy exceptions, and answer provenance.

- Use restrictions for model processing: Apply contractual and technical controls that limit retention and prohibit model training on enterprise prompts or retrieved content where required.

- Action governance for agents: Define approval thresholds, execution scopes, and audit rules for systems that can send messages, update records, or trigger workflows from retrieved information.

This matters most in environments where AI does more than answer questions. Once a system can draft a customer reply, update a ticket, or surface regulated records across departments, compliance depends on traceability, purpose limits, and enforceable controls at each step of the chain.

Data governance is not a prerequisite you finish before deploying AI search — it is a continuous practice that improves alongside it. The organizations seeing the strongest results treat governance and search as one system, where better data produces better answers and search behavior reveals the next governance priority.

If you're ready to see how this works in practice, request a demo to explore how we can help AI transform your workplace.