How to choose scalable data solutions for growing volumes

Every enterprise reaches a point where the data infrastructure that worked at one scale starts to buckle under the weight of the next. The symptoms show up in familiar ways: slow queries, stale dashboards, brittle pipelines, and employees who can't find what they need across a growing sprawl of applications.

Choosing a scalable data solution is one of the most consequential decisions a growing organization can make — and one of the most misunderstood. Too often, the evaluation centers on raw capacity when the real challenge is whether people, systems, and AI can still use the data effectively as volume compounds.

This guide breaks down what scalable data solutions actually require, how to evaluate whether a platform will hold up as data sources multiply, and the practical steps that separate infrastructure that grows from infrastructure that just gets bigger.

What is a scalable data solution?

A scalable data solution is a system built to handle rising data volume, velocity, and complexity without degrading performance, access, or governance. The right choice does more than add storage capacity. It keeps data usable, secure, permission-aware, and ready for search, analytics, and AI as the organization evolves.

The distinction matters because scale in enterprise environments rarely fails at the obvious places. It fails at the seams — disconnected tools, stale indexes, brittle ETL pipelines, and inconsistent access controls that fracture the experience for end users. A company might have petabytes of cloud storage and still struggle to deliver a timely answer to a support engineer or surface the right document for a sales team. Data architecture best practices matter as much as infrastructure size; a larger system is not automatically a better one.

What true scalability demands

Genuine scalability extends across several dimensions simultaneously, not just raw capacity:

- Users and roles: The system must serve engineering, support, sales, HR, and IT teams without performance cliffs as headcount grows. Permission models need to propagate cleanly across every source and every query.

- Data sources and formats: Enterprises typically operate across hundreds of SaaS applications, internal databases, and document repositories. A scalable solution must ingest structured, semi-structured, and unstructured data — and keep it connected through a unified index or knowledge graph.

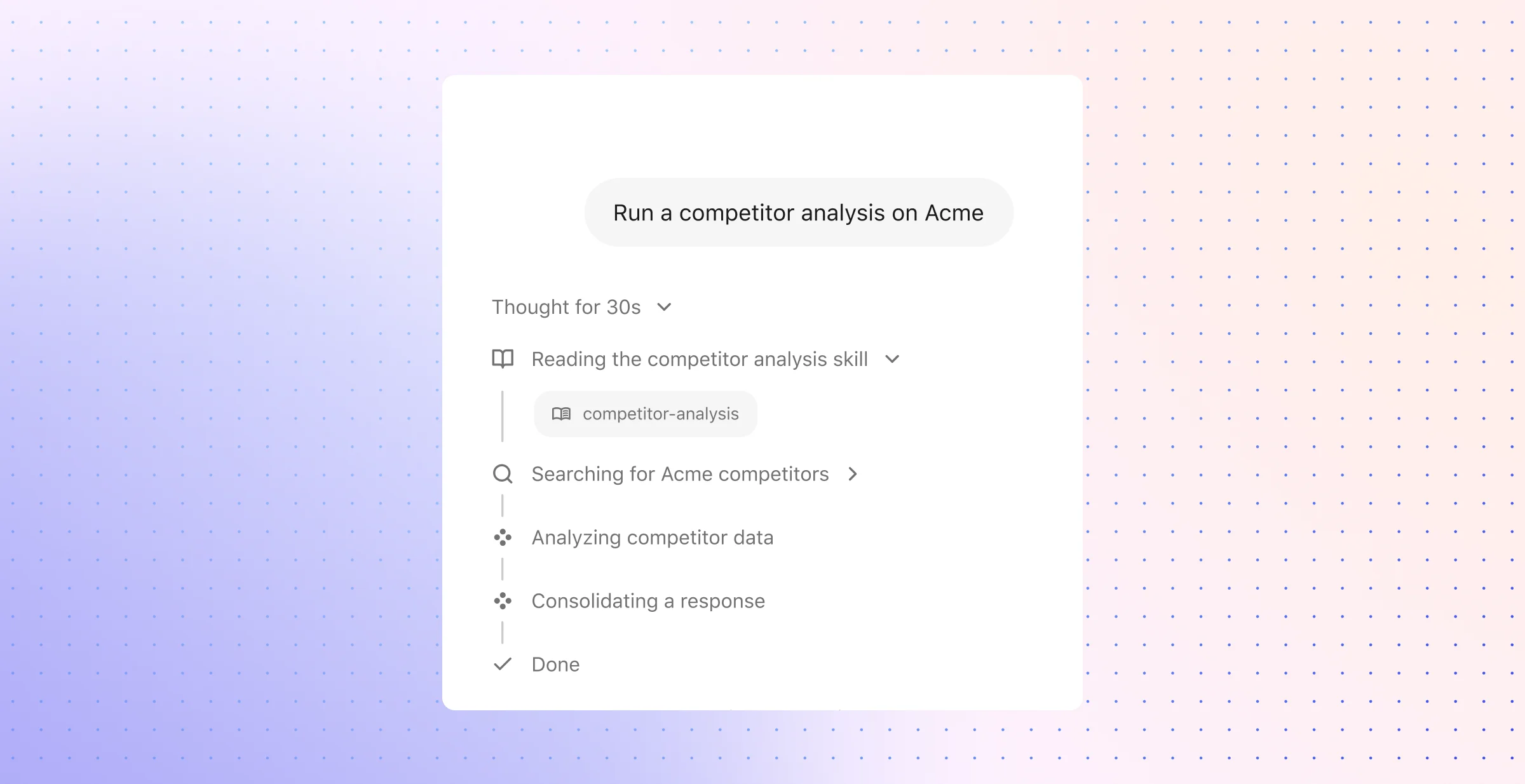

- Workflows and use cases: What starts as enterprise search often expands into AI-assisted answers, automated workflows, and agentic reasoning. The underlying data layer must support that progression without requiring a new platform at each stage.

- Governance and freshness: As data volumes grow, so does the risk of stale, duplicated, or improperly permissioned content. Scalable systems maintain continuous crawling, indexing, and permission enforcement — not periodic snapshots that drift out of sync.

Teams evaluating scalable data solutions are usually trying to answer a practical question: will this still work when our data sources multiply, our employees need faster answers, and AI becomes part of daily operations? The evaluation that answers that question well follows a clear sequence: requirements first, then architecture, then ingestion design, then governance, then proof under real load, and finally the cost of change. Each layer builds on the one before it, and skipping any of them is how organizations end up with systems that look scalable on paper but collapse in practice.

How to ensure a chosen solution will scale with growing data volumes

The safest choice is the one that preserves service levels after three kinds of change: more data, more concurrent demand, and more operational disruption. Storage capacity matters, but recovery time, freshness targets, admin effort, and policy enforcement decide whether the system remains usable at scale.

A disciplined review starts with hard thresholds, not feature claims. Set target numbers for ingest rate, query concurrency, retention horizon, recovery window, and acceptable cost growth; then examine whether the platform can meet those numbers through ordinary growth, source expansion, and infrastructure change.
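
One way to keep those thresholds honest is to write them down as data and check measured results against them. The sketch below is a minimal illustration, not a prescribed tool: the `Threshold` class, the metric names, and every target number are hypothetical placeholders to be replaced with your own figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """One hard target; higher_is_better sets the comparison direction."""
    name: str
    target: float
    higher_is_better: bool


def failed_thresholds(thresholds, measured):
    """Return the names of thresholds that the measured values miss."""
    failures = []
    for t in thresholds:
        value = measured.get(t.name)
        if value is None:
            failures.append(t.name)  # an unmeasured target counts as a miss
        elif t.higher_is_better and value < t.target:
            failures.append(t.name)
        elif not t.higher_is_better and value > t.target:
            failures.append(t.name)
    return failures


# Hypothetical targets, for illustration only.
THRESHOLDS = [
    Threshold("ingest_events_per_sec", 50_000, higher_is_better=True),
    Threshold("concurrent_queries", 400, higher_is_better=True),
    Threshold("recovery_minutes", 30, higher_is_better=False),
    Threshold("cost_growth_pct_per_quarter", 10, higher_is_better=False),
]

# Hypothetical results from a vendor trial; cost growth was never measured.
measured = {
    "ingest_events_per_sec": 62_000,
    "concurrent_queries": 350,
    "recovery_minutes": 45,
}

print(failed_thresholds(THRESHOLDS, measured))
# prints: ['concurrent_queries', 'recovery_minutes', 'cost_growth_pct_per_quarter']
```

Treating a missing measurement as a failure is a deliberate choice here: a target nobody tested is not a target the platform has met.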

Use a six-part evaluation sequence

1. Define the next stage of demand in exact terms: Forecast monthly data growth, peak events per second, daily document adds, user concurrency, retention rules, and regional residency requirements. Include workload shifts such as real-time dashboards, support escalations, model prompts, audit requests, and quarter-end reporting so the design reflects actual business pressure rather than average load.

2. Inspect the scale model inside the architecture: Check how the platform adds capacity: larger nodes, more nodes, or both. Favor systems with clear support for partitioning, replicas, compute-storage separation, and workload isolation so analytics jobs do not choke live application traffic.

3. Stress the ingest path early: Review whether the system supports parallel extraction, Change Data Capture, queue buffers for burst traffic, bulk-load paths, and retry controls that prevent duplicate writes. A platform that handles steady flow but breaks on backfill, replay, or source spikes will create risk long before storage runs out.

4. Review policy controls as part of data flow: Examine identity sync, group updates, retention controls, audit logs, legal holds, deletion workflows, and lineage records. Governance at scale depends on durable policy mechanics, not manual cleanup after data lands.

5. Run pressure tests against failure states: Simulate hot partitions, node loss, API throttling, cross-region latency, index rebuilds, and partial source outages. Measure backlog growth, retry success, cutover time, and the operator work required to return the system to a clean state.

6. Calculate the cost of change: Ask what happens when a source changes schema, a region needs local storage, or a database estate needs migration under load. A system that cannot absorb upgrades, source swaps, and tenant growth without long projects will raise operating cost each quarter.

This method gives buyers a clearer view than a feature matrix. Most scale failures appear after launch — during reorgs, source migrations, policy changes, or burst traffic that exposes weak assumptions in the original design.

Test operational scale, not lab scale

Benchmark numbers from a clean environment rarely capture what enterprise systems face in production. API rate limits, uneven key distribution, delayed retries, partial sync failures, and stale tombstones create the kind of friction that no idealized load test will show.

Operational visibility deserves the same scrutiny as throughput. The platform should expose queue depth, watermark age, failed sync counts, replay status, index lag, cache behavior, and the exact source of an access mismatch so teams can isolate faults without a long incident trail.

Use test cases that mirror real operational strain:

- Connector expansion: Add several new business systems in a short window; then inspect sync completeness, metadata coverage, identity mapping, and admin effort per source.

- Schema drift: Change field types, add nested attributes, and remove deprecated columns; then verify that pipelines, catalogs, and downstream tables stay intact.

- Freshness windows: Push burst updates into high-volume sources; then measure how fast those changes appear in retrieval, reporting, and automation flows.

- Access-policy updates: Run a department reorg or role review; then check whether nested groups, revoked access, and inherited controls update without lag.

- Migration and recovery: Cut over large numbers of database instances or restore from a partial outage; then compare downtime, validation effort, and rollback safety.

A platform that passes those tests has a far better chance of holding up during ordinary enterprise change. The point is not raw speed in isolation; the point is predictable behavior when the environment shifts.
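
The schema drift test in particular is easy to automate. As a rough sketch, assuming each schema can be flattened into a plain mapping of field name to type name (the `diff_schema` helper and the sample fields are hypothetical), a before-and-after comparison might look like this:

```python
def diff_schema(expected, observed):
    """Compare two {field: type} mappings and report drift by category."""
    added = sorted(set(observed) - set(expected))
    removed = sorted(set(expected) - set(observed))
    retyped = sorted(
        name
        for name in set(expected) & set(observed)
        if expected[name] != observed[name]
    )
    return {"added": added, "removed": removed, "retyped": retyped}


# Hypothetical schemas: a column is dropped, a type changes,
# and a nested attribute appears (flattened with a dotted name).
expected = {"id": "int", "email": "string", "legacy_code": "string", "amount": "int"}
observed = {"id": "int", "email": "string", "amount": "decimal", "address.city": "string"}

print(diff_schema(expected, observed))
# prints: {'added': ['address.city'], 'removed': ['legacy_code'], 'retyped': ['amount']}
```

A report like this only detects drift. Whether pipelines, catalogs, and downstream tables survive it is what the test itself has to establish.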

Look closely at connectors, context, and permission fidelity

Source adapters often look interchangeable in a demo and behave very differently in production. Some pull little more than titles and body text; stronger ones carry ownership data, timestamps, comments, group membership, deletions, ACLs, and other source signals that downstream systems need for accurate retrieval and policy enforcement.

The review should get specific fast. Ask how each connector handles delta syncs, revoked access, nested groups, private channels, deleted records, API throttling, and source-side rate caps. Weak handling in any of those areas leads to ghost content, stale records, broken lineage, and costly cleanup work later.

Context quality matters here as much as source coverage. Search, analytics, and AI perform better when the system captures who created the content, who used it, what changed, when it changed, and how it relates to other entities in the business. Thin metadata produces flat results; rich metadata supports stronger ranking, cleaner retrieval, and safer automation.
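
To make permission fidelity concrete, the sketch below shows the two behaviors a connector review should probe: nested groups resolved transitively, and deleted or revoked content disappearing from results. It is a simplified illustration; the data shapes, the `expand_groups` and `visible_documents` helpers, and the sample names are all assumptions rather than any product's API.

```python
def expand_groups(user, direct_groups, parent_groups):
    """Return every group the user belongs to, following nesting transitively."""
    seen = set()
    stack = list(direct_groups.get(user, ()))
    while stack:
        group = stack.pop()
        if group in seen:
            continue  # guards against cycles in group nesting
        seen.add(group)
        stack.extend(parent_groups.get(group, ()))
    return seen


def visible_documents(user, documents, direct_groups, parent_groups):
    """Keep only live documents whose ACL names the user or one of their groups."""
    principals = expand_groups(user, direct_groups, parent_groups) | {user}
    return [
        doc["id"]
        for doc in documents
        if not doc.get("deleted") and principals & set(doc["acl"])
    ]


# Hypothetical identity data: parent_groups maps a group to the groups containing it.
direct_groups = {"ana": ["emea-support"]}
parent_groups = {"emea-support": ["support"], "support": ["all-staff"]}
documents = [
    {"id": "runbook", "acl": ["support"]},
    {"id": "payroll", "acl": ["finance"]},
    {"id": "old-faq", "acl": ["all-staff"], "deleted": True},
    {"id": "draft", "acl": ["ana"]},
]

print(visible_documents("ana", documents, direct_groups, parent_groups))
# prints: ['runbook', 'draft']
```

Removing `ana` from `emea-support` and rerunning the filter should leave only `draft` visible. A connector that cannot reproduce that result shortly after the source system changes is the one that produces ghost content.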

Favor adaptability over raw size

A scalable platform should age well under maintenance. Version upgrades, storage-tier shifts, cloud-account changes, source replacements, and regional expansion should fit into normal operations rather than trigger a long rewrite.

This is where migration design becomes a real selection criterion. Ask how the system handles cutover plans, dual-write periods, validation checks, rollback paths, and phased moves for large database estates. A product that looks stable until the first major migration may not scale in any practical sense.

The strongest designs keep change local. Transactional systems serve live writes, analytics systems handle heavy scans, stream layers absorb event flow, and governance spans all of them through shared identity, lineage, and policy controls. That structure reduces blast radius when one layer changes and keeps future architecture decisions from turning into full-stack rebuilds.
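
Validation checks during a cutover can start very simply. The following sketch compares a source and target table by row count plus an order-independent digest; it assumes rows arrive as tuples whose `repr` is stable on both sides, which a real migration would replace with a canonical serialization. The `table_fingerprint` name is hypothetical.

```python
import hashlib

_MODULUS = 2 ** 256


def table_fingerprint(rows):
    """Return (row_count, digest) for an iterable of row tuples, ignoring order."""
    count = 0
    combined = 0
    for row in rows:
        digest = hashlib.sha256(repr(row).encode("utf-8")).digest()
        # Summing per-row hashes keeps the result independent of row order
        # while still counting duplicate rows.
        combined = (combined + int.from_bytes(digest, "big")) % _MODULUS
        count += 1
    return count, f"{combined:064x}"


source = [(1, "ana", 120.5), (2, "ben", 80.0)]
target_reordered = [(2, "ben", 80.0), (1, "ana", 120.5)]
target_corrupted = [(1, "ana", 120.5), (2, "ben", 8.0)]

print(table_fingerprint(source) == table_fingerprint(target_reordered))  # prints: True
print(table_fingerprint(source) == table_fingerprint(target_corrupted))  # prints: False
```

A matching fingerprint is evidence, not proof, that a phase of the move landed cleanly; it is cheap enough to run after every phase of a staged migration.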

Frequently Asked Questions

These questions usually come up after the first round of vendor conversations, when the discussion shifts from broad platform claims to practical tradeoffs. At that point, the useful questions become narrower: fit, limits, operating cost, and how much strain the system can absorb before everyday work slows down.

1. What factors should I consider when choosing a scalable data solution?

Start with the workload. A platform that handles transactional records well may struggle with long analytical scans; a system built for event streams may not suit document-heavy knowledge workflows. The right choice depends on the shape of the data, the speed at which it arrives, the kinds of queries teams run, and how much latency the business can tolerate.

A strong evaluation usually comes down to seven checks:

- Growth profile: Model the next 12 to 36 months in terms of records, files, events, users, and query volume. A system that looks comfortable at today's size can become costly or unstable once growth compounds.

- Workload fit: Separate operational traffic from analytical traffic. Order processing, dashboards, log search, and AI retrieval rarely belong on the same serving path.

- Scaling model: Determine whether the platform expands by adding stronger machines, more nodes, or both. Long-term growth usually favors systems that distribute load cleanly across partitions or services.

- Data model fit: Structured tables, semi-structured JSON, documents, messages, and media all behave differently at scale. Choose a system that matches the dominant format instead of forcing everything into one shape.

- Latency and consistency needs: Some workflows need millisecond reads and strict transactions; others can tolerate slight delay in exchange for lower cost and greater throughput.

- Operating model: Consider who will run it. A managed service can reduce maintenance overhead, but that convenience has to be weighed against configurability, portability, and long-term dependency.

- Total cost curve: Look beyond storage price. Compute spikes, data transfer, backup, failover, and administrative time often reshape the economics after launch.

The key test is durability of fit. The platform should remain useful after new teams, new data classes, and new decision paths enter the picture.
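
The growth profile check is mostly compound arithmetic, and it is worth running the numbers explicitly. The sketch below assumes a constant monthly growth rate, which real estates rarely follow exactly; the 100 TB starting point, 5% rate, and 200 TB capacity are hypothetical inputs.

```python
def project_volume(current_tb, monthly_growth_rate, months):
    """Compound the current volume forward at a fixed monthly growth rate."""
    return current_tb * (1 + monthly_growth_rate) ** months


def months_until(current_tb, monthly_growth_rate, capacity_tb):
    """Count whole months until projected volume first exceeds capacity."""
    if monthly_growth_rate <= 0:
        raise ValueError("growth rate must be positive")
    months = 0
    volume = current_tb
    while volume <= capacity_tb:
        volume *= 1 + monthly_growth_rate
        months += 1
    return months


print(round(project_volume(100, 0.05, 12)))  # prints: 180
print(round(project_volume(100, 0.05, 36)))  # prints: 579
print(months_until(100, 0.05, 200))          # prints: 15
```

Under these assumptions a system that is comfortable at 100 TB needs to hold nearly six times that within three years, which is why the 12-to-36-month horizon matters more than today's footprint.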

2. How can I assess the scalability of my current data infrastructure?

A useful assessment begins with evidence, not assumptions. Pull 30 to 60 days of operational data and map where the system slows down, where queues build, where retries rise, and where users feel the drag. A platform can look healthy in infrastructure dashboards while business teams still deal with late reports, lagging indexes, or failed refresh cycles.

After that baseline, compare your current environment against realistic alternatives under the same conditions. The most reliable way to do that is with a small scorecard that tracks technical behavior and operational effort side by side.

A practical assessment should include:

- Performance under normal load: Track p95 and p99 response times, ingest backlog, job duration, and data availability windows during ordinary business traffic.

- Behavior under surge: Recreate the pressure points that matter to your business — month-end reporting, a bulk import, a large migration wave, or a burst of customer activity.

- Recovery discipline: Measure how long it takes to restore service after a failed sync, a malformed feed, or a source outage; do not rely on backup promises alone.

- Change tolerance: Test how the system behaves when a source adds fields, changes formats, or expands sharply in row count.

- Operational effort: Record how much manual intervention the team needs for replay, rollback, access reviews, and routine maintenance.

The best assessments expose bottlenecks that architectural diagrams hide. They also show whether the current stack fails because of product limits, weak configuration, or a mismatch between the system and the workload it now carries.
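
For the p95 and p99 figures in that scorecard, a nearest-rank percentile is enough to get started. This is a minimal sketch with hypothetical latency samples; monitoring tools may use interpolated definitions that give slightly different numbers.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical response times in milliseconds: mostly fast, with a slow tail.
latencies_ms = [120] * 97 + [2400] * 3

print(percentile(latencies_ms, 95), percentile(latencies_ms, 99))
# prints: 120 2400
```

The example shows why both numbers belong on the scorecard: p95 looks healthy while p99 exposes the slow tail that users actually complain about.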

3. What are the best practices for designing a scalable ETL system?

A scalable ETL system needs clear separation between extraction, transformation, and delivery so each stage can expand or recover without dragging the rest of the pipeline down. It also needs a bias toward smaller, repeatable updates instead of heavyweight full refreshes that consume time, compute, and source-system capacity.

Several design choices make a measurable difference:

- Use incremental movement wherever possible: Change data capture, watermark-based loads, and replay-safe writes reduce source strain and shorten recovery windows.

- Partition with intent: Split work by date, tenant, region, or another stable key so the pipeline can process data in parallel without hotspots.

- Write through bulk paths: Large loads should use native bulk interfaces such as Snowflake COPY INTO or BigQuery batch load jobs, not row-by-row inserts.

- Preserve business context: Source identifiers, event times, ownership fields, and transformation history should move with the data so downstream systems can interpret it correctly.

- Build for replay: Checkpointing, dead-letter handling, and idempotent writes make it possible to rerun failed segments without corruption or duplication.

- Keep migration risk low: ETL architecture should support phased cutovers and side-by-side validation; tools such as Google Cloud Database Migration Service illustrate why controlled migration matters as much as raw throughput.

Good ETL system design supports scale because it limits blast radius. When one source changes or one stage fails, the team should not need a full pipeline freeze to regain control.
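
The incremental-movement and replay practices above can be sketched together in a few lines. The example uses Python's built-in `sqlite3` module as a stand-in target; the `target` and `watermark` tables, the integer `updated_at` column, and the `sync_increment` function are assumptions made for illustration, not a reference design.

```python
import sqlite3


def sync_increment(source_rows, conn):
    """Copy rows newer than the stored watermark; safe to rerun after a failure."""
    (watermark,) = conn.execute("SELECT value FROM watermark").fetchone()
    batch = [row for row in source_rows if row["updated_at"] > watermark]
    if not batch:
        return 0
    with conn:  # one transaction: the writes and the watermark advance commit together
        conn.executemany(
            # Keyed replace makes the write idempotent: replaying a row overwrites it.
            "INSERT OR REPLACE INTO target (id, payload, updated_at) "
            "VALUES (:id, :payload, :updated_at)",
            batch,
        )
        conn.execute(
            "UPDATE watermark SET value = ?",
            (max(row["updated_at"] for row in batch),),
        )
    return len(batch)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE target (id INTEGER PRIMARY KEY, payload TEXT, updated_at INTEGER)")
conn.execute("CREATE TABLE watermark (value INTEGER NOT NULL)")
conn.execute("INSERT INTO watermark VALUES (0)")

source = [
    {"id": 1, "payload": "a", "updated_at": 10},
    {"id": 2, "payload": "b", "updated_at": 20},
]

first = sync_increment(source, conn)
replay = sync_increment(source, conn)  # nothing new, so nothing is rewritten
print(first, replay)
# prints: 2 0
```

Because the watermark comparison is strictly greater-than, rows that share a timestamp with the last loaded row and arrive late would be skipped. Production pipelines usually handle that with an overlap window or a sequence number, which is out of scope for this sketch.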

4. How do cloud-based solutions enhance data scalability?

Cloud platforms improve scalability by making storage and compute easier to add, remove, and isolate. That flexibility matters when demand is uneven — for example, when ingestion spikes at the end of the quarter, analytics jobs crowd the warehouse in the morning, and customer-facing systems need steady response times all day.

The advantage comes from operating characteristics more than location alone:

- Elastic capacity: Teams can add compute for heavy transforms or large query windows without long procurement cycles.

- Fast provisioning: New environments, replicas, and recovery instances can come online far faster than in most fixed-capacity setups.

- Tiered storage: Object storage and archival classes make it easier to keep raw and historical data without treating every byte as high-performance data.

- Geographic distribution: Cloud regions help place data and compute closer to teams, systems, or regulatory boundaries.

- Managed resilience: Backup orchestration, failover options, and service-level tooling reduce the operational burden on internal teams.

That said, cloud storage scalability does not correct a poor data model, weak partition design, or undisciplined lifecycle management. The cloud gives teams more room to scale; it does not decide what should scale, when it should scale, or what the resulting bill will look like.

5. What common pitfalls should I avoid when scaling data systems?

The most expensive mistakes usually come from oversimplification. Teams assume scale means more storage, pick a platform that looks broad enough, and then discover that query patterns, write bursts, retention rules, and cost controls push the system in conflicting directions.

Several pitfalls show up repeatedly in growing environments:

- One system for every job: Transaction processing, search, reporting, and historical analysis often need different storage and compute patterns. Forcing them together creates contention.

- Vertical-only thinking: Bigger machines can delay redesign, but they rarely solve long-term growth on their own.

- Poor partition choices: A weak shard or partition key creates hotspots, uneven performance, and expensive rebalancing later.

- Heavy analytics on production stores: Long scans and large joins can degrade core application performance in ways users notice immediately.

- No retention or archival policy: Keeping all data in the hottest tier inflates cost and slows retrieval.

- Manual monitoring: Human checks do not keep pace with modern data flow. Teams need automated alerts, health signals, and clear failure states.

- Migration blind spots: Systems that look stable in steady state can become fragile during upgrades, platform moves, or region expansion.

- Hidden cost exposure: Egress fees, cross-region transfer, backup storage, and emergency compute often matter more than the headline storage rate.

A data foundation scales more cleanly when each layer has a clear job, a clear failure mode, and a clear path to change. That discipline prevents growth from turning into a chain of one-off fixes.
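
The partition-key pitfall is one of the few that can be checked before any data moves. The sketch below hashes candidate keys into a fixed number of partitions and reports how overloaded the busiest one is. The eight-partition layout, the key names, and the 90% single-tenant traffic mix are hypothetical.

```python
import hashlib
from collections import Counter


def partition_for(key, partitions):
    """Map a key to a partition with a stable hash (not Python's salted hash())."""
    digest = hashlib.sha256(str(key).encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % partitions


def skew_ratio(keys, partitions):
    """Busiest partition's load divided by the mean load; 1.0 is perfectly even."""
    counts = Counter(partition_for(key, partitions) for key in keys)
    mean = len(keys) / partitions
    return max(counts.values()) / mean


# Hypothetical event stream: one tenant produces 90% of the traffic.
tenant_keys = ["tenant-a"] * 9000 + [f"tenant-{i}" for i in range(1000)]
event_keys = [f"event-{i}" for i in range(10000)]

print(f"keyed by tenant: {skew_ratio(tenant_keys, 8):.1f}x the mean load")
print(f"keyed by event:  {skew_ratio(event_keys, 8):.1f}x the mean load")
```

Keying by tenant sends every `tenant-a` event to the same partition, so that partition carries at least 7.2 times the average load, while keying by event id spreads the same volume almost evenly. The trade-off is that event-level keys scatter a tenant's data, so the right key depends on the query pattern as well as the write pattern.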

Scalable data infrastructure isn't a one-time decision — it's a discipline that compounds in value every time your organization adds a new source, a new team, or a new way of working. The organizations that get this right treat scalability as an operating principle, not a checkbox.
