How to implement AI sales assistants for better customer interactions

Sales teams in complex B2B environments face a persistent challenge: the gap between what a rep knows from memory and what a buyer needs to hear in the moment. That gap leads to hesitation, vague follow-ups, and lost credibility — all of which slow deals and erode customer confidence.

An AI sales assistant closes that gap by putting trusted company knowledge directly into the flow of a live conversation. Rather than force reps to search across tabs or defer to a colleague, the right assistant surfaces accurate answers, relevant context, and clear next steps exactly when they matter most.

This guide walks through a practical implementation framework — from defining high-value call moments to scaling across sales motions — so enterprise teams can deploy AI sales assistants that genuinely improve customer interactions, not just add another tool to the stack.

What is an AI sales assistant?

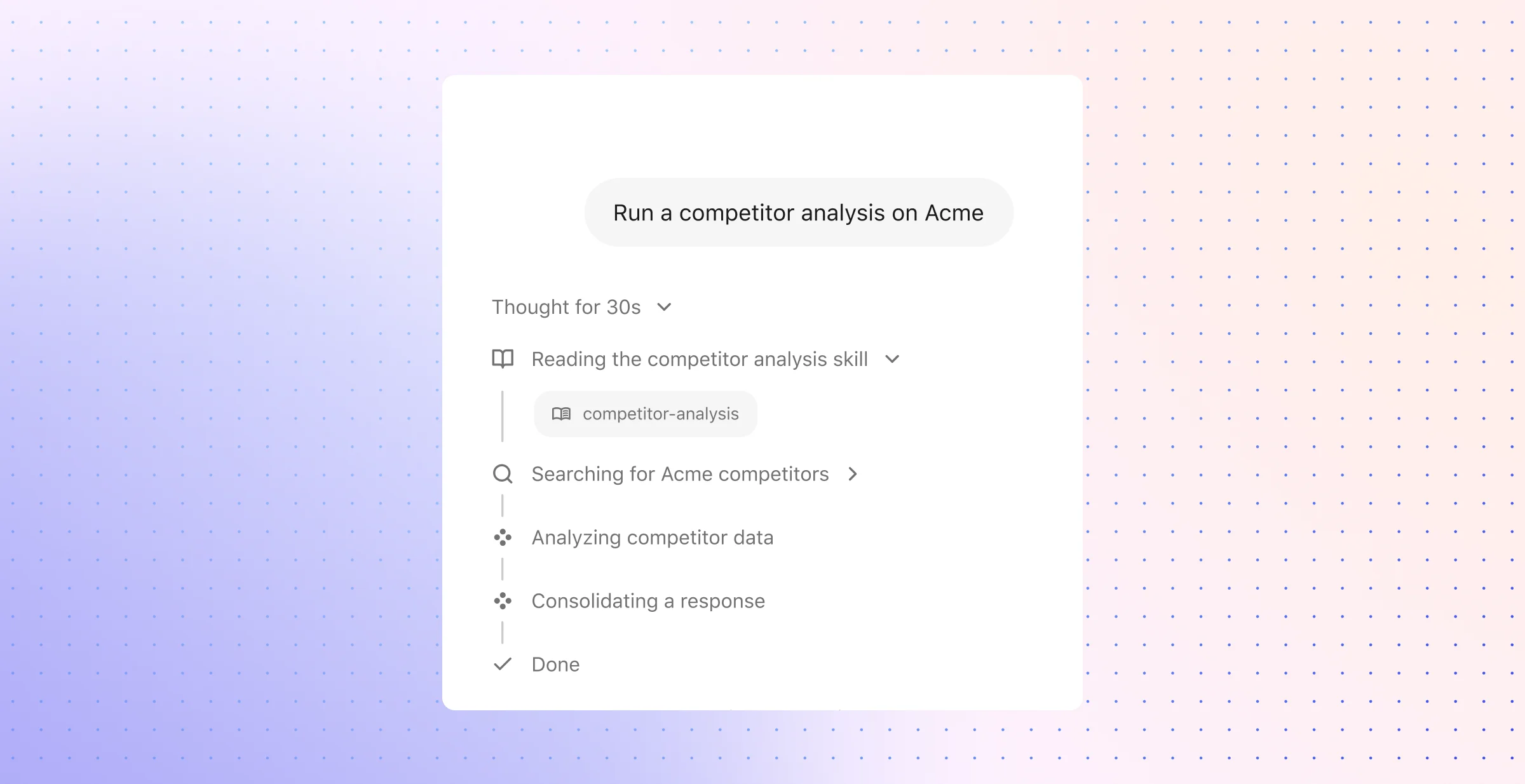

An AI sales assistant is a conversational agent that supports reps during customer calls by retrieving trusted answers, relevant account context, and next-step guidance in real time. It sits at the intersection of enterprise search, natural language understanding, and workflow automation — pulling from CRM records, product documentation, pricing policies, support history, and internal knowledge bases to deliver the right information at the right moment. The assistant does not replace the rep. It amplifies their ability to respond with speed, accuracy, and confidence while the buyer is still on the line.

The practical value becomes clear when you consider what reps actually struggle with during live conversations. Technical product questions, pricing clarifications, competitor comparisons, security reviews, and next-step alignment are all moments where a rep either has the answer or loses momentum. An AI sales assistant handles these moments by retrieving grounded, permission-aware responses from connected company systems — not by generating speculative answers from a general-purpose model. That distinction matters. Enterprise buyers expect precision, and a single inaccurate claim about compliance, integration capabilities, or contract terms can derail a deal.

Where an AI sales assistant delivers the most value

The strongest use cases sit where sales productivity and customer experience overlap:

- Real-time product and pricing retrieval: Reps get instant access to current feature details, plan comparisons, and approved pricing guidance without leaving the conversation.

- Objection handling: The assistant surfaces approved talk tracks, proof points, and customer stories tied to common objections, such as price sensitivity, competitor claims, implementation concerns, or timing hesitations.

- Account context at a glance: Prior interactions, open support tickets, renewal timelines, and stakeholder history appear automatically so reps never ask a buyer to repeat themselves.

- Post-call automation: Meeting summaries, CRM updates, follow-up drafts, and action items flow directly from the conversation into the systems of record, substantially reducing manual after-call work.

- Sales coaching in the moment: Instead of waiting for a manager review days later, reps receive in-call guidance on discovery questions, competitive positioning, and next-best actions.

For enterprise teams, the goal is not to introduce another disconnected sales tool. The most effective AI sales assistants operate as a single secure layer across the systems reps already trust — CRM, knowledge bases, communication platforms, and internal wikis. That connected architecture is what separates a useful assistant from a novelty chatbot. When the assistant draws from live, permissioned company knowledge rather than a static copy or a generic training set, reps can rely on it during high-stakes moments without second-guessing the source.

This also means the assistant must respect organizational boundaries. Pricing exceptions visible only to senior sellers, internal competitive intelligence restricted to specific teams, and pre-release product details under NDA all require permission-aware retrieval at response time. An assistant that ignores those boundaries creates more risk than it eliminates — a reality that enterprise sales, legal, and security leaders weigh heavily before adoption.

How to implement AI sales assistants for better customer interactions

Start with the points in a sales conversation where momentum breaks. In most teams, those points look the same: a rep hunts for a security answer, hesitates on a pricing nuance, misses a proof point, or leaves the call with a vague list of manual follow-up tasks.

That is why the implementation target should be operational, not abstract. A strong rollout improves one concrete workflow at a time by unifying live retrieval, grounded response support, post-call execution, and policy controls inside the tools reps already use.

Design for the full call lifecycle

Treat prep, live conversation support, and follow-up as three separate jobs. Each job needs a different form of assistance, and the assistant should match that sequence rather than force one generic chat interface onto the entire sales process.

- Before the call: Build a short brief from the systems of record — account stage, recent emails, open support issues, prior call notes, stakeholder changes, and any unresolved commercial questions. This gives the rep a usable snapshot before the meeting starts.

- During the call: Surface short, usable guidance that fits the pace of a live discussion. Good support includes a direct answer, the source behind it, and a clear fallback path when the topic requires a specialist or approval.

- After the call: Convert the conversation into structured output — CRM fields, recap notes, task creation, follow-up drafts, and handoff details for solution engineering, legal, or customer success.

This approach keeps the assistant disciplined. Not every sales problem belongs in the middle of a call, and not every answer should rely on a live prompt. Strong implementation removes prep work and admin work around the call so the in-call experience can stay fast, selective, and useful.
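One way to keep the three jobs separate is to give each phase its own output type instead of one generic chat reply. The sketch below is a minimal illustration that assumes context arrives as a plain dictionary; `CallPhase`, `PreCallBrief`, `LiveHint`, `PostCallRecord`, and `assist` are hypothetical names, not a product API.

```python
from dataclasses import dataclass, field
from enum import Enum


class CallPhase(Enum):
    BEFORE = "before"
    DURING = "during"
    AFTER = "after"


@dataclass
class PreCallBrief:                 # hypothetical: snapshot built from systems of record
    account_stage: str
    open_issues: list[str]
    unresolved_questions: list[str]


@dataclass
class LiveHint:                     # hypothetical: short guidance for a live call
    answer: str                     # direct answer the rep can use
    source: str                     # where the answer came from
    fallback: str                   # what to do if a specialist or approval is needed


@dataclass
class PostCallRecord:               # hypothetical: structured output for write-back
    crm_fields: dict[str, str]
    tasks: list[str] = field(default_factory=list)


def assist(phase: CallPhase, context: dict):
    """Return a phase-specific output type rather than a generic chat reply."""
    if phase is CallPhase.BEFORE:
        return PreCallBrief(
            account_stage=context.get("stage", "unknown"),
            open_issues=context.get("open_tickets", []),
            unresolved_questions=context.get("open_questions", []),
        )
    if phase is CallPhase.DURING:
        return LiveHint(
            answer=context["answer"],
            source=context["source"],
            fallback=context.get("fallback", "Route to a specialist"),
        )
    return PostCallRecord(
        crm_fields={"next_step": context.get("next_step", "")},
        tasks=context.get("action_items", []),
    )
```

Distinct output types make it harder for one phase to leak into another: the live hint has no room for a long brief, and the post-call record is shaped for write-back rather than for reading aloud.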

Blend retrieval, generation, automation, and governance

Many projects stall because teams treat the assistant as a thin layer over a language model. The durable version looks more like an operating system for sales knowledge: one part finds the right material, one part shapes that material into rep-ready output, one part moves the work forward, and one part enforces boundaries.

- Retrieval: Pull from CRM data, product notes, security documentation, pricing policies, support history, and internal playbooks. The system should rank useful evidence across short snippets, long documents, ticket comments, and account records.

- Response composition: Turn that evidence into concise language a rep can use on the spot. The output should stay tied to approved sources so the rep can verify high-stakes answers quickly.

- Workflow execution: Write back to the business systems after the conversation. That may include note capture, task creation, approval routing, or a draft email with the right context already in place.

- Governance: Apply topic-level controls before scale. Commercial concessions, roadmap details, legal language, and compliance claims need tighter rules than general product guidance.

This is where implementation quality matters more than model novelty. A newer model will not fix stale source material, weak ranking, poor permissions design, or a lack of write-back into core workflows. The assistant becomes reliable only when fresh data, response discipline, and operational controls work together.
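The four parts can be sketched as separate functions so each one can be tested and replaced on its own. This is a toy illustration under stated assumptions: retrieval is naive keyword overlap, governance runs before composition and simply drops restricted topics, composition quotes the top snippet with its source, and execution appends to an in-memory task list. `Snippet`, `RESTRICTED_TOPICS`, and the function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    text: str
    source: str
    topic: str


# Hypothetical governance rule: topics that must never reach the composer.
RESTRICTED_TOPICS = {"roadmap", "legal"}


def retrieve(question: str, corpus: list[Snippet], k: int = 3) -> list[Snippet]:
    """Rank snippets by shared words with the question (a stand-in for real ranking)."""
    words = set(question.lower().split())
    scored = [(len(words & set(s.text.lower().split())), s) for s in corpus]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [s for _, s in scored[:k]]


def govern(evidence: list[Snippet]) -> list[Snippet]:
    """Drop evidence on restricted topics before any answer is composed."""
    return [s for s in evidence if s.topic not in RESTRICTED_TOPICS]


def compose(evidence: list[Snippet]) -> str:
    """Turn the best remaining snippet into a short reply tied to its source."""
    if not evidence:
        return "No approved answer found; route to a specialist."
    top = evidence[0]
    return f"{top.text} (source: {top.source})"


def execute(answer: str, tasks: list[str]) -> None:
    """Queue a follow-up task; a real system would write back to the CRM instead."""
    tasks.append(f"Follow up: {answer}")


def answer_question(question: str, corpus: list[Snippet], tasks: list[str]) -> str:
    answer = compose(govern(retrieve(question, corpus)))
    execute(answer, tasks)
    return answer
```

Because each stage is a plain function, a better ranker or a stricter policy can replace one part without touching the others.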

Keep phase one narrow and valuable

The first rollout should focus on one sales motion where answer quality and speed affect deal progress in an obvious way. Good candidates include discovery calls for a complex product, renewal conversations with adoption and support context, or late-stage meetings where commercial and technical objections appear in clusters.

- Discovery for complex deals: Reps often need fast access to integration details, implementation expectations, and product fit signals. This workflow tests whether the assistant can reduce stalls without overwhelming the rep.

- Commercial calls with repeat objections: Price pressure, competitor comparisons, procurement friction, and timeline concerns tend to follow recognizable patterns. This makes the workflow ideal for concise objection support and approved talk tracks.

- Renewal and expansion calls: These conversations depend on customer history, support trends, usage issues, and internal stakeholder context. A connected assistant can assemble that picture far faster than a rep can across separate systems.

- Post-call execution for high-volume teams: When managers care about CRM quality, next-step discipline, and follow-up speed, this workflow delivers measurable value quickly.

A narrow pilot also produces better evidence. Teams can compare pre-launch and post-launch performance on question deferral rates, time spent across internal systems, recap turnaround, next-step clarity, and manager edits to follow-up notes. That kind of measurement shows whether the assistant improves the work itself, not just whether reps clicked on it.
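A before-and-after comparison on two of these measures can be as simple as computing them for each period. The sketch assumes each sampled call has already been annotated with questions asked, questions deferred, and recap turnaround in minutes; `CallSample` and its fields are hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class CallSample:                   # hypothetical annotation for one reviewed call
    questions_asked: int
    questions_deferred: int         # e.g. "let me confirm and get back to you"
    recap_minutes: float            # time from call end to recap sent


def deferral_rate(calls: list[CallSample]) -> float:
    """Share of buyer questions the rep deferred instead of answering on the call."""
    asked = sum(c.questions_asked for c in calls)
    deferred = sum(c.questions_deferred for c in calls)
    return deferred / asked if asked else 0.0


def compare(before: list[CallSample], after: list[CallSample]) -> dict[str, float]:
    """Report each metric for both periods so the baseline stays visible."""
    return {
        "deferral_rate_before": deferral_rate(before),
        "deferral_rate_after": deferral_rate(after),
        "median_recap_minutes_before": median(c.recap_minutes for c in before),
        "median_recap_minutes_after": median(c.recap_minutes for c in after),
    }
```

Reporting both periods side by side, rather than a single percentage change, keeps the pre-launch baseline in view when a manager reviews the result. The median is used for turnaround so one very late recap does not dominate.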

1. Define the call moments where reps need help most

The first step is a call-moment inventory. Instead of a broad goal such as better rep productivity, isolate the exact exchanges where a rep pauses, defers, or gives an answer that lacks enough detail for the buyer to move forward.

Build that inventory from evidence, not assumption. Pull a sample of discovery calls, demos, renewal reviews, and qualification calls; note the points where a rep opens a second system, asks to confirm details later, or shifts to a safe but generic answer. In most teams, those moments cluster around a small set of recurring buyer needs. That pattern gives the assistant a clear job.

Start with the moments that break flow

Most sales conversations do not fail because of one dramatic mistake. They slow down through small but costly misses — a detail the rep cannot verify fast enough, a proof point that stays buried in another system, or a process question that turns a live discussion into a later email chain.

A practical map usually includes a handful of high-value moments:

- Fact-check moments: Buyers ask for exact details that sit outside normal recall — support scope, audit-log access, implementation sequence, contract dates, regional data controls, or pilot terms.

- Decision-path moments: The buyer wants clarity on approvals, stakeholders, paperwork, or expected timing across procurement, security, and legal review.

- Proof moments: The rep needs the right customer story, deployment example, ROI point, or internal expert note to support a claim with something concrete.

- Recovery moments: A prior support issue, an earlier failed rollout, or an internal concern enters the conversation and the rep needs context before trust slips.

- Commitment moments: The buyer needs a specific next move — technical validation, executive review, questionnaire handoff, or proposal review — and soft language will stall progress.

This approach keeps the assistant useful from day one. A strong first rollout does not need to cover every sales task; it needs to handle the few call moments that most often shape buyer confidence and rep fluency.
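A call-moment inventory can start as a tally of annotated moments from sampled calls, using the five moment types above as labels. The sketch assumes reviewers record each moment as a `(call_id, moment)` pair; that annotation format and the `rank_moments` helper are hypothetical.

```python
from collections import Counter
from enum import Enum


class Moment(Enum):
    FACT_CHECK = "fact-check"
    DECISION_PATH = "decision-path"
    PROOF = "proof"
    RECOVERY = "recovery"
    COMMITMENT = "commitment"


def rank_moments(annotations: list[tuple[str, Moment]]) -> list[tuple[Moment, int]]:
    """Count how many distinct calls contain each moment type, most common first.

    Counting distinct calls rather than raw occurrences keeps one long call
    from dominating the inventory.
    """
    per_call = {(call_id, moment) for call_id, moment in annotations}
    counts = Counter(moment for _, moment in per_call)
    return counts.most_common()
```

The top entries of the ranked list are natural candidates for the first rollout.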

Separate frequent issues from sensitive issues

Some requests appear on almost every call and fit a fast-answer pattern. Others carry legal, commercial, or reputational weight and need a narrower response path. Treat those groups differently at the start, or the assistant will either hold back on routine questions or overreach on topics that require tighter control.

A question about standard onboarding steps or the usual meeting cadence can support a short in-call response. A question about non-standard terms, service credits, data residency exceptions, or future-release availability should pull from approved language, source checks, or a named specialist. The point is not only speed; it is dependable precision at the right level of risk.

Decide what belongs before, during, and after the call

Live support works best when each task sits in the right part of the workflow. Some tasks prepare the rep before the conversation starts, some support the rep in the moment, and some remove admin once the call ends.

- Before the call: Surface account changes, recent product usage, open renewal risk, stakeholder notes, and prior objections. This gives the rep a sharper starting point and reduces avoidable surprises.

- On the call: Offer short responses, approved proof points, customer examples, and next-question suggestions that fit the pace of a live discussion. The rep should see the signal first, with extra depth only when needed.

- After the call: Create summaries, suggested follow-up language, CRM fields, action items, and handoff notes for sales engineering, support, or success. This is where automation removes the most low-value work.

Frontline teams should shape this map. Managers can point to stage-level problems such as low conversion or slow follow-up; reps can point to the exact sentence that forces a pause, the objection that needs a stronger answer, or the handoff that creates confusion.

Success should reflect the buyer experience too. Better interactions show up as fewer restarts, fewer “I need to verify that” detours, cleaner team transitions, and clearer ownership after the call.

2. Connect the assistant to the systems reps already trust

A clear use-case map only matters once the assistant can assemble the right record set in seconds. That work starts with source selection, field mapping, and content hygiene — not with prompt tweaks.

In most sales organizations, no single system holds a complete answer. A rep may need account history from the CRM, implementation detail from technical docs, approval rules from finance, security language from a review library, and prior issue context from service records; the assistant should pull those threads together inside one result.

Start with the sources reps already use

Begin with a source inventory rather than a feature list. The goal is not broad access for its own sake; the goal is answer quality in live customer moments.

- CRM objects and fields: Account ownership, stage history, stakeholder roles, prior meeting notes, renewal dates, and open tasks give the assistant the commercial shape of the deal.

- Product and deployment content: Release notes, setup guides, integration requirements, implementation checklists, and architecture references help with technical buyer questions that demand precision.

- Commercial policy records: Packaging rules, approval paths, discount matrices, quote guidance, and procurement steps keep the assistant aligned with how the business actually sells.

- Conversation records: Call transcripts, email threads, and meeting recaps preserve continuity across touchpoints so reps do not lose context from one interaction to the next.

- Service and onboarding systems: Prior escalations, unresolved cases, onboarding blockers, and customer health notes add the operational detail that often shapes a late-stage conversation.

Source breadth changes the usefulness of the answer. A buyer question about rollout timing, for example, may depend on product dependencies, prior support history, and the current account plan at the same time; a narrow connection set will miss that context and leave the rep with a half-answer.

Avoid static copies that drift from the business

Many teams try to simplify deployment with manual exports, duplicate folders, or one-time content uploads. That shortcut creates quiet failure: SKU names change, implementation steps shift, approval rules update, and security answers age out long before anyone notices.

A better pattern uses direct connectors, a regular sync cadence, and rich metadata. Document owner, publish date, approval state, audience, product family, region, and content type all help the assistant rank the right source higher than an old draft or an informal note. This is also where naming discipline matters. When one team uses a launch name, another uses an internal code, and a third uses a legacy label, retrieval quality drops even when the right document exists.
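Metadata such as approval state and publish date can feed directly into ranking. The sketch below multiplies a base relevance score by an approval weight and a freshness decay; the weights, the 180-day half-life, and the `Doc` fields are illustrative assumptions, not tuned values.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Doc:
    title: str
    relevance: float          # base score from the search engine, assumed 0..1
    approval_state: str       # "approved", "draft", or "informal"
    published: date


# Hypothetical weights: approved content outranks drafts and informal notes.
APPROVAL_WEIGHT = {"approved": 1.0, "draft": 0.5, "informal": 0.3}
HALF_LIFE_DAYS = 180          # hypothetical: freshness halves every 180 days


def score(doc: Doc, today: date) -> float:
    age_days = max((today - doc.published).days, 0)
    freshness = 0.5 ** (age_days / HALF_LIFE_DAYS)
    # Unknown approval states get weight 0 so they never outrank labeled content.
    return doc.relevance * APPROVAL_WEIGHT.get(doc.approval_state, 0.0) * freshness


def rank(docs: list[Doc], today: date) -> list[Doc]:
    return sorted(docs, key=lambda d: score(d, today), reverse=True)
```

With these weights, a recent approved page beats an older draft even when the draft matches the query slightly better, which is the behavior the paragraph above asks for.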

Preserve permissions from the start

Access rules need design work before the pilot begins. Most enterprise environments have more than one permission model, and the assistant has to honor all of them at once.

- Repository access controls: Shared drives, wiki spaces, and document libraries often restrict content by team, region, or project.

- CRM role scope: Opportunity data, account notes, and forecast detail may vary by territory, manager level, or deal assignment.

- Field-level restrictions: Certain values — margin data, exception terms, private notes, or internal approvals — may require masking even when the parent record is visible.

- Temporary access paths: Deal teams change, specialists rotate in, and project access expires; the assistant should reflect those shifts without manual cleanup.

This level of control affects adoption as much as security. Reps trust the system more when it knows the difference between a public security FAQ, a restricted pricing note, and a private deal-desk comment from another region.
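The four permission models can be checked together each time a response is prepared. The sketch below is a simplified illustration with hypothetical types: a record is visible if the user's groups overlap its access list or the user holds an unexpired temporary grant, and restricted field values are masked before anything reaches the response composer. Passing `now` in explicitly means access is decided at request time rather than cached. Real deployments would defer to each source system's own access model.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass
class Record:
    record_id: str
    allowed_groups: set[str]        # repository ACL or CRM role scope
    fields: dict[str, str]
    # Field-level restrictions: field name -> groups allowed to see its value.
    restricted_fields: dict[str, set[str]] = field(default_factory=dict)


@dataclass
class User:
    groups: set[str]
    # Temporary access paths: record_id -> expiry time.
    temp_grants: dict[str, datetime] = field(default_factory=dict)


def can_see(user: User, record: Record, now: datetime) -> bool:
    if user.groups & record.allowed_groups:
        return True
    expiry = user.temp_grants.get(record.record_id)
    return expiry is not None and now < expiry


def visible_fields(user: User, record: Record, now: datetime) -> Optional[dict[str, str]]:
    """Return the record's fields with restricted values masked, or None if hidden."""
    if not can_see(user, record, now):
        return None
    result = {}
    for name, value in record.fields.items():
        allowed = record.restricted_fields.get(name)
        if allowed is not None and not (user.groups & allowed):
            result[name] = "[restricted]"
        else:
            result[name] = value
    return result
```

Note that a temporary grant here opens the record but not its restricted fields; whether that is the right default is a policy decision, not a technical one.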

Build for cross-functional context, not just the sales stack

Strong customer conversations depend on content that sales teams do not own. Product leaders define capability detail, finance defines commercial rules, security defines approved review responses, legal defines contract language, and support defines issue history. The assistant should retrieve across those domains with the same consistency a rep expects from the CRM.

That model also creates value beyond the call itself. The same knowledge foundation can support AI agents for customer service, onboarding teams, and renewal managers who need the same account context, policy answers, and source-backed guidance. In practice, this means each function needs clear content owners, review cycles, and approval markers so the assistant knows which material counts as current and customer-safe.

3. Prepare trustworthy knowledge for live use

Once the assistant has access to the right systems, the next constraint is editorial quality. Live sales support breaks down less often because of model capability than because of messy source material — duplicate answers, unlabeled drafts, buried policy changes, and outdated field guidance.

The fix is not a larger knowledge base. It is a tighter one: approved, current, and structured for fast retrieval under call pressure. Before launch, teams need a call-ready content set that reflects how buyers ask questions and how reps need to respond in the moment.

Convert source material into call-ready assets

Most internal content was never written for a live customer conversation. Product pages assume time to read; legal docs assume expert review; internal notes assume shared context. A sales assistant needs something else — compact assets that answer one clear question with language a rep can use immediately.

That often means repackaging existing material into more precise formats. Long narrative pages should become short answer blocks, comparison tables, implementation checklists, approval matrices, and objection-response cards. A security overview, for example, is far more useful in live use when it includes discrete fields such as authentication methods, encryption standards, data residency options, and review process for customer questionnaires.

A practical content set usually includes:

- Capability fact sheets: Clear answers on what a product does, what it does not do, and which plan or package includes it.

- Implementation snapshots: Standard deployment assumptions, common dependencies, typical handoff points, and known constraints.

- Commercial rule cards: Standard pricing logic, discount boundaries, quote escalation paths, and procurement milestones.

- Proof libraries: Approved customer examples sorted by industry, use case, company size, and measurable outcome.

- Competitive response briefs: Short, approved positioning notes that explain how to respond without overclaiming.

Make retrieval precise, not approximate

A rep can recover from a slow answer. It is harder to recover from a confusing one. Retrieval quality improves when content uses consistent labels, stable structure, and explicit metadata instead of team-specific shorthand.

This is where taxonomy matters. Product names, packaging labels, feature aliases, security terms, and commercial approval terms should follow one company standard. Synonyms still have value, but they belong in metadata, keyword fields, and redirect logic — not as competing terms spread across dozens of documents. That approach lets the assistant resolve a buyer’s phrasing to the correct internal reference without surfacing fragmented answers.
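Keeping synonyms in metadata can be as simple as an alias table that maps buyer phrasing, internal codes, and legacy labels to one canonical name before retrieval runs. The product names below are invented for illustration, and `normalize_query` is a hypothetical helper.

```python
import re

# Hypothetical alias table: every alternative label points to one canonical name.
ALIASES = {
    "project falcon": "Insights Pro",     # internal code name
    "analytics plus": "Insights Pro",     # legacy label
    "insights pro": "Insights Pro",
    "sso": "Single sign-on",
    "single sign on": "Single sign-on",
}


def normalize_query(query: str) -> str:
    """Replace known aliases with canonical terms, trying longer aliases first."""
    result = query
    for alias in sorted(ALIASES, key=len, reverse=True):
        # Word boundaries stop "sso" from matching inside unrelated words.
        pattern = r"\b" + re.escape(alias) + r"\b"
        result = re.sub(pattern, ALIASES[alias], result, flags=re.IGNORECASE)
    return result
```

For example, `normalize_query("Does Project Falcon support SSO?")` returns `"Does Insights Pro support Single sign-on?"`, so retrieval searches for the terms the approved documents actually use.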

Document shape matters just as much. Strong live-use content tends to share a few traits:

- One question per section: Each block should answer a single customer issue, not three related ones.

- Front-loaded facts: Put the exact answer first; place nuance, exceptions, and edge cases after it.

- Structured fields: Use tables, short headers, and labeled sections such as “Supported,” “Not supported,” “Approval required,” and “Escalation path.”

- Visible source markers: Show the originating document, owner, and last verified date so reps can judge reliability at a glance.
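These traits map naturally onto a structured record. The sketch below models one answer block with the labeled sections above and renders the exact answer first, followed by the source markers; the `AnswerCard` type, its fields, and the sample content are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class AnswerCard:                                 # hypothetical: one question per card
    question: str
    answer: str                                   # the exact answer, shown first
    supported: list[str] = field(default_factory=list)
    not_supported: list[str] = field(default_factory=list)
    approval_required: list[str] = field(default_factory=list)
    escalation_path: str = ""
    source_doc: str = ""
    owner: str = ""
    last_verified: Optional[date] = None

    def render(self) -> str:
        lines = [self.answer]                     # front-loaded fact
        for label, items in (
            ("Supported", self.supported),
            ("Not supported", self.not_supported),
            ("Approval required", self.approval_required),
        ):
            if items:                             # skip empty sections
                lines.append(f"{label}: {', '.join(items)}")
        if self.escalation_path:
            lines.append(f"Escalation path: {self.escalation_path}")
        verified = self.last_verified.isoformat() if self.last_verified else "unverified"
        lines.append(f"Source: {self.source_doc} | Owner: {self.owner} | Verified: {verified}")
        return "\n".join(lines)
```

Empty sections are skipped so the rendered card stays short, while the source line always appears last.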

Separate official guidance from working material

Not every internal document deserves a place in a live assistant. Draft launch notes, team brainstorms, unreviewed battle cards, and personal deal notes can help a human team internally, but they create noise in a retrieval workflow. The assistant needs a clear distinction between reference content that is fit for customer-facing use and material that is still under review.

A simple publication model helps. Teams can classify content by status — approved for live use, internal reference only, draft, retired — and apply stricter review rules to higher-risk topics. Legal clauses, compliance language, pricing exceptions, and security statements should carry version history, named ownership, and explicit expiration or review dates. Informal notes should not compete with them in the same retrieval path.

Staleness needs active control as well. Old package names, retired implementation steps, superseded procurement instructions, and outdated case studies all reduce trust in quiet ways. Before rollout, content owners should archive obsolete files, merge overlapping pages, and remove materials that no longer reflect current policy or product reality. In customer interaction tools, speed only helps when the answer still holds up under scrutiny.
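The publication model and staleness control can be enforced as one eligibility check before content enters the live retrieval path. The status names below come from the paragraph above; the `ContentItem` type and the review-by field are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Status(Enum):
    APPROVED_FOR_LIVE = "approved for live use"
    INTERNAL_ONLY = "internal reference only"
    DRAFT = "draft"
    RETIRED = "retired"


@dataclass
class ContentItem:                      # hypothetical content record
    title: str
    status: Status
    owner: str
    review_by: date


def live_eligible(items: list[ContentItem], today: date) -> list[ContentItem]:
    """Keep only approved, owned content whose review date has not passed."""
    return [
        item for item in items
        if item.status is Status.APPROVED_FOR_LIVE
        and item.owner                  # named ownership is required
        and today <= item.review_by
    ]


def needs_review(items: list[ContentItem], today: date) -> list[str]:
    """List approved items that dropped out of the live path because they went stale."""
    return [
        item.title for item in items
        if item.status is Status.APPROVED_FOR_LIVE and today > item.review_by
    ]
```

Running `needs_review` on a schedule gives content owners a concrete queue instead of relying on someone to notice an outdated answer during a call.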

4. Design the in-call experience around speed, clarity, and control

Once the knowledge layer is in place, the real design work begins at the rep’s screen. A live call leaves only a few seconds for the assistant to prove useful, so every prompt needs a clear job and a clear place in the workflow.

That usually means a compact interface with low-latency retrieval, visible source cues, and predictable behavior. Reps should know what the assistant will show on its own, what requires a prompt, and what will route to a person or a follow-up task instead of trying to answer on the spot.

Make proactive guidance selective

A strong in-call experience does not flood the rep with every possible signal. It watches for a small set of triggers and surfaces only the items that change the conversation in a meaningful way.

Useful proactive cards often include:

- Call context at open: meeting objective, attendee roles, account health, and recent changes that affect the discussion.

- Risk alerts during the conversation: an active service issue, a pending renewal decision, or an unresolved commercial question that should shape the rep’s language.

- Relevant proof on trigger: a case study, implementation note, or security response that appears only when the buyer raises that topic.

- Suggested follow-through: a proposal draft, specialist handoff, or procurement step that appears when the call reaches a decision point.

Everything else should stay one step away in a search bar, side panel, or expandable drawer. That design protects focus and keeps the assistant from turning into another feed that competes with the actual conversation.
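Selective proactive guidance can be modeled as a small trigger table: a card appears only when its trigger topic has been detected, cards already shown are not repeated, and the number of visible cards is capped. The trigger names, the cap of two, and the priority scheme are assumptions for illustration; detecting topics from a live transcript is outside the scope of this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    title: str
    trigger: str          # topic that must be detected before the card appears
    priority: int         # lower number = more important


MAX_VISIBLE = 2           # hypothetical cap to protect the rep's focus


def cards_to_show(detected_topics: set[str], cards: list[Card],
                  already_shown: set[str]) -> list[Card]:
    """Surface triggered cards not yet shown, most important first, up to the cap."""
    eligible = [
        c for c in cards
        if c.trigger in detected_topics and c.title not in already_shown
    ]
    eligible.sort(key=lambda c: c.priority)
    return eligible[:MAX_VISIBLE]
```

Everything that does not clear the trigger and the cap stays available through search, which matches the "one step away" design above.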

Shape objection prompts for live delivery

Objection support needs to fit spoken conversation, not document review. The most effective prompts read like a quick coaching note: short response, relevant evidence, then a smart question that helps the rep move the discussion forward.

For common objection types, that structure works well:

- Budget pressure: a tight value statement, one metric or outcome, then a question that reframes the discussion around total business impact.

- Decision delay: a response tied to the buyer’s stated deadline, current initiative, or operational cost of waiting.

- Technical doubt: a plain-language explanation pulled from approved product or implementation material, followed by the right validation step.

- Competitive pressure: a factual differentiator and a matched customer example instead of a generic comparison chart.

This is where prompt design matters. The system should return language that a seller can actually use in conversation — not a paragraph that sounds polished in a document and awkward out loud.

Build depth behind a simple first view

Buyers often ask layered questions. A rep may need a fast answer first, then more detail only when the conversation moves deeper into security, pricing structure, or deployment specifics.

A three-part response format works especially well in that setting:

- A speakable first line: something the rep can say immediately without editing.

- A verification layer: the exact excerpt, document section, or approved note behind the response.

- An execution option: send the source, open a follow-up task, or pull in the right specialist.

This model keeps the surface area small while still supporting complex conversations. It also gives managers a clean way to review whether the assistant helped with accuracy, timing, and call progression rather than just how much text it generated.
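The three-part format can be represented as one response object that refuses to exist without a verifiable excerpt and keeps the first line short enough to say aloud. The 25-word limit, the action names, and the `RepResponse` type are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    SEND_SOURCE = "send source"
    CREATE_TASK = "open follow-up task"
    ADD_SPECIALIST = "pull in specialist"


MAX_SPEAKABLE_WORDS = 25     # hypothetical limit for a line a rep can say at once


@dataclass
class RepResponse:
    speakable: str           # first view: something the rep can say as-is
    excerpt: str             # verification layer: exact supporting text
    source_section: str      # where the excerpt lives
    actions: list[Action]    # execution options

    def __post_init__(self) -> None:
        if len(self.speakable.split()) > MAX_SPEAKABLE_WORDS:
            raise ValueError("speakable line is too long to say aloud")
        if not self.excerpt:
            raise ValueError("every response needs a verifiable excerpt")

    def first_view(self) -> str:
        """What the rep sees before expanding anything."""
        return self.speakable
```

Validating at construction time means an overlong or unsourced suggestion fails before it reaches the rep's screen, rather than being caught in a later review.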

Preserve seller judgment without hiding the machine

Control matters as much as speed. The seller should decide whether to use a suggested response, ignore it, or ask for a different angle based on the buyer’s tone, the deal stage, and the relationship in the room.

That is why the best systems expose their reasoning in practical ways: source labels, confidence cues, and clear fallback states when the answer needs review. Many teams start with one narrow in-call workflow — such as live objection prompts or technical answer retrieval — because that makes it easier to tune latency, validate prompt quality, and learn what the sales team will actually use under pressure.

5. Set guardrails before you scale

As the assistant moves closer to live deal work, policy needs to move closer to the point of use. Enterprise sales calls carry real exposure — commercial, legal, security, and reputational — so the system needs a clear operating model before broader rollout.

That model should answer a practical set of questions: which topics allow a standard response, which require a prescribed workflow, and which should trigger a handoff with no generated answer at all. Precision here removes ambiguity for reps, reduces policy drift across teams, and keeps customer-facing guidance consistent under pressure.

Turn policy into decision rules

Guardrails work best when they show up as explicit decision logic inside the product, not as a separate training deck. The assistant should follow a topic policy matrix that maps common call scenarios to a defined response path.

- Standard-response topics: Questions with stable, approved language — such as general product capabilities, standard deployment steps, or documented integration patterns. The system can answer these directly in a concise format.

- Workflow-bound topics: Questions that require a controlled sequence — such as discount approvals, security questionnaires, procurement steps, or competitive claims. Here, the assistant should guide the rep through the right workflow, surface the required material, and keep the response within approved bounds.

- No-answer topics: Questions that cross a hard line — such as legal interpretation, unapproved commitments, or account-specific concessions outside policy. In these cases, the assistant should stop short, capture context, and route the issue to the correct owner.

This structure matters because many risky moments do not sound risky at first. A buyer may ask for a “quick confirmation” on service terms, implementation timelines, or exception handling. Without explicit controls, a helpful assistant can drift into language that sounds official but carries no internal approval.
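A topic policy matrix can be encoded as a lookup from topic to response path, with unknown topics defaulting to the most restrictive path. The topic labels below are examples taken from the list above; the `Path` enum and `POLICY` table are hypothetical, and classifying a live question into a topic is assumed to happen upstream.

```python
from enum import Enum


class Path(Enum):
    STANDARD = "answer directly"
    WORKFLOW = "guide through approved workflow"
    NO_ANSWER = "capture context and route to owner"


# Hypothetical policy matrix; in practice owned by enablement, legal, and security.
POLICY = {
    "product_capabilities": Path.STANDARD,
    "deployment_steps": Path.STANDARD,
    "integration_patterns": Path.STANDARD,
    "discount_approval": Path.WORKFLOW,
    "security_questionnaire": Path.WORKFLOW,
    "procurement": Path.WORKFLOW,
    "competitive_claims": Path.WORKFLOW,
    "legal_interpretation": Path.NO_ANSWER,
    "unapproved_commitment": Path.NO_ANSWER,
    "out_of_policy_concession": Path.NO_ANSWER,
}


def response_path(topic: str) -> Path:
    """Unclassified topics fall back to NO_ANSWER rather than a generated reply."""
    return POLICY.get(topic, Path.NO_ANSWER)
```

Defaulting to the most restrictive path means a question that sounds routine but has never been classified, such as a "quick confirmation" on service terms, still routes to a person.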

Constrain output where risk is highest

Not every answer needs the same degree of flexibility. For sensitive topics, the assistant should rely on response templates, approved snippets, field-level data, and deterministic actions rather than broad synthesis.

That is especially important in a few categories:

- Commercial policy: Discount bands, approval thresholds, and non-standard packaging should map to current deal policy, not conversational guesswork.

- Security and compliance: The assistant should surface approved statements, certifications, and review paths in a fixed format that sales, security, and procurement teams already recognize.

- Roadmap and availability: Product plans should follow internal release policy and approved external language, with no room for improvised commitments.

- Competitive positioning: Rebuttals should stay factual, current, and consistent with approved messaging rather than slip into unsupported comparison.

This is where high-performing teams separate speed from improvisation. The assistant can still move fast, but the path should narrow as topic sensitivity rises.
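For commercial policy, a deterministic lookup keeps the assistant from improvising. The sketch maps a requested discount to the approval level it needs; the band boundaries and approver names are invented for illustration and would come from current deal policy in practice.

```python
from bisect import bisect_left

# Hypothetical discount policy: (inclusive upper bound in percent, required approver).
BANDS = [
    (10.0, "rep"),
    (20.0, "sales manager"),
    (30.0, "deal desk"),
]


def required_approver(discount_pct: float) -> str:
    """Return who must approve a discount; anything above the top band is out of policy."""
    if discount_pct < 0:
        raise ValueError("discount cannot be negative")
    bounds = [upper for upper, _ in BANDS]
    # bisect_left finds the first band whose upper bound is >= the request.
    index = bisect_left(bounds, discount_pct)
    if index == len(BANDS):
        return "out of policy: route to deal desk lead"
    return BANDS[index][1]
```

The same answer comes back every time for the same request, which is exactly the property a generated answer cannot guarantee.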

Treat access as a live control, not a one-time setup

Enterprise systems change every day — territories shift, approvals expire, account teams rotate, and confidential documents move behind new controls. The assistant should evaluate access at the moment it prepares a response, not rely on yesterday’s visibility rules.

That requirement extends beyond document access. It should include field-level account data, temporary pricing guidance, restricted sales notes, and internal briefings tied to specific teams or motions. A rep should see the answer appropriate to their role and deal context at that moment, no more and no less.

Design fallback behavior with intent

A strong assistant does not try to rescue every edge case with polished language. When confidence drops, policy conflicts appear, or source material falls out of date, the system should follow a defined fallback path.

A practical fallback design usually includes three layers:

1. Clarify: Ask for the missing detail that would narrow the request to an approved path.

2. Route: Send the issue to the right team with account context, transcript excerpt, and next-step urgency attached.

3. Contain: Limit the visible output to neutral language that avoids an accidental promise or claim.

This pattern keeps the rep moving without forcing them to decide, in real time, whether the assistant has crossed a line.
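The three layers can be sketched as an ordered check: clarify when a required detail is missing, and route with contained, neutral wording when confidence is low, policy conflicts, or sources are stale. The 0.7 confidence threshold, the neutral line, and the `Draft` and `Outcome` types are assumptions for illustration.

```python
from dataclasses import dataclass, field

CONFIDENCE_FLOOR = 0.7    # hypothetical threshold below which no answer is shown
NEUTRAL_LINE = "Let me confirm that with the right team so you get an exact answer."


@dataclass
class Draft:
    answer: str
    confidence: float                     # assumed 0..1 score from the answering step
    missing_details: list[str] = field(default_factory=list)
    policy_conflict: bool = False
    source_stale: bool = False


@dataclass
class Outcome:
    shown_to_rep: str
    route_to: str = ""                    # owner that receives the routed issue, if any


def apply_fallback(draft: Draft, owner: str) -> Outcome:
    # 1. Clarify: prompt for the detail that would narrow the request.
    if draft.missing_details:
        return Outcome(shown_to_rep=f"Clarify first: ask for the {draft.missing_details[0]}.")
    # 2. Route: low confidence, a policy conflict, or stale sources go to a person.
    #    A real system would also attach account context and a transcript excerpt.
    if draft.confidence < CONFIDENCE_FLOOR or draft.policy_conflict or draft.source_stale:
        # 3. Contain: show only neutral wording that makes no promise or claim.
        return Outcome(shown_to_rep=NEUTRAL_LINE, route_to=owner)
    return Outcome(shown_to_rep=draft.answer)
```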

Make review part of normal operations

Guardrails need feedback, not just rules. Teams should review exception cases, blocked responses, policy-triggered handoffs, and prompt patterns that recur across calls. That review loop helps revenue operations, enablement, security, and legal teams tighten weak spots before they spread across more deals.

The most useful audit trail captures more than the final answer. It should show the topic classification, workflow path, policy checks, surfaced assets, handoff event, and rep action that followed. That record turns governance into something measurable: where the assistant stayed inside policy, where content gaps forced escalation, and where the sales process itself needs a cleaner rule.
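A sketch of an audit record that captures each element listed above, written as one JSON line per assisted moment. The schema and helper names are hypothetical; the `escalations_by_topic` helper shows how such a log turns into the "where content gaps forced escalation" view.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass
from typing import Iterable, List, Optional


@dataclass
class AuditRecord:
    """One assisted moment; field names mirror the list above but are hypothetical."""
    call_id: str
    topic: str                     # topic classification
    workflow_path: str             # e.g. "approved_only"
    policy_checks: List[str]       # e.g. ["discount_band:pass"]
    surfaced_assets: List[str]     # IDs of documents or answer cards shown
    handoff_event: Optional[str]   # receiving team, or None if no handoff
    rep_action: str                # "used", "edited", or "ignored"


def to_log_line(record: AuditRecord) -> str:
    """Serialize as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


def escalations_by_topic(records: Iterable[AuditRecord]) -> Counter:
    """Count handoffs per topic to show where content gaps force escalation."""
    return Counter(r.topic for r in records if r.handoff_event is not None)
```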

6. Pilot with one sales workflow and measurable goals

A pilot should answer a precise operational question: does the assistant improve one sales motion under real conditions, with real buyers, and with normal rep behavior? That requires a test design with tight scope, a clear comparison method, and evidence that holds up beyond a product demo.

Use a bounded cohort from the start — one manager, one segment, one workflow, one time window. A disciplined test gives you cleaner signal than a broad release because it separates product value from rollout noise, training gaps, and inconsistent usage.

Pick a workflow with repeatable pressure points

Choose a motion where the same decision pattern shows up often enough to study. The best pilot candidate usually sits at the point where reps must balance speed with precision — not because the call is generic, but because the stakes stay consistent from one conversation to the next.

That usually means a workflow such as:

- Security and technical validation calls: Buyers ask for exact answers on architecture, integrations, data handling, or deployment constraints; weak retrieval shows up fast.

- Competitive evaluation calls: Reps need approved differentiation, not improvised positioning; this makes output quality easy to review.

- Procurement and commercial review calls: Buyers want clarity on approvals, packaging logic, and buying process; the assistant either reduces friction or exposes content gaps.

- Multi-stakeholder recap calls: Reps must align technical, financial, and executive audiences in one conversation; this tests whether the assistant can surface context without clutter.

Set the baseline before the pilot starts

Before launch, define a control view and an assisted view. Compare the pilot group against either a similar team without the assistant or the same team across a fixed pre-launch period, with identical call types and review criteria.

Focus on measures that show whether the assistant improves execution quality, not just activity volume:

- Answer latency: Time from buyer question to usable rep response on knowledge-heavy topics.

- Citation use rate: How often reps open or rely on the source behind a surfaced answer before they respond.

- Override rate: How often reps reject, edit, or ignore the assistant's recommendation; a high rate often points to weak retrieval or poor timing.

- Escalation accuracy: Whether the assistant routes uncertain or high-risk topics to the right specialist rather than forcing a low-confidence answer.

- Next-step clarity score: Whether the call ends with a specific owner, date, and agreed action rather than a vague follow-up.
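These measures are simple to compute once reviewers tag each knowledge-heavy moment in a call. The sketch below assumes a hypothetical per-moment schema (`AssistedMoment`) and treats any rep action other than "accepted" as an override, matching the definition above.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List, Optional


@dataclass
class AssistedMoment:
    """One knowledge-heavy buyer question, tagged by a reviewer (hypothetical schema)."""
    question_ts: float      # seconds into the call when the buyer asked
    answer_ts: float        # when the rep gave a usable response
    citation_opened: bool   # rep opened the source behind the surfaced answer
    rep_action: str         # "accepted", "edited", "ignored", or "rejected"
    high_risk: bool         # reviewer says this topic needed a specialist
    routed_correctly: bool  # assistant sent it to the right specialist


def pilot_metrics(moments: List[AssistedMoment]) -> Dict[str, Optional[float]]:
    n = len(moments)
    risky = [m for m in moments if m.high_risk]
    return {
        "median_answer_latency_s": median([m.answer_ts - m.question_ts for m in moments]),
        "citation_use_rate": sum(m.citation_opened for m in moments) / n,
        # Override = anything other than accepting the recommendation as-is.
        "override_rate": sum(m.rep_action != "accepted" for m in moments) / n,
        "escalation_accuracy": (
            sum(m.routed_correctly for m in risky) / len(risky) if risky else None
        ),
    }


def next_step_clear(next_step: Dict[str, str]) -> bool:
    """A call ends clearly only with an owner, a date, and an agreed action."""
    return all(next_step.get(key) for key in ("owner", "date", "action"))
```

Only answer latency and next-step clarity exist in the control view, since the other measures depend on the assistant being present; those two are the natural head-to-head comparison between cohorts.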

A strong pilot also needs operational thresholds. Set acceptable limits for response time, source freshness, and surfaced-answer accuracy before the first call review. That makes it easier to judge whether a miss came from the model, the content, or the workflow design.
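Thresholds work best when written down as data before the pilot starts. In this sketch the metric names, limits, and the "first place to look" mapping (latency to workflow design, freshness to content, accuracy to the model) are hypothetical starting heuristics, not rules; a real miss still needs a transcript review to confirm its cause.

```python
from typing import Dict, List, Tuple

# Hypothetical limits agreed before the first call review:
# metric -> (direction, limit, first place to look when it is breached).
THRESHOLDS: Dict[str, Tuple[str, float, str]] = {
    "p90_response_ms": ("max", 1500, "workflow design"),
    "max_source_age_days": ("max", 90, "content"),
    "surfaced_answer_accuracy": ("min", 0.95, "model"),
}


def threshold_breaches(
    observed: Dict[str, float],
    thresholds: Dict[str, Tuple[str, float, str]] = THRESHOLDS,
) -> List[Tuple[str, float, str]]:
    """Return (metric, observed value, first place to look) for every breached limit."""
    breaches = []
    for metric, (direction, limit, owner) in thresholds.items():
        value = observed[metric]
        if (direction == "max" and value > limit) or (direction == "min" and value < limit):
            breaches.append((metric, value, owner))
    return breaches
```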

Measure trust, not just output

Adoption does not come from speed alone. Reps trust a system when it behaves predictably, shows its work, and stays quiet when it has nothing useful to add.

That is why pilot review should include a small quality rubric for every call sample: did the assistant surface the right source, did it appear at the right moment, did it stay within approved boundaries, and did the rep use it without breaking conversational flow. Managers should score those moments directly from transcripts and call recordings rather than rely on aggregate dashboards alone.
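The four rubric questions can be captured as a fixed yes/no checklist per call sample. This sketch assumes managers record their answers as booleans under hypothetical keys; `weakest_item` surfaces the question that fails most often across the sample set.

```python
from typing import Dict, List

# The four rubric questions above, as hypothetical keys.
RUBRIC = ("right_source", "right_moment", "within_boundaries", "kept_flow")


def score_sample(answers: Dict[str, bool]) -> int:
    """Score one call sample from 0 to 4; every rubric item must be answered."""
    missing = [q for q in RUBRIC if q not in answers]
    if missing:
        raise ValueError(f"unscored rubric items: {missing}")
    return sum(bool(answers[q]) for q in RUBRIC)


def weakest_item(samples: List[Dict[str, bool]]) -> str:
    """Rubric item that fails most often across samples (first listed wins ties)."""
    failures = {q: sum(not s[q] for s in samples) for q in RUBRIC}
    return max(failures, key=failures.get)
```

Refusing partially scored samples keeps the weekly numbers comparable from one review to the next.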

Gather examples with enough detail to improve the system. Save cases where the assistant produced a strong recommendation, where it introduced noise, where it failed to recognize a high-risk topic, and where better source hierarchy would have changed the rep’s response. That review loop gives product, enablement, and operations teams a concrete basis for prompt tuning, content revision, and workflow changes.

7. Train reps, refine the system, and expand responsibly

A pilot proves that the assistant can work. Adoption depends on whether reps know how to use it under pressure. The best training does not center on features; it centers on a short set of sales moments such as a pricing pushback, a technical dependency, a competitor claim, or a procurement delay.

That approach matters because sellers do not need another dashboard lesson. They need repetition on the exact behaviors that help in a live conversation: how to ask for a tighter answer, how to turn a suggested response into their own voice, how to spot when a question needs a specialist, and how to use the assistant without slowing the call. New hires benefit from simulated call drills; experienced reps benefit from role-specific patterns that match their motion, deal size, and product complexity.

Turn managers into operators, not observers

Manager behavior sets the standard for the team. When frontline leaders use assistant data in call reviews, forecast discussions, and rep coaching, the system becomes part of normal execution rather than a side experiment.

A practical manager playbook usually includes three habits:

- Review a fixed sample of assisted calls each week: A small, consistent set of calls reveals more than a broad dashboard. Managers should look for buyer questions that changed the flow of the deal and check whether the assistant helped the rep recover, clarify, or move to a firmer next step.

- Coach for judgment, not tool usage alone: A rep should know when to accept a suggestion, when to reframe it, and when to ignore it. The goal is not mechanical compliance; it is stronger call quality and better buyer communication.

- Route patterns back to the right owners: When the same issue appears across calls — weak competitive language, unclear packaging guidance, gaps in security responses, or procurement confusion — managers should pass that pattern to enablement, operations, product marketing, or legal with concrete examples.

Use real call evidence to tune the system

The most useful improvement work starts with actual buyer language. Transcript clusters, prompt logs, and repeated search terms often show that the problem is not model quality at all. In many cases, the assistant misses because the business uses one term and buyers use another: a prospect says “SSO,” internal content says “identity federation”; a rep asks about “enterprise controls,” the product team writes “admin policies.” Those mismatches reduce answer quality even when the underlying content is correct.
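A terminology map is one straightforward fix for these mismatches: rewrite the buyer's phrasing into the terms internal content actually uses, then search with every variant. The map entries below come from the examples above; `TERM_MAP` and `expand_query` are hypothetical names, and a real map would grow out of transcript review.

```python
import re
from typing import Dict, List

# Hypothetical terminology map built from transcript review:
# buyer phrasing -> terms the internal content actually uses.
TERM_MAP: Dict[str, List[str]] = {
    "sso": ["identity federation", "single sign-on"],
    "enterprise controls": ["admin policies"],
}


def expand_query(query: str, term_map: Dict[str, List[str]] = TERM_MAP) -> List[str]:
    """Return the lowercased query plus variants rewritten into internal terminology."""
    q = query.lower()
    variants = [q]
    for buyer_term, internal_terms in term_map.items():
        # Word boundaries stop "sso" from matching inside words like "lessons".
        pattern = r"\b" + re.escape(buyer_term) + r"\b"
        if re.search(pattern, q):
            for term in internal_terms:
                variants.append(re.sub(pattern, lambda _m, t=term: t, q))
    return variants
```

For example, "Do you support SSO?" expands to three queries, including "do you support identity federation?", so the correct article is found even though it never uses the buyer's word.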

Teams need a steady review cadence to fix that gap. A monthly operating review should examine unresolved intents, low-use prompts, overly long answer cards, manual follow-up work that still slips through, and cases where the assistant surfaces the wrong level of detail. From there, teams can update terminology maps, tighten prompt templates, restructure answer cards, and adjust routing logic. That process keeps the system useful as messaging changes, packaging evolves, and new objections appear in market.

Expand by motion, then across the revenue cycle

Expansion should follow operational fit, not internal excitement. The next rollout should go to the sales motion that shares the most context, language, and workflow logic with the first one. A team that starts with inbound qualification may move next into discovery for a complex product line; a team that starts in late-stage commercial calls may move next into renewals, where pricing policy, customer history, and support context already matter.

As coverage grows, the assistant should start to produce value beyond the individual rep. Patterns from sales conversations can sharpen onboarding content, expose product friction, improve handoffs to support, and give revenue operations cleaner data about why deals stall. At that stage, the system does more than help a seller answer faster; it gives the business a tighter feedback loop between customer conversations and the teams responsible for product, policy, and service.

Frequently Asked Questions

1. What specific tasks can a conversational agent perform during sales calls?

During a live sales call, a conversational agent can handle far more than basic lookup. The strongest systems listen for intent shifts, detect buying signals, and surface the exact asset a rep needs without breaking the flow of the conversation.

Common in-call tasks include:

- Live transcription and speaker separation: The system captures what each participant says, which makes later summaries more accurate and helps the assistant react to the right speaker.

- Question-to-answer matching: When a buyer asks about deployment limits, implementation scope, contract terms, or feature fit, the assistant pulls the best approved answer from internal sources.

- Signal detection: It flags phrases that indicate urgency, budget approval, stakeholder expansion, churn risk, or procurement readiness.

- Prompted next moves: It suggests a technical follow-up, executive review, pricing review, or specialist handoff based on the direction of the discussion.

- Structured capture: It records decisions, open questions, owners, deadlines, and agreed next steps in a format the CRM or downstream workflow can use.
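Signal detection can start as simple phrase matching on each transcribed utterance before a team invests in a trained classifier. The sketch below swaps in regular-expression patterns for that simpler approach; the pattern lists and `detect_signals` are hypothetical and would need tuning against a team's own transcripts.

```python
import re
from typing import Dict, List

# Hypothetical phrase patterns per signal; real lists would come from reviewing
# the team's own transcripts.
SIGNAL_PATTERNS: Dict[str, List[str]] = {
    "urgency": [r"\bby end of (the )?quarter\b", r"\basap\b"],
    "budget_approval": [r"\bbudget (is |has been )?approved\b"],
    "stakeholder_expansion": [r"\bloop in\b", r"\bbring in (our|the)\b"],
    "churn_risk": [r"\bnot renewing\b", r"\bcancel\w*\b"],
    "procurement_readiness": [r"\bsend (over )?the (msa|contract)\b"],
}


def detect_signals(utterance: str) -> List[str]:
    """Return the sorted names of signals whose patterns appear in one utterance."""
    text = utterance.lower()
    return sorted(
        name
        for name, patterns in SIGNAL_PATTERNS.items()
        if any(re.search(p, text) for p in patterns)
    )
```

Phrase matching is easy to audit but misses paraphrases, which is why the assistant's understanding layer typically moves to intent classification once enough labeled calls exist.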

Some teams also use the assistant for multilingual support on global calls, especially when one buyer asks for clarification in a second language or when regional teams need consistent terminology across markets. In those cases, the agent acts as a precision layer for language as much as for knowledge.

2. How does real-time AI support improve sales outcomes?

The biggest gain comes from compression of decision time inside the call. A buyer asks a detailed question; the rep gets a usable answer immediately; the conversation continues without a reset. That shortens the distance between buyer interest and seller response, which often determines whether a call advances toward technical validation or commercial review, or stalls.

Real-time support also changes how teams operate after the meeting. It can reduce recap lag, improve field completion in the CRM, and make pipeline updates more consistent across the team. In practice, that means sales leaders can review cleaner data, managers can coach from real call moments instead of memory, and operations teams can trust that next-step records reflect what the buyer actually agreed to.

The outcome is not only faster execution. It is better decision quality across the revenue process — fewer missed commitments, fewer dropped details, and fewer deals slowed by avoidable internal cleanup.

3. What are the key benefits of using conversational agents for sales reps?

For reps, one of the clearest benefits is cognitive relief. Enterprise calls often require a seller to track product detail, buyer intent, commercial boundaries, and internal process all at once. A conversational agent offloads part of that burden so the rep can focus on tone, judgment, and relationship management.

That creates practical advantages across the team:

- Lower mental overhead: Reps no longer need to hold every product limit, policy nuance, and customer detail in working memory.

- Stronger execution across regions and segments: Teams in different geographies or product lines can use the same approved language and response patterns.

- Better manager review: Calls become easier to assess because the system captures not just the transcript, but also the prompts, sources, and actions tied to the interaction.

- Smoother specialist collaboration: Solution consultants, security teams, legal reviewers, and customer success leads receive cleaner context when a deal moves across functions.

- More repeatable performance: Sellers can rely on a stable operating layer instead of building every answer from scratch.

In high-change environments, this matters even more. New packaging, updated policies, evolving competitor claims, and product releases can shift weekly. A conversational agent helps teams keep pace without constant retraining on every minor update.

4. How can conversational AI help with objection handling during calls?

Conversational AI helps most when objection support follows a clear taxonomy. Not every objection needs the same response structure, and the system should treat commercial pushback differently from technical doubt or procurement friction.

A useful objection model usually separates concerns into categories such as:

- Commercial: price, discount requests, contract flexibility, payment terms.

- Technical: integrations, architecture, scalability, deployment model, API limits.

- Operational: implementation effort, internal change load, training needs, timeline.

- Competitive: side-by-side comparisons, migration concerns, claims from another vendor.

- Risk and review: security questionnaires, compliance language, data handling, legal review.

Once the objection type is clear, the assistant can surface the right response shape. A pricing objection may require value framing plus a packaging note. A technical objection may require a product fact plus an architecture reference. A procurement objection may require process clarity and owner assignment. The best systems also know when not to answer directly — for example, when a buyer asks for a non-standard concession or a formal legal commitment that requires specialist review.
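A minimal sketch of taxonomy-driven objection handling: check first for requests that should never get a direct answer, then classify, then return a response shape. Keyword cues stand in here for a trained classifier. The commercial and technical shapes follow the text above, the risk and competitive shapes borrow "approved statement", "review path", and "approved differentiation" from earlier sections, and the operational shape is an illustrative assumption.

```python
from typing import Dict, List, Tuple

# Hypothetical lowercase cue substrings; categories are checked in the order listed.
ESCALATION_CUES = ["non-standard", "custom terms", "legal commitment", "indemnif"]

CATEGORY_CUES: Dict[str, List[str]] = {
    "commercial": ["pric", "discount", "payment terms", "contract flexib"],
    "technical": ["integrat", "architecture", "scalab", "deployment model", "api limit"],
    "operational": ["implementation effort", "training", "timeline", "change load"],
    "competitive": ["competitor", "migrat", "compared to"],
    "risk_and_review": ["security questionnaire", "compliance", "data handling", "legal review"],
}

RESPONSE_SHAPES: Dict[str, List[str]] = {
    "commercial": ["value framing", "packaging note"],
    "technical": ["product fact", "architecture reference"],
    "operational": ["implementation outline", "owner assignment"],  # assumption
    "competitive": ["approved differentiation", "source reference"],
    "risk_and_review": ["approved statement", "review path"],
}


def plan_response(objection: str) -> Tuple[str, List[str]]:
    text = objection.lower()
    # Non-standard concessions and formal commitments go to a specialist, unanswered.
    if any(cue in text for cue in ESCALATION_CUES):
        return "escalate", ["specialist review"]
    for category, cues in CATEGORY_CUES.items():
        if any(cue in text for cue in cues):
            return category, RESPONSE_SHAPES[category]
    return "unclassified", ["clarifying question"]
```

Checking escalation cues before classification is deliberate: a "non-standard discount" request is commercial on the surface, but it must not receive a commercial answer.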

5. What technologies are involved in implementing conversational agents for sales?

A production-ready sales agent usually relies on six distinct layers. This matters because most implementation failures come from a gap in one layer, not from the language model alone.

- Speech layer: speech-to-text, speaker identification, and timestamp accuracy for live calls.

- Understanding layer: intent classification, entity extraction, and conversation-state tracking so the system knows what the buyer actually asked.

- Retrieval layer: hybrid search across structured records and unstructured content — CRM fields, contracts, call notes, policy docs, knowledge articles, and internal communications.

- Response layer: grounded answer construction, citation attachment, response ranking, and quality checks before the rep sees the output.

- Action layer: workflow triggers for CRM updates, email drafts, calendar follow-ups, task creation, and internal routing.

- Control layer: policy enforcement, fallback logic, audit trails, confidence scoring, retention rules, and usage analytics.
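For the retrieval layer, "hybrid search" needs some way to merge rankings from different indexes, for example a keyword index over knowledge articles and a vector index over the same content. Reciprocal rank fusion is one common technique for this, named here as one option among several; the document IDs in the usage example are made up.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Merge best-first ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in;
    k dampens the advantage of top positions (60 is a commonly used default).
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest score first; ties broken by ID so results are deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))
```

Given keyword hits `["kb-12", "kb-7", "crm-acme"]` and vector hits `["kb-7", "policy-3", "kb-12"]`, the fused order is `["kb-7", "kb-12", "policy-3", "crm-acme"]`: documents that rank well in both lists rise to the top. Because RRF uses only ranks, it avoids having to calibrate raw scores across very different indexes.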

The most mature deployments also add evaluation systems that grade answer quality and retrieval quality separately. That distinction matters. A weak answer may come from poor retrieval, weak phrasing, stale source material, or a policy rule that blocked the best source. Teams that measure each layer can improve the assistant with much more precision.
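Separate grading can be as simple as scoring retrieval with recall@k against reviewer-labeled relevant sources, then attributing each wrong answer to one layer. The decision order in `diagnose` is a hypothetical rule of thumb that mirrors the four causes named above, not a standard method.

```python
from typing import Sequence


def recall_at_k(retrieved: Sequence[str], relevant: Sequence[str], k: int) -> float:
    """Share of the labeled relevant sources that appear in the top k results."""
    if not relevant:
        raise ValueError("need at least one labeled relevant source")
    hits = set(retrieved[:k]) & set(relevant)
    return len(hits) / len(set(relevant))


def diagnose(answer_correct: bool, retrieved: Sequence[str], relevant: Sequence[str],
             best_source_blocked: bool, source_stale: bool, k: int = 5) -> str:
    """Attribute a graded miss to one layer (hypothetical decision order)."""
    if answer_correct:
        return "ok"
    if best_source_blocked:
        return "policy"          # a control-layer rule removed the best source
    if recall_at_k(retrieved, relevant, k) == 0:
        return "retrieval"       # the right source never reached the response layer
    if source_stale:
        return "stale_content"   # right document, outdated facts
    return "response"            # good sources, weak phrasing or construction
```

Tallying `diagnose` results over a review set shows which layer to fix first, instead of treating every bad answer as a model problem.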

The difference between a sales team that stumbles through live questions and one that handles them with precision often comes down to whether the right knowledge reaches the rep at the right moment. That gap is solvable now — not with more tools, but with a unified AI layer that connects your people to trusted answers exactly when they need them.

Request a demo to explore how we can help you transform the way your team sells, supports, and connects with customers.