How to use AI for proactive customer support notifications

Most customer support teams still operate in a reactive cycle: a customer hits a problem, files a ticket, and waits. AI changes that equation by giving teams the ability to detect friction early and deliver useful updates before the customer ever reaches out.

Proactive customer support notifications represent a fundamental shift in how enterprises manage the service experience. Instead of treating every interaction as a response to a complaint, AI-driven notifications turn support into a continuous, trust-building channel that reduces uncertainty and shortens resolution time.

This guide covers what proactive AI notifications actually look like in practice, how to implement them without creating noise, and how to measure their impact across the customer lifecycle. The focus is on enterprise teams that need real strategies — not theory — to improve customer experience at scale.

What is AI for proactive customer support notifications?

AI for proactive customer support notifications is the use of connected, permission-aware AI to detect customer risk or friction early and deliver timely, useful updates before a ticket is filed. The system works best when each notification is grounded in trusted company data, tied to a clear next step, and easy to escalate when human judgment is needed. This is not about increasing message volume. It is about making support feel more responsive by reducing the gap between an issue and the customer's awareness of it.

For enterprise teams, the distinction between proactive and reactive support comes down to data connectivity. A proactive system must pull context from multiple sources — support ticket history, product telemetry, internal knowledge bases, live operational dashboards, and customer account data — to determine whether an outreach is actually warranted. Without that unified context, automated notifications become noise: generic alerts that erode trust instead of building it.

What makes proactive notifications effective

The difference between a helpful proactive notification and an unwanted interruption is precision. Three factors separate the two:

- Grounded context: The AI must retrieve verified, permission-enforced information from the systems that matter — CRM records, knowledge articles, incident monitoring tools, billing platforms — before it generates any customer-facing message. If the model cannot confirm the relevance and accuracy of the underlying data, it should not send the notification.

- Actionable next steps: Every notification should answer a simple question for the customer: "What do I do now?" A shipping delay alert paired with a link to reschedule delivery is useful. The same alert without a path forward creates frustration. Proactive customer service only works when the outreach leads somewhere.

- Escalation readiness: Not every situation can be resolved through an automated message. The system needs clear thresholds for routing cases to a human agent — and when it does, the agent should receive full context so the customer never has to repeat themselves.

Where proactive AI notifications fit in the support lifecycle

Proactive notifications are not a replacement for reactive support. They are a layer that sits upstream, designed to intercept common friction points before they generate inbound volume. The most practical applications map to specific moments in the customer lifecycle:

- Incident and outage communication: AI monitors service health data, identifies affected customer segments, and delivers targeted updates with estimated resolution timelines — all before the first ticket arrives.

- Onboarding guidance: When product usage data shows a new customer has stalled during setup, AI can trigger a contextual message with the right knowledge base article or a direct link to schedule help.

- Billing and renewal support: Failed payment attempts, upcoming renewals, and plan changes are high-churn moments. Automated, timely reminders with clear recovery options prevent silent cancellations.

- Case milestone updates: Rather than force customers to check a portal or send a follow-up email, AI can push real-time status changes — "Your case has been assigned," "A fix has been deployed" — directly to the customer's preferred channel.

Each of these use cases depends on the same underlying capability: the AI must connect to the systems where customer and operational data lives, retrieve the right information with appropriate permissions, and generate a message that is specific enough to be useful. Platforms such as Glean that combine enterprise search, retrieval-augmented generation, and workflow integration make this kind of cross-system intelligence practical without requiring support teams to rebuild their existing tooling.

The real measure of success is not how many notifications get sent. It is how much inbound ticket volume drops, how much faster issues reach resolution, and how consistently customers feel informed without having to ask.
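As a rough illustration of how that measurement could be set up, the sketch below normalizes inbound volume per 1,000 active customers and compares two periods. The function names and the numbers are hypothetical and exist only to show the arithmetic; they are not measured results.

```python
def tickets_per_1000(tickets: int, active_customers: int) -> float:
    """Normalize inbound volume so customer-base growth does not hide the trend."""
    if active_customers <= 0:
        raise ValueError("active_customers must be positive")
    return tickets / active_customers * 1000


def relative_change(before: float, after: float) -> float:
    """Fractional change from `before` to `after`; negative means a reduction."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before


# Illustrative numbers only (assumed for this example).
before = tickets_per_1000(tickets=120, active_customers=4000)
after = tickets_per_1000(tickets=90, active_customers=4500)
change = relative_change(before, after)
print(f"Inbound tickets per 1,000 customers changed by {change:.0%}")
```

Normalizing by active customers matters because raw ticket counts can fall or rise simply because the customer base changed.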

How to use AI for proactive customer support notifications

A strong proactive notification program starts with one operating rule: send an update only when it reduces customer effort at a specific moment. The message should remove uncertainty, clarify status, or shorten the path to resolution; it should never ask the customer to interpret an internal event on their own.

That rule shapes the architecture. High-performing teams use AI customer support systems that consult support records, product activity, incident feeds, order and billing data, and approved internal content before they draft anything customer-facing. The pattern is consistent across customer service platforms: the best results come from connected orchestration across systems, not from a stand-alone notification bot.

Set the operating rule before you configure the system

Before teams automate outreach, they need a policy for what counts as customer-visible. Many internal events never merit a message. A slight latency increase, a brief queue spike, or a transient sync issue may matter to operations; it may not matter to the customer unless it blocks an action or creates real account risk.

A practical rule set usually includes four checks:

- Material impact: The issue affects access, delivery, billing, onboarding, or another customer task with clear business value.

- Clarity: The system can explain the issue in plain language with enough certainty to avoid confusion.

- Usefulness: The outreach includes a clear path forward — such as a workaround, status page, appointment option, payment fix, or expected update window.

- Review threshold: Sensitive cases move to a person before any message goes out; this often applies to regulated workflows, high-value accounts, and emotionally charged situations.

This standard prevents over-notification. It also gives support, product, and operations teams a shared basis for message approval, channel choice, and response ownership.
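The four checks above can be expressed as a small decision function. This is a minimal sketch, not a reference implementation: the `CandidateEvent` fields, the `MATERIAL_AREAS` set, and the 0.8 confidence cutoff are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of customer tasks treated as "material" in this sketch.
MATERIAL_AREAS = {"access", "delivery", "billing", "onboarding"}


@dataclass
class CandidateEvent:
    affected_area: str               # e.g. "billing" or "internal_latency"
    explanation_confidence: float    # 0.0 to 1.0, certainty of the plain-language summary
    next_step: Optional[str]         # workaround, status page, payment fix, etc.
    sensitive: bool                  # regulated workflow, high-value account, charged situation


def decide(event: CandidateEvent, min_confidence: float = 0.8) -> str:
    """Return "suppress", "review", or "send" for a candidate notification."""
    if event.affected_area not in MATERIAL_AREAS:
        return "suppress"            # material impact check failed
    if event.explanation_confidence < min_confidence:
        return "suppress"            # clarity check failed
    if not event.next_step:
        return "suppress"            # usefulness check failed
    if event.sensitive:
        return "review"              # review threshold: a person approves first
    return "send"


print(decide(CandidateEvent("billing", 0.9, "Update your payment method", False)))
```

Keeping the checks in a fixed order makes each suppression reason easy to log and audit.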

Build a signal model around customer outcomes

The most reliable proactive systems look for patterns that precede support demand. That means more than anomaly detection alone. The AI needs to weigh customer history, account state, known issue patterns, and operational changes to determine whether the customer will benefit from an update now.

A practical signal model usually draws from four categories:

- Usage friction: Failed actions, abandoned setup flows, repeated retries, login trouble, or sudden drops in feature adoption.

- Case history: Reopened tickets, unresolved dependencies, repeat contact on the same issue, prior escalations, and negative sentiment.

- Business events: Renewal windows, payment problems, delivery delays, SLA risk, maintenance windows, and service degradation.

- Approved guidance: Support macros, incident playbooks, help center articles, policy language, and customer-specific notes from account teams.

This is where retrieval discipline matters. The model should work from verified business records and approved support content, not from loose inference. Prediction becomes useful only when it connects directly to an informed operational response.
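One way to picture the signal model is as a record with one field per category, plus a rule that outreach is only a candidate when a risk signal is paired with approved content to answer it. The class, field names, and sample values below are hypothetical and meant as a sketch.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CustomerSignals:
    usage_friction: List[str] = field(default_factory=list)     # failed actions, stalled setup
    case_history: List[str] = field(default_factory=list)       # reopened tickets, escalations
    business_events: List[str] = field(default_factory=list)    # renewals, payment problems
    approved_guidance: List[str] = field(default_factory=list)  # macros, playbooks, articles


def is_outreach_candidate(signals: CustomerSignals) -> bool:
    """A risk signal alone is not enough; approved guidance must exist to respond with."""
    has_risk = bool(
        signals.usage_friction or signals.case_history or signals.business_events
    )
    return has_risk and bool(signals.approved_guidance)


signals = CustomerSignals(
    usage_friction=["setup abandoned at step 3"],
    approved_guidance=["kb: completing workspace setup"],
)
print(is_outreach_candidate(signals))
```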

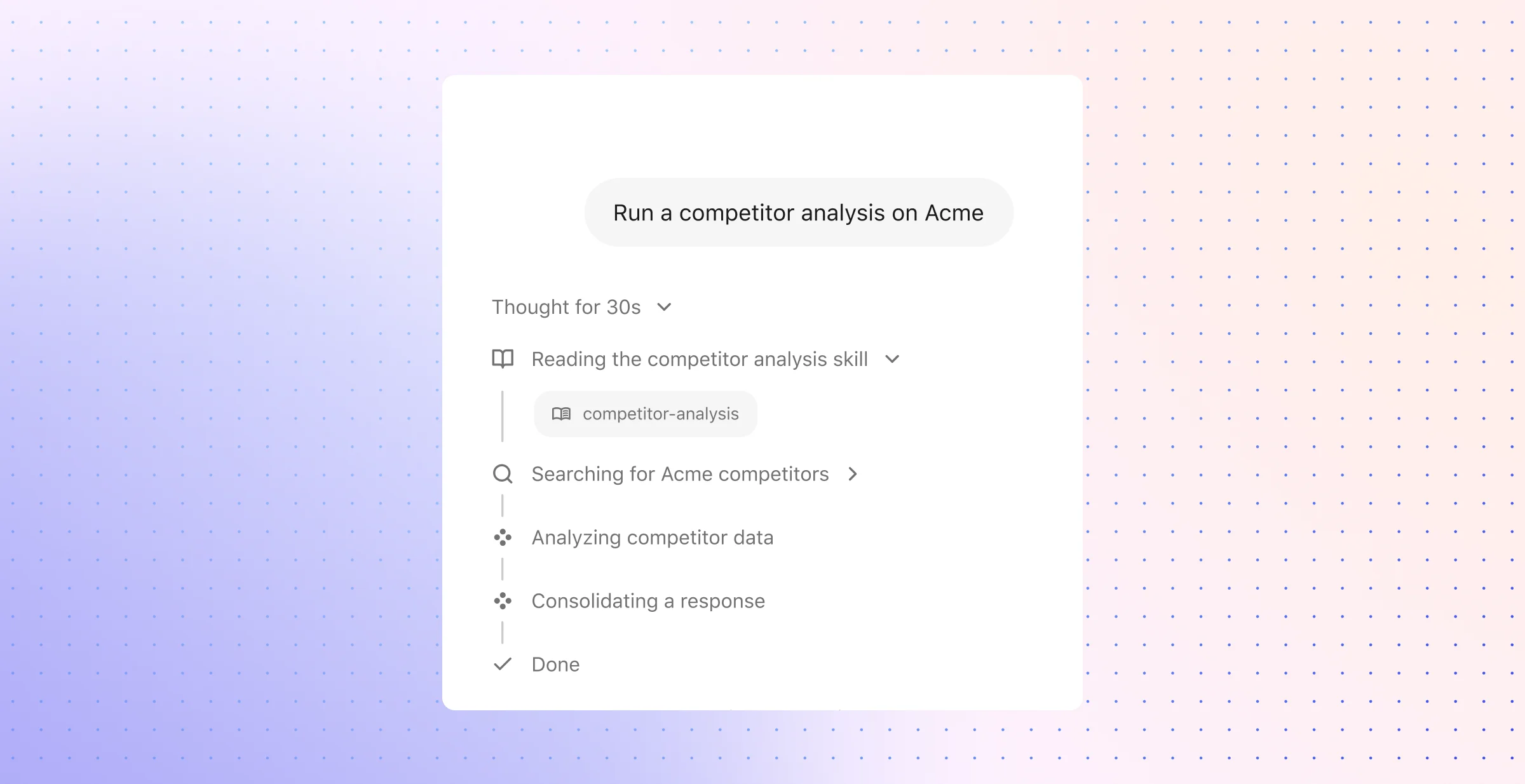

Use a simple workflow that support teams can govern

The workflow should stay easy to audit. Complex logic belongs behind the scenes; the operating path should remain readable for support leaders who need to monitor quality, risk, and customer impact.

A practical sequence looks like this:

- Detect: A trigger enters the system from telemetry, case activity, billing status, logistics data, or service monitoring.

- Validate: The AI checks whether the event still applies, whether the customer already received an answer elsewhere, and whether duplicate outreach would create noise.

- Draft: The system selects the right template and fills it with verified account and issue context.

- Direct: The message points the customer to one clear option — check status, retry with instructions, review a knowledge article, confirm account details, or expect a timed follow-up.

- Transfer: When the issue falls outside automation policy, the case moves to an agent with a concise brief, source context, and recommended handling notes.

That flow matches what support teams usually need from automated notifications: fewer repetitive contacts, cleaner case triage, and a service model that scales without forcing agents to work from fragmented systems.
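The sequence above can be sketched as a single function that support leaders could read top to bottom. Everything here is an assumption made for illustration: the `Trigger` fields, the `TEMPLATES` and `ACTIONS` tables, and the in-memory `recently_notified` set stand in for real systems.

```python
from dataclasses import dataclass


@dataclass
class Trigger:                      # Detect: an event arriving from an upstream system
    customer_id: str
    kind: str                       # e.g. "payment_failed"
    still_active: bool              # does the event still apply?
    already_answered: bool          # did the customer get an answer elsewhere?
    within_policy: bool             # is automation allowed for this case?


# Hypothetical approved templates and the single next action for each trigger kind.
TEMPLATES = {"payment_failed": "Your last payment did not go through. {action}"}
ACTIONS = {"payment_failed": "Update your payment method in account settings."}


def run_workflow(trigger: Trigger, recently_notified: set) -> dict:
    # Validate: skip stale, already-answered, or duplicate outreach.
    if not trigger.still_active or trigger.already_answered:
        return {"outcome": "skipped", "reason": "no longer relevant"}
    key = (trigger.customer_id, trigger.kind)
    if key in recently_notified:
        return {"outcome": "skipped", "reason": "duplicate"}
    # Transfer: anything outside automation policy goes to an agent with a brief.
    if not trigger.within_policy or trigger.kind not in TEMPLATES:
        brief = f"{trigger.kind} for customer {trigger.customer_id}: needs agent review"
        return {"outcome": "transferred", "brief": brief}
    # Draft and Direct: fill the approved template with one clear next action.
    message = TEMPLATES[trigger.kind].format(action=ACTIONS[trigger.kind])
    recently_notified.add(key)
    return {"outcome": "sent", "message": message}


seen = set()
print(run_workflow(Trigger("c1", "payment_failed", True, False, True), seen))
```

Returning a plain dictionary with an `outcome` and a reason or brief keeps every decision inspectable after the fact.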

Turn operational detail into customer-safe language

Raw support data rarely belongs in a customer notification. Internal notes often contain technical shorthand, incomplete root-cause analysis, and process language that makes sense only to engineers or operations staff. AI becomes useful here when it converts that material into a message a customer can understand in seconds.

Prompt-based support workflows and contextual summarization play a central role. One prompt can shape a customer-ready update with the right tone, length, and policy constraints. Another can produce an internal agent brief with account context, prior interactions, likely customer concerns, and the best approved resolution path. This split-output model is worth adopting because it improves both message quality and handoff quality.

The customer version should stay concrete: what changed, who may feel the impact, what the organization knows now, and what the customer can expect next. The agent version should stay operational: timeline, ticket and account history, approved knowledge sources, and risk signals that could affect the conversation.
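A minimal sketch of the split-output idea follows: one set of verified facts, two prompts with different instructions. The prompt wording, the 80-word limit, and the `build_prompts` helper are assumptions for illustration; the sketch deliberately stops before any model call so it does not depend on a particular provider.

```python
def build_prompts(context: dict) -> dict:
    """Build a customer-facing prompt and an agent-brief prompt from the same facts."""
    facts = "\n".join(f"- {key}: {value}" for key, value in sorted(context.items()))
    customer_prompt = (
        "Write a customer update in plain language, under 80 words.\n"
        "Cover: what changed, who may be affected, what is known now, what happens next.\n"
        "Use only the facts below. Do not speculate about root cause.\n"
        f"Facts:\n{facts}"
    )
    agent_prompt = (
        "Write an internal brief for the support agent taking over this case.\n"
        "Cover: timeline, ticket and account history, approved sources, risk signals.\n"
        "Use only the facts below.\n"
        f"Facts:\n{facts}"
    )
    return {"customer": customer_prompt, "agent": agent_prompt}


prompts = build_prompts({"issue": "delayed export", "status": "fix deployed"})
print(prompts["customer"])
```

Because both prompts draw on the same fact list, the customer message and the agent brief cannot drift apart on what is actually known.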

Keep the workflow inside the systems agents already trust

Notification quality drops when support teams need to leave their main workspace to verify a message or recover context. Embedded assistance inside established support environments solves that problem because the same AI layer that prepares the outbound update can also surface relevant case notes, account history, service events, and approved content in the agent view.

This model improves handoffs without forcing a workflow change. Support teams can review the notification, inspect the underlying context, and take over the case from the same system where they already manage tickets. Contextual assistance inside platforms such as Zendesk and Service Cloud illustrates why this matters: adoption tends to rise when AI fits the existing support workflow, and response quality tends to improve when the agent receives a compact, context-rich brief instead of a bare alert.

The operational advantage is straightforward. Detection, drafting, review, and transfer all happen within the same support motion, which makes proactive outreach easier to govern and far more consistent across teams.

How to use AI for proactive customer support notifications: Frequently Asked Questions

After the core workflow is in place, most teams hit a second layer of questions: which tools deserve budget, what prediction methods hold up in production, which notification patterns avoid fatigue, how agent work changes, and which outcomes matter beyond ticket count. Those details shape whether proactive support feels disciplined or noisy.

1. What are the best AI tools for proactive customer support notifications?

The best tools act like an operating layer for support communication, not a campaign engine. They should handle event intake, policy checks, message assembly, approval logic, channel controls, and post-send measurement in one place. That matters most in enterprises where a single notification may depend on an incident platform, a billing system, a CRM record, and a help center article.

A practical evaluation framework usually includes these capabilities:

- Event intake from core systems: The tool should accept signals from product telemetry, case platforms, order systems, billing systems, and service monitoring tools without custom work for every new use case.

- Template control with dynamic fields: Teams need message templates that pull in live account, case, or service data without free-form drift.

- Approval paths and policy rules: High-risk notices, regulated account updates, and executive-tier customer messages often need stricter review.

- Delivery governance: Frequency caps, quiet hours, channel preference logic, and language support prevent overreach.

- Operational reporting: Support leaders need send volume, open rate, click rate, deflection impact, reopen rate, and escalation rate in one view.
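To make the delivery governance item concrete, here is a small sketch of a quiet-hours and frequency-cap check. The default values (two messages per 24 hours, quiet hours from 21:00 to 08:00 in the customer's local time) and the `may_deliver` name are assumptions, not recommendations.

```python
from datetime import datetime, time, timedelta
from typing import List


def may_deliver(
    now: datetime,
    sent_at: List[datetime],
    max_per_day: int = 2,
    quiet_start: time = time(21, 0),
    quiet_end: time = time(8, 0),
) -> bool:
    """Return True if a message may go out at `now` (customer-local time)."""
    current = now.time()
    if quiet_start <= quiet_end:
        in_quiet_hours = quiet_start <= current < quiet_end
    else:
        # The quiet window wraps past midnight, e.g. 21:00 to 08:00.
        in_quiet_hours = current >= quiet_start or current < quiet_end
    if in_quiet_hours:
        return False
    # Frequency cap: count sends within the trailing 24 hours.
    recent = [s for s in sent_at if now - s < timedelta(hours=24)]
    return len(recent) < max_per_day


noon = datetime(2024, 1, 10, 12, 0)
print(may_deliver(noon, [noon - timedelta(hours=2)]))
```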

Tools that sit inside established support platforms tend to work better than tools that require agents to switch tabs for every task. Summaries, response drafts, and customer updates land closer to the work itself, which improves consistency across shifts and teams.

2. How can AI predict customer issues before they arise?

Prediction starts with baselines. The system learns what normal behavior looks like for a product, account segment, region, or lifecycle stage; it then flags movement away from that baseline when the pattern matches past support outcomes. That approach works far better than a simple rule such as “send a notice after one failed action.”

Several signal types tend to hold up well in production:

- Time-series changes: A sudden drop in successful task completion, a spike in retries, or a rise in latency for one customer segment.

- Cohort comparison: One account moves off the normal path for peers of similar size, plan, or rollout stage.

- Case-sequence patterns: A billing question after a downgrade, followed by a permissions issue, may point to a likely escalation.

- Text signals: Support replies, survey comments, and chat transcripts can reveal frustration, confusion, or urgency before a formal complaint.

- Lifecycle gaps: A customer misses setup milestones that usually precede activation or renewal success.

The strongest prediction systems pair model output with confidence thresholds and fallback rules. That reduces false alarms and helps support teams reserve outreach for cases with a clear reason to act.
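As a simple stand-in for the baseline idea, the sketch below flags a metric when it moves far from its recent history, using a z-score with a threshold and a fallback for thin data. A z-score is only one possible technique and is swapped in here for illustration; the threshold of 3.0, the seven-point minimum, and the sample values are assumptions.

```python
from statistics import mean, stdev
from typing import List


def flag_deviation(
    history: List[float],
    current: float,
    z_threshold: float = 3.0,
    min_points: int = 7,
) -> bool:
    """Flag `current` when it sits far outside the baseline formed by `history`."""
    if len(history) < min_points:
        return False                  # fallback rule: too little data to trust a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return current != baseline    # perfectly flat history: any change is a deviation
    z_score = (current - baseline) / spread
    return abs(z_score) >= z_threshold


# Hypothetical daily task-completion rates for one customer segment.
completion_rates = [0.95, 0.96, 0.94, 0.95, 0.97, 0.95, 0.96]
print(flag_deviation(completion_rates, 0.70))
```

A flag like this would feed the policy checks rather than trigger a message directly, which keeps false alarms away from customers.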

3. What strategies work best for automated customer notifications?

The best strategy is prioritization by customer effort. Start with moments where silence creates the most friction: service incidents, shipping or delivery changes, payment problems, onboarding stalls, and case status changes that customers would otherwise chase on their own. Those events have clear value and a low burden of explanation.

Execution quality depends on message design as much as trigger design. Strong programs usually share four traits:

- Specific scope: State who is affected, what changed, and what remains normal.

- Plain language: Replace internal labels and diagnostic terms with customer-ready wording.

- One next action: Offer one clear step — review a status page, confirm a payment method, complete setup, or reply for help.

- Cadence rules: Decide when to send the first notice, when to send the next one, and when to stop.
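Cadence rules in particular are easy to state in code. The following sketch assumes a fixed interval between updates and a hard stop after a maximum number of sends or once the issue is resolved; the four-hour interval, the cap of three, and the `next_send_time` name are illustrative choices.

```python
from datetime import datetime, timedelta
from typing import Optional


def next_send_time(
    opened_at: datetime,
    sends_so_far: int,
    resolved: bool,
    interval: timedelta = timedelta(hours=4),
    max_sends: int = 3,
) -> Optional[datetime]:
    """Return when the next update is due, or None when the cadence should stop."""
    if resolved or sends_so_far >= max_sends:
        return None
    # The first notice goes out at `opened_at`; later ones follow at fixed intervals.
    return opened_at + interval * sends_so_far


opened = datetime(2024, 1, 1, 9, 0)
print(next_send_time(opened, sends_so_far=1, resolved=False))
```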

Prompt-based summarization helps here because raw incident notes and case logs rarely make sense to customers as written. AI can convert technical context into short updates that preserve accuracy without forcing a support lead to rewrite every message by hand.

4. How does AI improve customer experience in support roles?

AI improves support quality when it removes avoidable lag between internal change and customer communication. A case owner no longer has to piece together product logs, prior replies, and service notes before each update. The system can prepare a usable draft, flag policy issues, and keep the message aligned with the latest account state.

That shift also changes agent work in a practical way. Teams spend less time on status composition, duplicate explanation, translation of technical language, and after-hours update prep. In many environments, that leads to cleaner SLA performance, more even quality across agents, and fewer replies that drift in tone or detail from one queue to another.

Another benefit shows up in escalation paths. When a complex account issue moves to a specialist, AI can attach the case timeline, prior customer communication, and unresolved dependencies in a form that shortens review time and reduces back-and-forth inside the support team.

5. What are the benefits of using AI for proactive customer service?

The most immediate benefit is trust through predictability. Customers do not have to guess whether a team noticed the issue, whether a case still sits in queue, or whether a renewal problem will affect service. Timely notice reduces uncertainty in moments that usually damage confidence.

Operational gains follow, but they show up in more than one metric. Teams often see fewer “just checking” contacts, lower case reopen rates, faster movement through onboarding milestones, and better response coverage outside peak support hours. For leadership, the value extends into service consistency: one standard for message quality across products, regions, and queues.

The long-term upside is a more stable support model. AI can help enterprises maintain a high volume of updates without a matching rise in manual coordination, which makes growth easier to absorb across technology, financial services, retail, and other environments with distributed customer operations.

Proactive support is no longer a competitive advantage — it's the baseline customers expect. The teams that get this right are the ones that connect their AI to real operational context, enforce clear notification standards, and keep agents in control of the moments that matter most.
