Tools and techniques for effective AI compliance training

AI agents now handle a growing share of customer support interactions across financial services, healthcare, technology, and retail. That expansion brings a direct challenge: every response, every action, and every piece of data the agent touches must align with legal, regulatory, and organizational standards.

The stakes are high. Healthcare data breaches involving improperly trained AI systems cost an average of $7.42 million per incident, and regulatory penalties for non-compliant customer interactions continue to rise across industries.

A structured approach to AI compliance training bridges the gap between speed and safety. The methods, tools, and techniques that make this possible go well beyond prompt engineering — they require a system-level commitment to trusted knowledge, permission-aware access, and continuous evaluation.

What is AI compliance training for support?

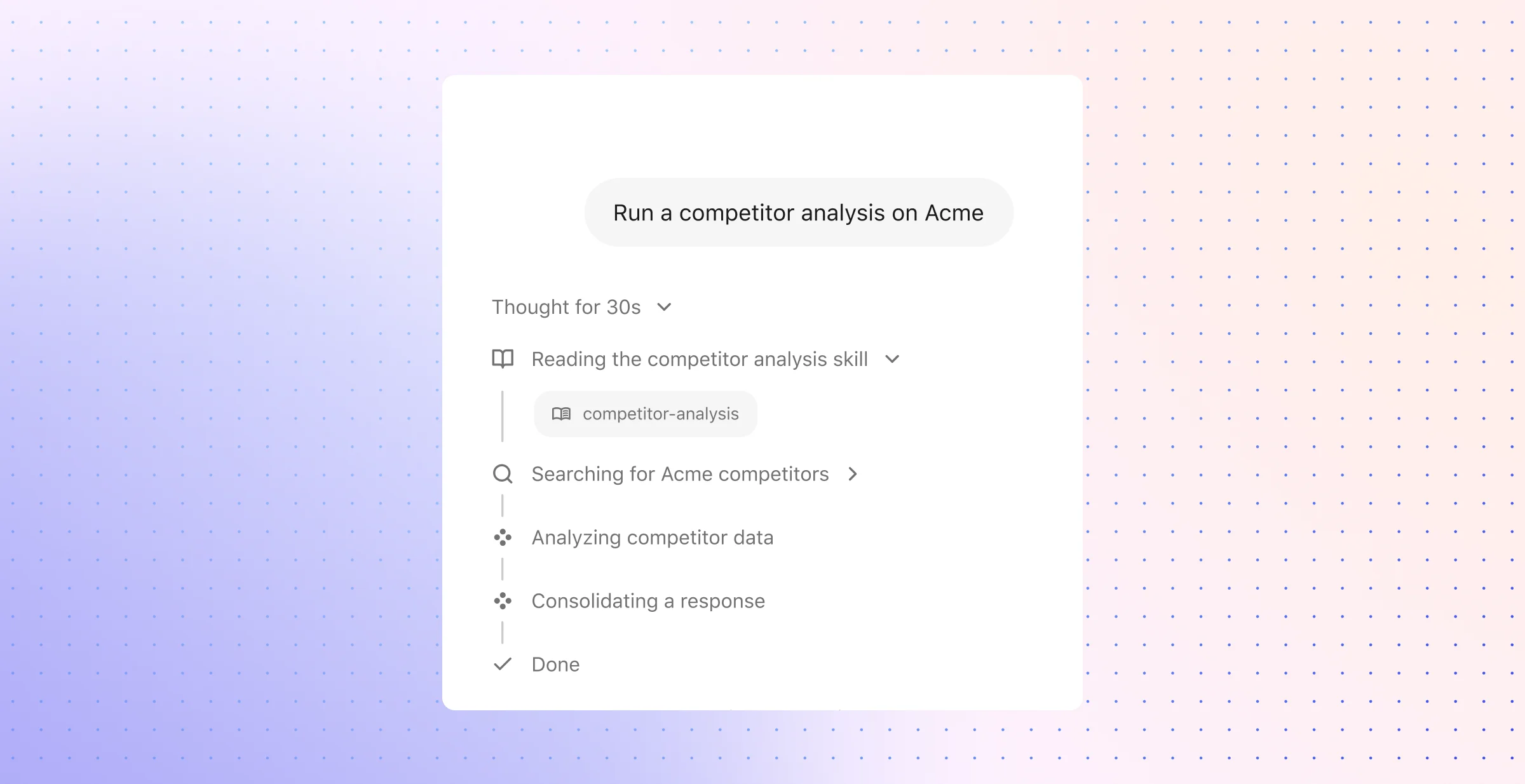

AI compliance training for support is the process of teaching an AI agent to answer, act, and escalate within the legal, regulatory, and company-specific rules that govern customer interactions. Unlike general-purpose model fine-tuning, this discipline addresses the full scope of agent behavior: what the agent can see, which tools it can invoke, which actions it may take, and the precise conditions under which it must hand work to a person.

A strong compliance training program ties the agent to approved company knowledge, current policies, and source-level permissions rather than broad internet knowledge or static prompts alone. This distinction matters. Large language models on their own are prone to hallucination, data leakage, and irrelevant responses. Enterprise-ready AI compliance training wraps the model in a retrieval layer, often built on retrieval-augmented generation (RAG), that grounds every response in verified, permissioned content. Platforms like Glean reinforce this approach by combining enterprise search, a knowledge graph, and strict permission enforcement so the agent only surfaces information a given user is authorized to access.

The goal is practical and measurable across three dimensions:

- Accuracy and grounding: Every agent response should trace back to an approved source — a policy document, a knowledge base article, a product specification. Unsupported generation is the fastest path to compliance failure.

- Appropriate escalation and action boundaries: The agent needs clear rules about when to resolve, when to escalate, and when to refuse. Compliance covers not just language but also system actions — account changes, refund processing, data disclosure — each of which carries its own risk profile.

- Auditability and ongoing evaluation: Compliance cannot be assumed; it must be demonstrated. That requires logging, citation of sources, structured evaluation frameworks, and regular review cycles that compare agent behavior against current policy.
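
As a minimal sketch of the grounding dimension, the check below rejects a response that cites nothing or cites a source outside an approved set. The source IDs, the `AgentResponse` type, and the `grounding_issues` function are hypothetical names chosen for illustration; a real deployment would load the approved set from its own content registry.

```python
from dataclasses import dataclass, field

# Hypothetical approved source IDs (assumption for illustration only).
APPROVED_SOURCES = {"policy/refunds-v3", "kb/shipping-times", "spec/widget-200"}


@dataclass
class AgentResponse:
    text: str
    citations: list[str] = field(default_factory=list)


def grounding_issues(response: AgentResponse) -> list[str]:
    """Return grounding problems; an empty list means the response passes."""
    issues = []
    if not response.citations:
        issues.append("no citations")
    for source_id in response.citations:
        if source_id not in APPROVED_SOURCES:
            issues.append(f"unapproved source: {source_id}")
    return issues
```

A response that fails this check can be held back or routed to a person, and the returned list doubles as an audit record of why.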

Permission-aware retrieval sits at the center of this architecture. Traditional support tools often treat access control as an afterthought, bolting permissions onto the response layer after the model has already processed sensitive data. A compliance-first design enforces permissions upstream — during retrieval — so the model never encounters information the end user or agent should not access. This approach addresses data leakage at the architectural level rather than relying on post-generation filters.
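
The upstream enforcement described above can be sketched roughly as follows. This is an illustrative toy, not any vendor's API: the `Document` type, the group-based access model, and the keyword match that stands in for real search are all assumptions. The point is the ordering: the access filter runs before matching, so restricted text never reaches the model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # groups permitted to read this document


def retrieve_permitted(
    query: str, corpus: list[Document], user_groups: set[str]
) -> list[Document]:
    """Filter by access first, then match; only the result is passed to the model."""
    # Step 1: drop everything the requester is not entitled to see.
    visible = [d for d in corpus if d.allowed_groups & user_groups]
    # Step 2: naive keyword match as a stand-in for a real search backend.
    terms = query.lower().split()
    return [d for d in visible if any(t in d.text.lower() for t in terms)]
```

Because the filter sits in front of the search step, a post-generation redaction pass becomes a second line of defense rather than the only one.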

The compliance surface in support is broader than most teams initially expect. A single customer interaction might involve identity verification, account data lookup, policy interpretation, a system action, and a disclosure requirement — each governed by different rules. AI compliance training must account for all of these moments, not just the final text the customer reads. The agent's internal reasoning, tool selection, and data access patterns all fall within the compliance boundary, and each layer needs its own controls, tests, and audit trail.

How to train an AI agent to follow compliance guidelines during support

A reliable training program begins with a small slice of support work, not the full queue. Choose one or two flows where the rule set is stable, the customer outcome is easy to judge, and the team can watch performance closely without broad exposure.

Typical starting points include policy questions, shipment or order updates, simple account inquiries, and step-by-step troubleshooting. These cases let teams define concrete targets such as lower handle time, fewer policy exceptions, and cleaner case notes before the agent touches sensitive approvals or high-risk disclosures.

Start with bounded workflows

Early workflows should have a narrow decision surface. The agent should pull from a limited set of sanctioned sources, follow a short sequence of steps, and operate within a known risk tier. Informational support tasks usually fit this model well because they rely on current documentation and have fewer edge cases than disputes, exceptions, or account changes.

This stage also gives the team a chance to map the work with precision. Each flow should spell out the trigger, the data the agent may consult, the language it may use, the actions it may propose, and the points where a specialist must take over. That map turns an abstract policy requirement into a support process the organization can test and improve.
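
One way to make that map testable is to write it down as data. The sketch below assumes a hypothetical `WorkflowSpec` structure with one field per element of the map; the field names and the example order-status flow are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowSpec:
    name: str
    trigger: str                         # what starts the flow
    allowed_sources: tuple[str, ...]     # data the agent may consult
    approved_phrasing: tuple[str, ...]   # language the agent may use
    proposable_actions: tuple[str, ...]  # actions the agent may propose
    handoff_conditions: tuple[str, ...]  # points where a specialist takes over


# Hypothetical example flow; every value here is an assumption.
ORDER_STATUS = WorkflowSpec(
    name="order_status",
    trigger="customer asks where an order is",
    allowed_sources=("order_system", "kb/shipping-times"),
    approved_phrasing=("Your order is currently {status}.",),
    proposable_actions=("share_tracking_link",),
    handoff_conditions=("order_not_found", "delivery_dispute"),
)


def may_propose(spec: WorkflowSpec, action: str) -> bool:
    """True only if the action is explicitly listed for this workflow."""
    return action in spec.proposable_actions
```

Anything not listed is denied by default, which keeps the decision surface as narrow as the written map.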

Build the five foundations into the system

Strong training depends on five operational layers. Each layer answers a different compliance question, and each one needs clear ownership.

- Curated source material: Limit the agent to current help content, approved internal procedures, product references, and sanctioned response language. Remove duplicates, retire stale articles, and resolve conflicts before launch so the agent does not inherit confusion from the knowledge base.

- Authorization-scoped lookup: The support system should narrow what the agent can fetch based on role, case context, and customer entitlement. This protects restricted notes, internal escalations, and sensitive records from accidental exposure.

- Machine-readable policy logic: Rewrite policy into rules the system can apply. That includes eligibility checks, required disclaimers, exception criteria, hold conditions, and refusal patterns for requests that fall outside policy.

- Guarded task execution: Split information access from state changes. Reading an order status may require one set of controls; a refund, profile update, or ticket closure may require another plus a manual checkpoint.

- Structured quality review: Use test suites, case replay, red-team scenarios, and sampled human review to catch drift, edge-case failures, and missed rules. The point is not just to score fluency; it is to verify policy fit under real support pressure.

These layers should connect to each other in a deliberate way. A well-written response template cannot correct a broken approval path, and a strong search result cannot fix a missing entitlement check.
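
To illustrate the machine-readable policy layer, here is a small sketch of a refund rule expressed as code. The 30-day window, the disclaimer wording, and the rule that opened items need exception review are invented assumptions, not a real policy; `RefundRequest` and `evaluate_refund` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

REFUND_WINDOW_DAYS = 30  # assumed window, for illustration only
REQUIRED_DISCLAIMER = "Refunds are returned to the original payment method."


@dataclass
class RefundRequest:
    purchase_date: date
    request_date: date
    item_opened: bool


def evaluate_refund(req: RefundRequest) -> dict[str, Optional[str]]:
    """Apply the eligibility check, exception criterion, and required disclaimer."""
    days_since_purchase = (req.request_date - req.purchase_date).days
    if days_since_purchase > REFUND_WINDOW_DAYS:
        # Refusal pattern: request falls outside policy.
        return {"decision": "refuse", "reason": "outside refund window", "disclaimer": None}
    if req.item_opened:
        # Exception criterion: a person decides, not the agent.
        return {"decision": "escalate", "reason": "opened item needs exception review", "disclaimer": None}
    return {"decision": "approve", "reason": "within window, unopened", "disclaimer": REQUIRED_DISCLAIMER}
```

Keeping the rule in code means a policy change is a reviewed edit to one constant or branch rather than a rewrite of prompt text.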

Turn compliance into workflow logic

Policy needs translation before an agent can apply it with consistency. Legal and procedural documents often describe obligations in dense language, while support operations need explicit conditions, branch rules, and concrete next steps. The practical move is to convert those documents into decision trees, workflow steps, and control points that match how support work actually happens.

That translation should tie directly to moments inside the service journey. An account verification step may require one branch. A request for an exception may require another. A product warranty issue, a regulated disclosure, or a request to modify customer data may each need separate treatment with distinct approvals, disclosures, and logging requirements. When those branches live inside the workflow, the agent has less room to guess.
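
A rough sketch of those branches as a lookup table is shown below. The request types, approver roles, disclosure names, and log fields are all hypothetical; the design choice worth noting is the fallback, which sends any unrecognized request to a person instead of letting the agent improvise.

```python
# Each branch states its own approval, disclosure, and logging requirements.
# All keys and values are illustrative assumptions.
BRANCHES = {
    "account_verification": {
        "approval": None,
        "disclosure": None,
        "log_fields": ("verification_method",),
    },
    "policy_exception": {
        "approval": "team_lead",
        "disclosure": None,
        "log_fields": ("exception_reason", "approver"),
    },
    "customer_data_change": {
        "approval": "specialist",
        "disclosure": "data_change_notice",
        "log_fields": ("field_changed", "approver"),
    },
}

# Unknown request types default to a human handoff.
FALLBACK = {"approval": "human_agent", "disclosure": None, "log_fields": ("raw_request",)}


def branch_for(request_type: str) -> dict:
    """Return the requirements for a request type, or the human-handoff fallback."""
    return BRANCHES.get(request_type, FALLBACK)
```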

The underlying stack should support that design with live context from the support environment. The strongest setups connect the agent to real case state, policy repositories, product records, and operational controls so a rule update appears in the support experience without a full model retrain. That gives teams a practical route to effective AI compliance training because the controls live where the work happens.

Define handoffs, approvals, and failure paths

No support agent should operate as if every case fits its scope. Some issues belong with frontline support; others belong with billing operations, risk teams, engineering, or a human supervisor. A compliant design makes those boundaries explicit before launch and treats escalation as a normal part of the workflow rather than as an exception.

Approval points matter just as much. A low-risk informational reply may move straight to the customer, while a write action or policy exception may require a manager check, a second system validation, or a specialist review. The same applies to failure paths: uncertain identity, conflicting records, unsupported exceptions, and suspected abuse should trigger a controlled pause, not a creative answer.
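
The routing described above might look like the following sketch. The signal names mirror the failure paths listed in the text, while the `Outcome` enum, the set of write actions, and the `route` function are assumptions made for illustration.

```python
from enum import Enum


class Outcome(Enum):
    SEND = "send"                      # low-risk reply goes straight to the customer
    NEEDS_APPROVAL = "needs_approval"  # write action waits for a checkpoint
    PAUSE = "pause"                    # controlled stop and handoff


PAUSE_SIGNALS = {
    "uncertain_identity",
    "conflicting_records",
    "unsupported_exception",
    "suspected_abuse",
}
WRITE_ACTIONS = {"refund", "profile_update", "ticket_closure"}  # assumed list


def route(action: str, signals: set[str]) -> Outcome:
    """Failure signals win over everything; write actions need approval."""
    if signals & PAUSE_SIGNALS:
        return Outcome.PAUSE
    if action in WRITE_ACTIONS:
        return Outcome.NEEDS_APPROVAL
    return Outcome.SEND
```

Checking the pause signals first is deliberate: an uncertain identity should stop even a harmless-looking informational reply.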

Use memory, feedback, and audit loops to refine behavior

Support policy does not stay still. Products change, regulations shift, and teams discover new edge cases only after live use. The agent needs a disciplined update process that collects problem cases, tags failure patterns, and turns those lessons into better workflows, stronger source content, and sharper controls.

That process should combine automated scoring with expert judgment. Automated checks can flag source mismatch, policy branch errors, missing disclosures, or unsafe task execution across large case volumes. Human reviewers should focus on ambiguous interactions, industry-specific obligations, and the cases that reveal gaps in the workflow design. Over time, that review discipline helps the agent align more closely with the organization's support guidelines without turning compliance into a manual bottleneck.
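
A minimal sketch of the automated side of that loop follows. It assumes each logged case is a dictionary with the hypothetical keys shown in the comments; the flag names echo the checks named in the text, and the tally gives reviewers a view of which failure patterns recur.

```python
from collections import Counter


def score_case(case: dict) -> list[str]:
    """Flag one logged case. Expected keys (assumed schema): cited_sources,
    retrieved_sources, required_disclosures, disclosures_given,
    write_action (bool), approved (bool)."""
    flags = []
    # Every cited source should be one the retrieval step actually returned.
    if not set(case["cited_sources"]) <= set(case["retrieved_sources"]):
        flags.append("source_mismatch")
    # Every required disclosure should appear in the reply.
    if set(case["required_disclosures"]) - set(case["disclosures_given"]):
        flags.append("missing_disclosure")
    # A state change without a recorded approval is unsafe.
    if case["write_action"] and not case["approved"]:
        flags.append("unsafe_task_execution")
    return flags


def flag_counts(cases: list[dict]) -> Counter:
    """Tally flags across many cases to surface recurring failure patterns."""
    return Counter(flag for case in cases for flag in score_case(case))
```

Cases with no flags can be sampled lightly, while flagged cases and ambiguous ones go to human reviewers.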

Frequently Asked Questions

Teams usually reach this stage once the first pilot shows promise and the operating questions become more concrete. The work shifts from “can the agent answer” to “can the organization run this safely, repeatedly, and with evidence.”

1. What are the best practices for training AI agents on compliance?

The best programs train people and systems at the same time. That means role-based instruction for support leads, compliance teams, IT, security, and workflow owners — not just a generic module for everyone who touches AI in customer support.

A mature program also uses realistic practice instead of policy review alone. Scenario-based exercises, short refreshers, and tabletop simulations help teams apply compliance standards for AI under pressure, especially when a customer asks for an exception, disputes a decision, or presents incomplete information.

The most durable practices tend to look like this:

- Role-based learning paths: Support managers need policy enforcement and escalation judgment; administrators need configuration discipline; compliance leads need documentation and review methods.

- Scenario training tied to live support patterns: Exercises should reflect the actual queue — disputed charges, identity-sensitive requests, regulated disclosures, service outages, and account recovery.

- Frequent refresh cycles: Quarterly updates work better than annual awareness sessions because policy, tooling, and risk change quickly.

- Attestations and comprehension checks: Short assessments, signed acknowledgments, and role certifications give teams a record that training happened and that key concepts were understood.

- Cross-functional ownership: Compliance works better when support, legal, security, and operations share one review rhythm instead of separate checklists and separate vocabularies.

2. How can compliance guidelines be integrated into AI training?

The cleanest approach treats compliance guidance as a maintained operating asset with version control, owners, and release procedures. Teams should map each policy requirement to a support scenario, a training module, and a control owner so that changes in regulation or internal policy trigger a visible update instead of a quiet drift.

This also helps with policy change management. A revised retention rule, disclosure requirement, or data handling standard should not wait for an annual retraining cycle. It should flow into the training library, the job aid, the review checklist, and the related support runbook through a defined release process.

A useful integration model includes three layers:

- Policy mapping: Break large policies into specific obligations by support event — identity checks, cancellations, benefit inquiries, sensitive record access, or refund disputes.

- Training delivery: Assign those obligations through an LMS or internal learning system by role, risk level, and system access.

- Operational reinforcement: Pair each training topic with job aids, exception guides, and manager review routines so staff can apply the rule consistently after the lesson ends.

This method turns AI compliance training into an operating discipline with maintenance, ownership, and traceability rather than a one-time educational asset.
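
The mapping and versioning idea can be sketched as a small registry. The `Obligation` record and its fields are hypothetical, as are the policy IDs in the usage note; the function simply lists every mapped item whose recorded policy version no longer matches the current one, which is the "visible update" the text calls for.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Obligation:
    policy_id: str
    policy_version: str   # version this mapping was last reviewed against
    support_event: str    # e.g. identity check, cancellation, refund dispute
    training_module: str
    control_owner: str


def stale_obligations(
    obligations: list[Obligation], current_versions: dict[str, str]
) -> list[Obligation]:
    """Return mappings reviewed against an outdated (or missing) policy version."""
    return [
        o for o in obligations
        if current_versions.get(o.policy_id) != o.policy_version
    ]
```

For example, if a hypothetical `retention` policy moves from version `2.0` to `2.1`, every obligation still recorded against `2.0` shows up in the result along with its owner and training module, so the update has a named person attached.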

3. What tools are available for AI compliance training?

The most useful tooling usually falls into six categories, and each serves a different part of the control model. Strong programs rarely rely on one product because training, governance, testing, and incident handling have different requirements.

An effective stack often includes:

- Learning management systems: These handle course assignment, completion tracking, attestations, refresher schedules, and audit-ready reports.

- Governance, risk, and compliance platforms: These connect training to broader control frameworks, risk registers, issue tracking, and remediation workflows.

- AI governance platforms: These support model inventories, use-case classification, approval workflows, monitoring, and documentation such as model cards or system records.

- Policy management repositories: These give employees a current source for acceptable-use rules, prohibited-use lists, response standards, and exception procedures.

- Technical assurance tools: These include fairness tests, explainability checks, red-teaming methods, privacy scanners, and misuse detection.

- Incident and vendor review systems: These support escalation intake, case management, root-cause analysis, and third-party diligence for external models or service providers.

The real value comes from coordination across those layers. A support lead should be able to trace one requirement from policy language to training record, risk owner, approval history, and incident path without piecing together five disconnected systems.

4. What challenges might arise when training AI for compliance?

One of the hardest problems is regulatory drift. Rules change, internal policies change, and support content changes — but many organizations fail to update training, review criteria, and operational guidance at the same pace. The result is not a dramatic system failure; it is a slow mismatch between what the organization thinks the agent should do and what teams actually enforce.

Another frequent challenge is ownership ambiguity. Support may assume legal owns the rules, legal may assume IT owns implementation, and security may focus only on technical controls. When no one owns the full training lifecycle, gaps appear in refresh schedules, exception handling, and incident follow-up.

Other issues show up often when training AI for compliance:

- Overly broad instruction: High-level principles like “be careful with sensitive data” do not help much in a real support queue without examples, thresholds, and exception logic.

- Weak documentation discipline: Teams launch new support use cases without current records of policy interpretation, reviewer sign-off, or test history.

- Blind spots around third parties: External models, plugins, and service providers may introduce obligations that internal teams never covered in training.

- Metrics that track completion but not readiness: A 100% course completion rate says little if no one measures error patterns, escalation quality, or incident response speed.

- Claims risk: Marketing, product, and support teams may describe the agent’s capabilities too broadly, which creates exposure when public-facing language outpaces actual controls.

The practical challenge is less about intelligence and more about operational discipline. The organizations that struggle most are usually not short on policy; they are short on maintenance, ownership, and measurable review.

5. How do compliance requirements differ across industries for AI agents?

The baseline training model can stay consistent, but the emphasis changes sharply by industry. Financial services teams usually need stronger training around disclosure discipline, books-and-records obligations, transaction-related communication, and suitability boundaries. Healthcare teams need closer focus on protected health information, minimum necessary access, consent handling, and strict limits on how support staff view or share patient data.

Education and public sector environments often add their own constraints. Education teams may need training that reflects student privacy rules, parent access boundaries, and institutional record handling. Public sector programs often place more weight on procurement controls, records retention, transparency obligations, and heightened scrutiny around automated decisions.

A useful approach is to keep one enterprise framework, then layer industry modules on top:

- Core enterprise module: common operating rules, review expectations, incident paths, and documentation practices

- Industry module: sector-specific duties, restricted data types, disclosure language, and retention rules

- Use-case module: the exact support environment — benefits support, account servicing, claims intake, patient communications, student services, or regulated product support

That structure supports regulatory compliance for AI agents without forcing every team into the same rulebook. The common framework creates consistency; the industry layer keeps the training accurate enough to hold up in real operations.
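
As a sketch of the layering, the function below merges three rule sets so that more specific layers override more general ones. The override order is a design assumption, and every key and value in the example is illustrative only, not a statement of any actual regulatory requirement.

```python
def build_rulebook(core: dict, industry: dict, use_case: dict) -> dict:
    """Merge layers; later (more specific) layers override earlier ones."""
    rulebook: dict = {}
    for layer in (core, industry, use_case):
        rulebook.update(layer)
    return rulebook


# Illustrative layers; all values are made up for the example.
CORE = {"log_interactions": True, "retention_days": 365, "escalation_contact": "support-lead"}
HEALTHCARE = {"retention_days": 2190, "restricted_data": ("phi",)}
PATIENT_COMMS = {"escalation_contact": "privacy-officer"}

patient_rulebook = build_rulebook(CORE, HEALTHCARE, PATIENT_COMMS)
```

Here `patient_rulebook` keeps the core logging rule, takes its retention period and restricted data types from the industry layer, and takes its escalation contact from the use-case layer, while `CORE` itself is left unchanged for other teams.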

The difference between a compliant AI support program and a risky one is rarely a single decision — it is the sum of hundreds of small operational choices made consistently over time. The organizations that get this right treat compliance training as a living system with clear ownership, measurable review cycles, and architecture that enforces rules where the work actually happens.
