Which team should lead your AI search implementation project

AI search implementation sits at the intersection of technology, organizational knowledge, and daily workflows. It demands more than a software rollout — it requires a deliberate team structure, clear ownership, and alignment across departments that rarely share a project plan.

The difference between a successful deployment and a stalled initiative usually comes down to people, not platforms. Organizations that treat AI search as a cross-functional capability, rather than an IT-only project, tend to see faster adoption, stronger relevance, and more measurable productivity gains.

This guide breaks down the roles, team structures, and skills that drive successful AI search implementations. It also covers the common failure points that derail projects and the long-term practices that keep enterprise search sharp as organizations evolve.

What is an AI search implementation project?

An AI search implementation project deploys intelligent search capabilities across an organization's tools, data, and workflows. Unlike traditional keyword-based search, AI search relies on machine learning, natural language processing (NLP), and knowledge graphs to deliver contextual, permission-aware results that reflect how employees actually look for information. The goal is not just retrieval — it's surfacing the right answer, from the right source, for the right person, at the right moment.

These projects touch every department. Engineering teams need access to documentation and code repositories. Sales teams search for competitive intelligence and deal history. HR teams look up policies and onboarding materials. Customer support teams pull from knowledge bases and past ticket resolutions. Because AI search connects to the systems everyone uses daily — documents, wikis, messaging platforms, ticketing tools, CRMs — the scope of implementation is inherently broad. Data integration, model configuration, security enforcement, and ongoing optimization all fall within the project boundary.

What separates AI search from a simple search bar upgrade is the depth of the underlying architecture. A well-designed system combines several layers:

- Hybrid search architecture: Semantic search models understand the meaning behind a query, while lexical search handles exact-match needs like document titles or product codes. Together, they cover the full range of how people search — from broad exploratory questions to precise lookups.

- Knowledge graphs: These map relationships between people, content, teams, and activity data. A knowledge graph helps the system understand that a query about "Q3 revenue targets" should prioritize results from the finance team's most recent planning documents — not a two-year-old slide deck with similar keywords.

- Permission-aware retrieval: Enterprise search must respect existing access controls. Every result a user sees should align with the permissions already set in the source application. This is a foundational requirement, not a feature toggle.

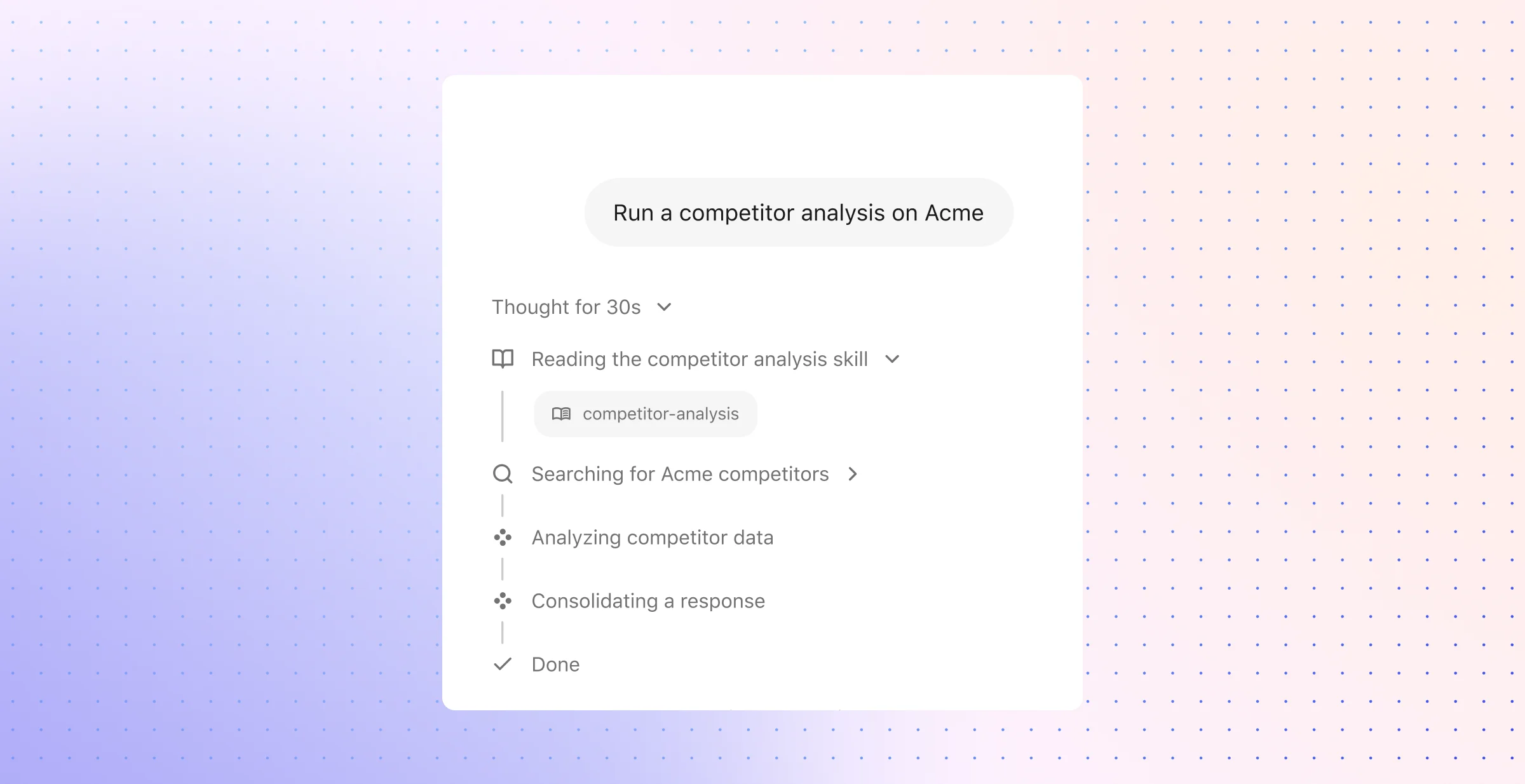

- Retrieval-augmented generation (RAG): Many modern AI search implementations pair search with large language models to generate direct answers grounded in company data. RAG pipelines plan a query, retrieve relevant documents with strict permission enforcement, and then generate a response with citations — a process that demands tight coordination between search infrastructure and language model behavior.
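
The hybrid layer can be sketched in a few lines. The example below fuses a lexical result list and a semantic result list with reciprocal rank fusion (RRF), one common fusion method. The source does not say which method a given platform uses, so treat RRF, the constant `k=60`, and the document IDs as assumptions. In a real pipeline, permission filtering and answer generation would follow this step.

```python
from collections import defaultdict


def reciprocal_rank_fusion(ranked_lists, k=60):
    """Fuse several ranked lists of document IDs into a single ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    with rank starting at 1, so documents that both retrievers rank
    highly rise to the top of the fused list.
    """
    scores = defaultdict(float)
    for ranking in ranked_lists:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for a stable order.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical top results from a lexical (exact-match) retriever and a
# semantic (embedding-based) retriever for the same query.
lexical_hits = ["SKU-1042-spec", "pricing-faq", "q3-plan"]
semantic_hits = ["q3-plan", "q3-targets-finance", "pricing-faq"]
print(reciprocal_rank_fusion([lexical_hits, semantic_hits]))
# → ['q3-plan', 'pricing-faq', 'SKU-1042-spec', 'q3-targets-finance']
```

`q3-plan` comes out on top because both retrievers place it in their top three, while items found by only one retriever fall behind. RRF works on ranks rather than raw scores, which avoids having to calibrate lexical and embedding scores against each other.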

The scope of an AI search project also extends beyond the initial launch. Connector quality, meaning how well the system ingests and indexes content from each integrated application, directly affects result freshness, metadata fidelity, and overall trust. Teams must plan for continuous tuning, new data source onboarding, and evolving security requirements as the organization grows. Getting the team structure right from the start is one of the most consequential decisions in an AI search program, because every downstream choice, from relevance tuning to governance, depends on who owns it.

Why the wrong team structure causes AI search projects to fail

Misaligned ownership turns a platform project into an orphaned experiment

Enterprise AI programs tend to follow a recurring pattern: they stall when leadership treats them as technical deployments instead of operating-model changes. AI search is no exception. It reshapes how people verify answers, where teams look for authoritative information, and how work moves across systems; without a single accountable owner, those shifts never receive consistent direction.

That gap shows up early. One group prioritizes launch speed, another pushes for broader source coverage, and a third raises risk concerns after core decisions have already been made. The project then slips into committee mode — lots of input, little authority, no durable tradeoff framework. Search quality becomes harder to improve because nobody owns the full chain of decisions around source prioritization, rollout scope, user trust, and business impact.

A strategy tied to business outcomes changes that dynamic. A project scoped around support deflection, faster onboarding, lower time-to-answer, or stronger policy compliance has a clear basis for decision-making. A project framed as a general AI initiative rarely gets that discipline; it accumulates ambition faster than it builds accountability.

Single-team control creates blind spots that users feel fast

A technology team on its own may excel at deployment, architecture, and service reliability. That still leaves major gaps: content stewardship, source authority, training, rollout sequencing, and workflow validation. The result often looks complete on a project plan and incomplete to the people expected to rely on it every day.

A business-led effort can miss in the opposite direction. Leaders may identify the right use case and still underestimate identity controls, compliance review, audit requirements, production support, or the operational burden of dozens of enterprise integrations. That mismatch creates delay, rework, and avoidable risk. Surveys of enterprise data leaders have reinforced this point: organizational and cultural barriers block AI progress more often than raw technical limitations.

The failure pattern usually appears in a few predictable forms:

- Decision rights stay fuzzy: source selection, rollout criteria, and model behavior require fast calls; without a designated owner, each issue waits for ad hoc approvals.

- Governance enters too late: security, privacy, legal, and risk teams review near the end; retention rules, audit logs, and access boundaries then force redesign.

- Content authority goes unmanaged: outdated policies, duplicate pages, and low-trust repositories remain in scope because no business owner validates what should count as the answer of record.

- Metrics split by function: platform teams report uptime and response speed; business leaders look for case deflection, time saved, or adoption by role; no shared scorecard means weak prioritization.

- Pilot logic never scales: a proof of concept works for one team, yet no operating model exists for new stakeholders, additional sources, or long-term evaluation.

The same problem grows sharper as search expands into richer AI experiences. Systems that answer questions directly or support multi-step work need aligned review across planning, policy, quality checks, and end-user validation. Fragment those responsibilities across separate silos, and the organization gets inconsistency instead of confidence.

Essential roles for leading an AI search implementation project

Role design shapes the outcome before the first connector goes live. AI search reaches across systems, policy, and everyday work, so the leadership bench must match that scope.

The best implementations pair senior sponsorship with operators who can move fast and specialists who can protect quality. Each role below covers a separate risk area — strategy drift, execution slippage, weak source coverage, access mistakes, or poor fit with real work.

Executive sponsor

The executive sponsor gives the project organizational weight. This role usually sits with a CIO, CTO, chief digital officer, or transformation executive who can tie AI search to a business plan, secure funding, and settle disputes that sit above the project team.

This leader does three jobs that no one else can do as well:

- Set the mandate: The sponsor frames AI search as a core initiative tied to concrete outcomes such as faster case resolution, shorter ramp time for new hires, or better access to policy and technical knowledge.

- Win cooperation across functions: Search touches systems and content owned by many groups. The sponsor can unlock participation from IT, security, HR, support, and business leaders when priorities clash.

- Protect momentum: Enterprise projects often slow down when teams debate access to systems, staffing, or rollout order. A strong sponsor keeps those decisions from lingering.

Without this role, the project often looks important in theory and optional in practice.

AI project lead

The AI project lead runs the operating rhythm of the implementation. This person converts business goals into workstreams, keeps technical and nontechnical teams aligned, and makes sure open issues do not pile up between meetings.

The role needs a rare mix of judgment and range. Technical literacy matters because the lead must understand source coverage, ranking quality, sync behavior, and access controls well enough to challenge assumptions. Strong communication matters just as much because the same person must explain tradeoffs to engineers, legal teams, executives, and department heads in plain language.

A capable project lead also keeps decision velocity high. They maintain the backlog, track dependencies, and close routine questions without constant escalation. AI support can reduce administrative drag here — weekly readouts, risk digests, stakeholder notes, and action trackers all become easier to maintain — but the lead still owns prioritization, sequence, and release readiness.

Data and infrastructure engineers

Data and infrastructure engineers build the foundation that determines whether the search experience feels complete or unreliable. Their scope includes source integration, schema normalization, document parsing, identity mapping, sync cadence, and index health across content systems such as ticketing platforms, shared drives, chat tools, intranets, and CRM records.

In enterprise AI search, technical depth at this layer has a direct effect on trust. A missed sync can hide a critical article. A weak parser can strip structure from a policy document. Poor identity mapping can confuse authorship and ownership. Inaccurate source attributes can damage ranking, filtering, and answer quality even when the model itself performs well.

This team usually works across search engineering, platform engineering, and data engineering. Their core responsibility is simple to state and hard to execute: make the organization’s knowledge available in a form that is current, structured, and reliable enough for search and answer generation to use with confidence.
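
To make "current, structured, and reliable" concrete, here is a minimal sketch of schema normalization: two connectors with different export shapes are mapped into one hypothetical `IndexedDocument` record. The field names, export formats, and the choice to append ticket comments to the body are illustrative assumptions, not any specific product's connector API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class IndexedDocument:
    """Hypothetical common record that every connector's output is mapped into."""
    doc_id: str
    source: str
    title: str
    body: str
    author_email: str
    updated_at: datetime
    allowed_groups: frozenset


def normalize_wiki_page(raw):
    # Assumed wiki export shape: numeric id, epoch-second timestamps, nested author.
    return IndexedDocument(
        doc_id=f"wiki:{raw['id']}",
        source="wiki",
        title=raw["title"].strip(),
        body=raw["content"],
        author_email=raw["author"]["email"].lower(),
        updated_at=datetime.fromtimestamp(raw["last_modified"], tz=timezone.utc),
        allowed_groups=frozenset(raw.get("space_groups", [])),
    )


def normalize_ticket(raw):
    # Assumed ticket export shape: ISO-8601 timestamps with an explicit UTC offset.
    # Comments are appended to the body so thread context survives ingestion.
    comments = "\n".join(c["text"] for c in raw.get("comments", []))
    return IndexedDocument(
        doc_id=f"ticket:{raw['key']}",
        source="tickets",
        title=raw["subject"].strip(),
        body=f"{raw['description']}\n{comments}".strip(),
        author_email=raw["reporter_email"].lower(),
        updated_at=datetime.fromisoformat(raw["updated"]),
        allowed_groups=frozenset(raw.get("visible_to", [])),
    )


page = normalize_wiki_page({
    "id": 17, "title": " Travel policy ", "content": "Book economy class.",
    "author": {"name": "Ana", "email": "Ana@Example.com"},
    "last_modified": 1714557600, "space_groups": ["all-staff"],
})
ticket = normalize_ticket({
    "key": "SUP-9", "subject": "VPN drops", "description": "User reports drops.",
    "comments": [{"text": "Fixed by reinstalling the client."}],
    "reporter_email": "Bo@Example.com", "updated": "2024-05-01T10:00:00+00:00",
    "visible_to": ["support"],
})
print(page.title, page.updated_at.isoformat())
print(ticket.body)
```

Lower-casing email addresses is a small example of identity mapping, and converting both timestamp formats to timezone-aware datetimes lets freshness checks compare sources directly.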

Security and governance lead

The security and governance lead defines the boundaries of acceptable use. This role owns access policy, compliance requirements, audit readiness, data handling rules, and review standards for sensitive repositories.

That work cannot wait until the end of the project. Architectural choices around identity, logging, model provider controls, retention, and source inclusion all carry policy implications from the start. A security review that happens after implementation usually leads to redesign, delay, or reduced scope.

A strong lead in this area focuses on practical controls:

- Access consistency: Search should honor the same source-system rules that already govern a file, wiki page, support note, or HR record.

- Risk classification: The team needs clear rules for highly sensitive content, excluded repositories, and restricted use cases.

- Audit posture: Regulated environments often require clear logs, review paths, and defensible controls for how answers draw from enterprise data.
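
Access consistency can be illustrated with a filter that reapplies the source system's rule before anything reaches the user. This is a simplified sketch: it assumes each hit carries a user and group ACL copied from the source at index time (hypothetical `allowed_users` and `allowed_groups` fields) and that group membership is already resolved. Production systems generally enforce permissions inside the index query as well, so restricted documents never feed ranking or answer generation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SearchHit:
    doc_id: str
    # ACL copied from the source system at index time (hypothetical fields).
    allowed_users: frozenset = frozenset()
    allowed_groups: frozenset = frozenset()


def can_view(user, user_groups, hit):
    """Mirror the source rule: an explicit user grant or a shared group membership."""
    return user in hit.allowed_users or not hit.allowed_groups.isdisjoint(user_groups)


def permission_filter(user, user_groups, hits):
    # Deny by default: a hit with an empty ACL matches nobody and is never shown.
    return [hit for hit in hits if can_view(user, user_groups, hit)]


hits = [
    SearchHit("hr-salary-bands", allowed_groups=frozenset({"hr"})),
    SearchHit("employee-handbook", allowed_groups=frozenset({"all-staff"})),
    SearchHit("ana-draft", allowed_users=frozenset({"ana"})),
    SearchHit("acl-missing"),
]
print([h.doc_id for h in permission_filter("bo", {"all-staff"}, hits)])
```

Treating an empty ACL as visible to nobody is the deny-by-default choice; the opposite default is how a failed permissions sync turns into an exposure.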

This role matters even more once search starts to power summaries, chat responses, and workflow actions. A single access mistake can undermine confidence far beyond one bad result.

Domain experts and business stakeholders

Department representatives bring the practical test that technical teams cannot supply on their own. People from engineering, customer support, sales, HR, finance, and IT know which sources carry authority, which terms matter inside their teams, and which results count as useful in the middle of real work.

Their contribution tends to fall into three areas:

- Content judgment: They identify the repositories and document types that deserve priority for their function.

- Language calibration: They help the team account for internal acronyms, product names, team-specific shorthand, and role-based search intent.

- Workflow fit: They test whether results help someone complete a task such as account research, incident response, onboarding, policy lookup, or case handling.

This role works best as a rotating group tied to major phases such as source onboarding, relevance review, and pilot validation. That structure keeps the core team small while still grounding the system in the language, habits, and expectations of the people who will rely on it.

How to structure a cross-functional AI search team

A strong team design starts with the business problem, not the org chart. Pick one workflow with clear value — policy lookup, technical documentation access, or case deflection — then assign a compact team with authority to make tradeoffs fast.

That structure should reflect how enterprise AI search actually ships. Product ownership sits at the center; technical ownership stays close to identity, data, and evaluation; business stakeholders step in where workflow knowledge matters most.

Start with a core team, not a committee

The first phase needs a delivery trio rather than a broad steering group. A business or product owner sets the target outcome; a technical lead maps source systems, access rules, and evaluation criteria; an implementation lead keeps dependencies, rollout tasks, and stakeholder communication on schedule.

- Business or product owner: Chooses the initial use case, defines success, and approves scope changes.

- Technical lead: Sets connector order, source priorities, identity requirements, and quality checks.

- Implementation lead: Coordinates reviews, pilot logistics, launch readiness, and issue resolution.

This setup reflects a common pattern in successful AI programs: one owner for business value, one owner for technical quality, and one owner for delivery. Teams move faster when each of those lanes has a named lead.

Use a hub-and-spoke model as the project grows

After the first release, add expertise through a hub-and-spoke model. The hub keeps standards consistent across evaluation, governance, and rollout policy; the spokes come from the departments whose content and workflows enter the next phase.

This model works well because enterprise search rarely expands in a straight line. Legal may need review of retention-sensitive content; finance may need strict validation around quarter-close materials; field operations may need mobile-friendly access to service documentation. A rotating spoke model brings in that expertise at the right moment without turning the core team into a permanent large program office.

Define decision rights early with a RACI model

A cross-functional team still needs sharp lines of authority. Set the RACI before connector work starts, especially for source approval, launch gates, exception handling, and quality sign-off.

- Responsible: The people who execute connector setup, indexing checks, relevance review, and enablement work.

- Accountable: One owner per decision area; no shared final approval.

- Consulted: Security, privacy, records management, legal, and departmental content owners.

- Informed: Teams affected by rollout, such as internal support, communications, and training leads.
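
A RACI chart is easy to keep as data and check automatically. The sketch below encodes the decision areas named above with hypothetical role holders and flags two common violations: anything other than exactly one Accountable owner, and no Responsible party.

```python
def check_raci(matrix):
    """Flag decision areas that break two basic RACI rules:
    exactly one Accountable owner, and at least one Responsible party."""
    problems = []
    for decision, roles in matrix.items():
        accountable = roles.get("A", [])
        if len(accountable) != 1:
            problems.append(
                f"{decision}: expected exactly one Accountable owner, found {len(accountable)}"
            )
        if not roles.get("R"):
            problems.append(f"{decision}: no Responsible party assigned")
    return problems


# Hypothetical assignments for the decision areas named above.
raci = {
    "source approval": {"R": ["data engineering"], "A": ["business owner"],
                        "C": ["security", "legal"], "I": ["internal support"]},
    "launch gates": {"R": ["implementation lead"], "A": ["business owner", "technical lead"],
                     "C": ["security"], "I": ["communications"]},
    "exception handling": {"A": ["security lead"], "C": ["privacy"], "I": ["training"]},
    "quality sign-off": {"R": ["search engineering"], "A": ["technical lead"],
                         "C": ["content owners"], "I": ["internal support"]},
}
for problem in check_raci(raci):
    print(problem)
```

Running a check like this whenever the chart changes catches shared final approvals before they turn into stalled decisions.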

This model reduces rework. It also prevents a common failure pattern: technical teams pause for policy input while business teams assume someone else approved the data scope.

Build a shared operating cadence

Team structure fails without a routine that matches the work. Set a fixed cadence for three reviews: implementation status, quality review, and adoption review.

Each review should look at a different signal set. The quality session should inspect empty-result rates, citation usage, low-confidence query groups, and permission exception rates; the adoption session should compare product usage with workflow outcomes such as faster policy resolution, fewer duplicate questions, or shorter time to find reference material. A shared cadence gives technical and business leads the same fact base before priorities shift.
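
The quality-review signals above can be computed from a query log. This sketch assumes a hypothetical `QueryEvent` record with a pre-assigned topic label, a retriever confidence score in [0, 1], and a flag for reported permission exceptions. The 0.3 confidence threshold and minimum group size are placeholders to tune, not recommendations.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class QueryEvent:
    query: str
    topic: str                  # hypothetical pre-assigned query group
    result_count: int
    top_score: float            # assumed retriever confidence for the best hit, in [0, 1]
    opened_citation: bool
    permission_exception: bool  # user report of over- or under-exposure


def quality_review(events, low_conf_threshold=0.3, min_group_size=2):
    """Summarize one review window of query events."""
    if not events:
        return {}
    total = len(events)
    answered = [e for e in events if e.result_count > 0]
    low_conf = Counter(e.topic for e in answered if e.top_score < low_conf_threshold)
    return {
        "empty_result_rate": sum(e.result_count == 0 for e in events) / total,
        "citation_usage": sum(e.opened_citation for e in answered) / len(answered) if answered else 0.0,
        "permission_exception_rate": sum(e.permission_exception for e in events) / total,
        "low_confidence_topics": sorted(t for t, n in low_conf.items() if n >= min_group_size),
    }


events = [
    QueryEvent("pto carryover", "hr-policy", 5, 0.82, True, False),
    QueryEvent("vpn error 809", "it-support", 0, 0.0, False, False),
    QueryEvent("expense limit", "finance", 3, 0.21, False, False),
    QueryEvent("per diem rates", "finance", 2, 0.18, False, True),
]
print(quality_review(events))
```

Citation usage is measured only over queries that returned results, so a spike in empty results does not also drag down the citation signal.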

Expand in phases, not all at once

Scale through stage gates rather than a broad company-wide launch. A practical sequence looks like this:

- Prove the first workflow: Validate one use case with a defined user group and a short feedback loop.

- Add adjacent repositories: Expand source coverage only after quality holds across the initial set.

- Broaden user access: Introduce new departments once training, support, and governance are ready.

- Extend the experience: Move into richer assistant behavior only after search quality stays stable.

Each phase should end with a go-or-no-go review tied to metrics, not enthusiasm. That discipline keeps cost under control, protects trust, and avoids the drag that comes with a large permanent team too early in the project.
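
A go-or-no-go review tied to metrics can be written down as explicit thresholds. The metric names and limits below are illustrative placeholders. The point of the sketch is that an unmeasured metric blocks the gate instead of passing silently.

```python
def gate_decision(metrics, thresholds):
    """Return ("go", []) only if every gated metric meets its threshold.

    thresholds maps a metric name to (direction, limit): "min" means the value
    must be >= limit, "max" means it must be <= limit. A metric that was not
    measured counts as a failure rather than a pass.
    """
    failures = []
    for name, (direction, limit) in thresholds.items():
        if direction not in ("min", "max"):
            raise ValueError(f"unknown direction {direction!r} for {name}")
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: not measured")
        elif direction == "min" and value < limit:
            failures.append(f"{name}: {value} < {limit}")
        elif direction == "max" and value > limit:
            failures.append(f"{name}: {value} > {limit}")
    return ("go" if not failures else "no-go"), failures


# Illustrative thresholds for leaving phase one; not recommended values.
PHASE_ONE_GATE = {
    "empty_result_rate": ("max", 0.10),
    "weekly_active_share": ("min", 0.40),
    "permission_incidents": ("max", 0),
}
decision, reasons = gate_decision(
    {"empty_result_rate": 0.07, "weekly_active_share": 0.35, "permission_incidents": 0},
    PHASE_ONE_GATE,
)
print(decision, reasons)
```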

Skills your AI search team needs to succeed

The right team does more than cover job titles. It brings the judgment to make sound tradeoffs when relevance, latency, governance, and usability pull in different directions.

That skill mix matters because AI search rarely fails in obvious ways. More often, it drifts — results feel slightly off, trusted sources lose visibility, access edge cases appear, and rollout momentum slows because no one can diagnose the full chain of issues with confidence.

Technical and system-level skills

Teams need practical fluency across the mechanics of enterprise AI, not just familiarity with AI terms. Search quality depends on dozens of small decisions — how queries get interpreted, how enterprise language maps to source content, how retrieval gets tuned, and how answer generation stays grounded under real usage conditions.

- Technical fluency: Team members should understand how ranking signals, embeddings, prompt design, orchestration logic, and model selection affect enterprise search behavior. That includes the ability to reason through tradeoffs such as fast responses versus deeper retrieval, or broad recall versus tighter precision.

- Systems thinking: Strong practitioners can trace a failure from symptom to root cause. They know when a weak answer points to bad chunking, poor entity resolution, thin metadata, noisy prompts, or weak test design rather than a vague “AI problem.”

- Connector evaluation: This skill should include an eye for schema drift, deleted-content handling, delta-sync windows, identity resolution, and document parsing edge cases. Teams that can inspect those details catch the quiet failures that distort search long before users file complaints.

- Data literacy: The work here goes beyond basic quality checks. Teams should know how to audit content patterns, spot blind spots in repository coverage, assess whether labels and taxonomies support retrieval, and identify where fragmented ownership weakens answer quality.
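
Several of the connector details above (delta-sync windows, deleted-content handling, schema drift) show up in even a toy sync loop. This sketch assumes an in-memory dict as the index and a source that can list all current document IDs. Real connectors often learn about deletions through change feeds or tombstone records instead, so treat the full-listing approach as a simplifying assumption.

```python
def apply_delta_sync(index, source_ids, changed_docs, required_fields):
    """Apply one incremental sync window to an in-memory index.

    index: doc_id -> document dict currently searchable
    source_ids: every doc ID the source system still lists (treated as authoritative)
    changed_docs: documents created or modified since the previous sync window
    required_fields: fields the index schema expects on every document
    """
    report = {"upserted": [], "quarantined": [], "deleted": []}
    for doc in changed_docs:
        missing = required_fields - set(doc)
        if missing:
            # Schema drift: hold the document back instead of indexing a partial record.
            report["quarantined"].append((doc.get("id"), sorted(missing)))
            continue
        index[doc["id"]] = doc
        report["upserted"].append(doc["id"])
    # Deleted-content handling: remove anything the source no longer lists,
    # so retired documents do not linger in results.
    for doc_id in sorted(set(index) - set(source_ids)):
        del index[doc_id]
        report["deleted"].append(doc_id)
    return report


index = {
    "a": {"id": "a", "title": "Old title", "body": "..."},
    "b": {"id": "b", "title": "Retired page", "body": "..."},
    "c": {"id": "c", "title": "Runbook", "body": "..."},
}
report = apply_delta_sync(
    index,
    source_ids={"a", "c", "d"},
    changed_docs=[
        {"id": "a", "title": "New title", "body": "..."},
        {"id": "d", "title": "New page", "body": "..."},
        {"id": "c", "body": "title field renamed upstream"},
    ],
    required_fields={"id", "title", "body"},
)
print(report)
```

The quarantined document keeps its last good version in the index, which is usually safer than replacing it with a record that lost its title.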

This skill set grows in importance once the system moves beyond direct lookup and into more compositional work. Teams with a working grasp of how a system can plan, choose tools, evaluate intermediate outputs, and revise its path are better equipped to support future assistant and agent experiences without architectural guesswork.

Adoption and execution skills

Successful teams treat rollout as an operating discipline, not a launch event. They know how to turn a technically capable system into one people rely on during routine work.

- Change management: Teams should know how to design role-based enablement, set realistic expectations, and introduce the system through concrete use cases instead of abstract AI messaging. Adoption improves when each group sees the feature in the context of its own work patterns, escalation paths, and decision points.

- Communication across functions: The team needs people who can write clear briefs, run efficient review meetings, document tradeoffs, and resolve disagreements without endless escalation. In practice, that means crisp language, decision logs, and a habit of translating technical findings into operational impact.

- AI-assisted project execution: Project leads can use AI to compress coordination work — draft meeting recaps, extract open issues from notes, convert stakeholder feedback into task lists, and maintain rollout documentation with less manual effort. Used well, that support improves project cadence without adding process overhead.

This is where many teams separate into two groups: those that keep momentum and those that lose it. The difference usually comes from execution discipline — how well the team communicates progress, captures decisions, and adjusts rollout plans as real usage reveals new constraints.

Judgment, trust, and responsible use

Enterprise AI search shapes attention. It influences which sources gain visibility, which answers appear settled, and where employees place confidence when time is short.

- Ethical judgment: Teams should recognize when system behavior can create uneven outcomes, expose sensitive context, or overstate certainty. That requires clear thinking about privacy boundaries, escalation policies, and the difference between a plausible response and a dependable one.

- Evaluation discipline: High-performing teams build structured test sets, compare outputs against business expectations, and review failure patterns by scenario rather than anecdote. They measure whether the system performs well for the queries that matter most, not just for generic benchmark cases.

- Collaboration and domain fluency: Teams need people who can absorb the language of each function quickly and turn that understanding into better tuning, testing, and rollout choices. In practice, that means recognizing where one team relies on acronyms, another on ticket IDs, and another on policy language — then shaping the experience accordingly.
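
Evaluation by scenario can start as simply as a labeled test set grouped by query type. This sketch computes hit rate at k (the share of queries with at least one relevant document in the top k) per scenario. The scenarios, queries, and stub `stub_search` function are hypothetical stand-ins for real employee questions and a real retriever.

```python
from collections import defaultdict


def evaluate_by_scenario(test_set, search, k=5):
    """Hit rate at k per scenario.

    test_set: list of (scenario, query, set of relevant doc IDs)
    search: callable that maps a query to a ranked list of doc IDs
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for scenario, query, relevant in test_set:
        totals[scenario] += 1
        if relevant & set(search(query)[:k]):
            hits[scenario] += 1
    return {scenario: hits[scenario] / totals[scenario] for scenario in sorted(totals)}


# Hypothetical stub retriever and test set drawn from three query styles.
FAKE_RESULTS = {
    "pto carryover": ["hr-pto-2024", "hr-pto-2019"],
    "SUP-1182": ["kb-vpn-setup"],
    "vpn": ["blog-vpn-history", "kb-vpn-setup"],
}


def stub_search(query):
    return FAKE_RESULTS.get(query, [])


TEST_SET = [
    ("policy language", "pto carryover", {"hr-pto-2024"}),
    ("ticket ID", "SUP-1182", {"ticket-SUP-1182"}),
    ("acronym", "vpn", {"kb-vpn-setup"}),
]
print(evaluate_by_scenario(TEST_SET, stub_search, k=1))
print(evaluate_by_scenario(TEST_SET, stub_search, k=5))
```

Breaking results out by scenario shows what an aggregate score would hide: here the ticket-ID lookups fail at every cutoff, while the acronym query only succeeds once k is large enough.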

The teams that stand out are rarely the ones with the most specialists on paper. They are the ones with enough technical range, operational discipline, and contextual judgment to improve the system in ways employees can feel.

Common challenges teams face during AI search implementation

Once a project moves from planning to execution, a different class of problems appears. The hardest issues usually come from operating discipline: weak escalation paths, uneven source readiness, unclear usage boundaries, and metrics that fail to reflect real employee behavior.

Unclear ownership slows progress

Many projects begin with broad executive support and still stall in day-to-day execution. The problem often sits in the gray areas: who approves sensitive repositories, who resolves duplicate source conflicts, who decides when a department-specific complaint requires a relevance change, and who owns the backlog after launch. A steering committee can approve strategy, but it rarely handles operational tradeoffs well.

This gap creates friction long after kickoff. Access exceptions move to one team, content issues to another, search complaints to a third, and no one owns the full incident from report to fix. That pattern does more than slow work — it makes the system feel unreliable because users never know where problems go or how quickly they will get resolved.

Data complexity appears fast

Enterprise information has far more variation than most teams expect at the start. One system stores rich metadata and clean titles; another stores fragmented threads, attachments, and comments with little structure. Some sources reflect updates within minutes, while others depend on scheduled syncs or brittle export jobs. Search quality can drop sharply when those differences stay unresolved.

A few data problems show up with unusual frequency:

- Identity fragmentation: The same employee may appear under different usernames, emails, or group memberships across systems. That mismatch can distort access rules, ownership signals, and people-related results.

- Version sprawl: The same policy, FAQ, or deck may exist in several tools at once. Without clear source preference rules, outdated copies can outrank the current version.

- Archive leakage: Old project spaces, retired documentation, and closed ticket systems often remain accessible to the index. That content can pollute results unless the team sets clear lifecycle rules.

- Structure loss: Threads, comments, and attached files can lose context during ingestion. Once that structure disappears, the retrieved result may look complete even when key meaning is missing.
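
Source preference rules for version sprawl can be sketched as a sort order: most authoritative source first, then most recent update. The source ranking below is hypothetical, and grouping copies by normalized title is a deliberately crude stand-in; real deployments might use content fingerprints or explicit canonical links.

```python
from datetime import date

# Hypothetical source preference: lower rank means more authoritative.
SOURCE_RANK = {"policy-portal": 0, "wiki": 1, "shared-drive": 2}


def canonical_copy(copies):
    """Pick one copy: most authoritative source first, then most recently updated."""
    def sort_key(copy):
        rank = SOURCE_RANK.get(copy["source"], len(SOURCE_RANK))  # unknown sources rank last
        return (rank, -date.fromisoformat(copy["updated"]).toordinal())
    return min(copies, key=sort_key)


def dedupe_versions(results):
    # Crude grouping key (normalized title) used only for illustration.
    groups = {}
    for result in results:
        key = " ".join(result["title"].lower().split())
        groups.setdefault(key, []).append(result)
    return [canonical_copy(group) for group in groups.values()]


results = [
    {"doc_id": "drive-77", "title": "Travel Policy", "source": "shared-drive", "updated": "2021-03-01"},
    {"doc_id": "wiki-12", "title": "travel policy ", "source": "wiki", "updated": "2023-06-15"},
    {"doc_id": "portal-3", "title": "Travel policy", "source": "policy-portal", "updated": "2024-01-10"},
    {"doc_id": "wiki-40", "title": "Onboarding FAQ", "source": "wiki", "updated": "2022-01-01"},
    {"doc_id": "wiki-41", "title": "Onboarding FAQ", "source": "wiki", "updated": "2024-02-01"},
]
print([r["doc_id"] for r in dedupe_versions(results)])
```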

Change management receives too little attention

Most employees already have a default path to find answers: ask a teammate, scan recent chat threads, or return to a bookmarked document. AI search must displace those habits before it can create measurable value. That shift requires manager support, role-specific enablement, and clear guidance on when the tool should serve as the first stop.

Usage boundaries matter just as much. In high-stakes workflows such as HR policy interpretation, financial reporting, or legal review, teams need explicit rules for when human review remains mandatory. Without those rules, some employees rely on the system too much, while others avoid it entirely. Neither outcome helps adoption.

Scope expands faster than teams expect

Early success tends to attract more requests than the team can absorb. Departments ask for custom ranking logic, separate rollout plans, new source connections, workflow-specific prompts, and tailored analytics. The risk is not ambition itself — it is loss of focus before the core operating model proves stable.

The strongest teams use a narrow filter for what enters the next phase:

- High query concentration: Start where search demand clusters around a known set of questions.

- Source readiness: Favor repositories with clear ownership, decent structure, and current content.

- Easy validation: Choose use cases where teams can tell quickly whether the answer helped or missed the mark.

That filter reduces noise, keeps the backlog manageable, and gives the team cleaner signals for the next round of tuning.
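
Query concentration, the first filter, is straightforward to estimate from search logs. This sketch measures what share of all queries the most frequent normalized queries account for; the whitespace-and-case normalization and the `top_n` cutoff are simplifying assumptions.

```python
from collections import Counter


def query_concentration(queries, top_n=10):
    """Share of all queries covered by the top_n most frequent normalized queries."""
    if not queries:
        return 0.0
    normalized = Counter(" ".join(q.lower().split()) for q in queries)
    covered = sum(count for _, count in normalized.most_common(top_n))
    return covered / len(queries)


sample_queries = ["PTO policy", "pto  policy", "expense limit", "pto policy",
                  "vpn setup", "expense limit", "holiday calendar", "badge access"]
print(query_concentration(sample_queries, top_n=2))
```

Comparing this share across candidate departments gives a rough signal of where a small set of well-covered sources could answer most of the demand.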

Weak evaluation hides real problems

Many teams test AI search with curated queries from project members and subject matter experts. Those tests help at first, but they rarely reflect messy real-world traffic — short queries, internal shorthand, acronyms, half-formed requests, and edge cases tied to access controls. A system can look strong in a demo and still fail under normal employee use.

A stronger review process looks at production behavior, not just lab results. The most useful checks tend to include:

- Failure clusters: Query types that repeatedly produce weak or inconsistent results

- Source quality drift: Cases where low-trust or stale content starts to surface more often

- Answer verification: Whether users open cited material, reformulate the query, or abandon the result

- Access edge cases: Situations where the system blocks too much, exposes too much, or handles inherited permissions poorly

- Fallback behavior: Patterns where employees leave search and return to old channels such as chat or direct outreach
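
The answer-verification and fallback checks depend on session-level telemetry. This sketch assumes hypothetical event names (`query`, `open_citation`, `open_result`, `switch_to_chat`) and labels each session with one outcome plus a reformulation flag. What a given platform actually logs, and whether a switch to chat is observable at all, will differ.

```python
from collections import Counter


def classify_session(events):
    """Label one search session from an ordered list of hypothetical telemetry events."""
    if "open_citation" in events:
        outcome = "verified"      # user checked a cited source
    elif "open_result" in events:
        outcome = "clicked"       # user opened a result, but no citation
    elif "switch_to_chat" in events:
        outcome = "fallback"      # user left search for an old channel
    else:
        outcome = "abandoned"
    return {"outcome": outcome, "reformulated": events.count("query") > 1}


def summarize_sessions(sessions):
    if not sessions:
        return {}
    labels = [classify_session(s) for s in sessions]
    outcomes = Counter(label["outcome"] for label in labels)
    return {
        "outcome_share": {name: n / len(labels) for name, n in sorted(outcomes.items())},
        "reformulation_rate": sum(label["reformulated"] for label in labels) / len(labels),
    }


sessions = [
    ["query", "open_citation"],
    ["query", "query", "open_result"],
    ["query", "query", "switch_to_chat"],
    ["query"],
]
print(summarize_sessions(sessions))
```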

Without that level of review, teams can mistake surface fluency for real usefulness. The result looks polished, but the operational cracks stay hidden until adoption stalls.

How to set your AI search team up for long-term success

Long-term performance depends on operating discipline that looks closer to product management and service ownership than a launch checklist. The strongest teams treat AI search as a service with named owners, service levels, release controls, and a roadmap tied to business priorities.

That shift matters because enterprise search changes with the company itself. New repositories appear, org charts change, policy language evolves, and high-value questions move from simple retrieval to answer generation and task execution; the team needs a structure that can absorb that change without constant reorganization.

Build an operating model, not a temporary project team

A durable model starts with explicit ownership artifacts, not informal handoffs. High-performing teams keep a living operating file that covers source owners, system dependencies, escalation paths, release approval rules, and the success measures that matter to each function.

That operating file should answer a few questions with precision:

- Who owns each source after it goes live: Every indexed system needs a business steward and a technical steward. One owns content value and source relevance; the other owns sync reliability, schema mapping, and issue response.

- What qualifies a source for inclusion: New repositories should meet entry criteria before indexing starts — a clear business use case, identifiable source ownership, stable metadata, and testable access rules.

- What service levels apply: Teams should define thresholds for source freshness, incident response, and search issue resolution. Without those standards, quality problems sit in a queue until users stop trusting the system.

- What gates apply to major changes: Ranking updates, prompt changes, answer formatting changes, and source expansions need release notes, test plans, and rollback paths.
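
The operating-file questions above map naturally onto a small source registry. This sketch uses a hypothetical `SourceEntry` record with the entry criteria named above and a per-source freshness service level; field names and threshold values are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class SourceEntry:
    """One row of a hypothetical source registry kept in the operating file."""
    name: str
    business_steward: Optional[str]
    technical_steward: Optional[str]
    use_case: Optional[str]
    metadata_reviewed: bool
    access_rules_tested: bool
    freshness_sla: timedelta
    last_successful_sync: Optional[datetime] = None


def inclusion_gaps(src):
    """List unmet entry criteria; an empty list means the source may be indexed."""
    gaps = []
    if not src.use_case:
        gaps.append("no business use case")
    if not src.business_steward:
        gaps.append("no business steward")
    if not src.technical_steward:
        gaps.append("no technical steward")
    if not src.metadata_reviewed:
        gaps.append("metadata not reviewed")
    if not src.access_rules_tested:
        gaps.append("access rules untested")
    return gaps


def breaches_freshness_sla(src, now):
    # A source that has never synced successfully counts as stale.
    if src.last_successful_sync is None:
        return True
    return now - src.last_successful_sync > src.freshness_sla


now = datetime(2024, 6, 1, 12, 0, tzinfo=timezone.utc)
wiki = SourceEntry("wiki", business_steward="enablement lead", technical_steward="search platform team",
                   use_case="policy lookup", metadata_reviewed=True, access_rules_tested=True,
                   freshness_sla=timedelta(hours=4), last_successful_sync=now - timedelta(hours=1))
legacy = SourceEntry("legacy-drive", business_steward=None, technical_steward="IT operations",
                     use_case=None, metadata_reviewed=False, access_rules_tested=True,
                     freshness_sla=timedelta(hours=24), last_successful_sync=now - timedelta(days=3))
for src in (wiki, legacy):
    print(src.name, inclusion_gaps(src), breaches_freshness_sla(src, now))
```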

A model like this also protects continuity. Team changes, vendor changes, and budget changes are common in enterprise AI programs; documented ownership and release discipline keep the system stable when personnel or priorities shift.

Put governance and feedback on a fixed cadence

Governance becomes useful when it produces repeatable decisions instead of one-off approvals. The most resilient teams run a standing review cycle that covers source intake, quality exceptions, policy changes, and expansion requests in one place.

That review cycle should include a compact scorecard with metrics that expose operational health from multiple angles:

- Coverage quality: Track zero-result queries, low-confidence answer rates, and the percentage of priority repositories indexed. This shows where the system lacks enough usable knowledge to support real work.

- Trust quality: Track citation usage, correction rates, unresolved user feedback, and policy exceptions. These signals reveal whether employees believe the answers and know when to verify them.

- Operational quality: Track failed syncs, schema breaks, stale indexes, and unresolved incidents by source. This reveals where technical debt will soon affect the user experience.

- Business quality: Track usage by department, query success by workflow, and measurable impact such as faster case resolution, reduced duplicate work, or shorter onboarding time.

User feedback should enter the same operating rhythm rather than sit in a separate inbox. Department champions from support, engineering, sales, HR, and IT can review flagged results, identify patterns in missed intent, and help the core team decide whether the fix belongs in source curation, ranking logic, answer presentation, or user training.

Grow internal capability before you widen the use case

Sustained quality depends on people who know the company’s language, decision paths, and content habits. Outside specialists can accelerate setup, but internal capability determines whether the system stays useful a year later.

The best teams build that capability with a deliberate skills matrix:

- Repository stewardship: Team members should know how to audit a source for ownership, metadata discipline, archival sprawl, and business relevance before it enters the index.

- Evaluation design: Teams need people who can build test sets from real employee questions, spot drift across departments, and judge answer usefulness in context rather than in isolation.

- Operational analytics: Someone should understand how to read scorecards, trace quality drops back to a source or release, and separate adoption issues from system issues.

- Workflow design: As search matures into assistants or agentic flows, teams need people who can map task sequences, handoff points, and approval requirements across departments.

- Program resilience: Mature teams plan for role coverage, succession, and knowledge transfer so that search quality does not depend on one engineer, one admin, or one enthusiastic sponsor.

Expansion should follow readiness gates, not enthusiasm. Teams that move into assistants, direct answers, or multi-step automation too early usually expose weaknesses that basic search had masked — poor source ownership, inconsistent content hygiene, weak test coverage, or unclear approval logic. A mature team expands only after it can prove that the underlying service is stable, measurable, and well understood across the business.

The team you build around AI search matters more than the technology you select. Every decision about source quality, access rules, rollout sequence, and long-term ownership flows through the people you put in charge — and the operating discipline they bring to the work.

If you're ready to see how the right platform can support your team from day one, request a demo to explore how we can help transform your workplace.